Taalas replaces programmable GPUs with hardwired AI chips to reach 17,000 tokens per second for inference.

In the advanced world of AI infrastructure, the industry has operated under one assumption: flexibility is king. We build general-purpose GPUs because AI models change every week, and we need programmable silicon that can adapt to the next research breakthrough.

But Taalas, the Toronto-based startup, thinks flexibility is exactly what’s holding AI back. According to the Taalas team, if we want AI to be as common and cheap as plastic, we should stop ‘emulating’ intelligence on general-purpose computers and start ‘casting’ it directly in silicon.

The Problem: The ‘Memory Wall’ and GPU Tax

The current cost of running a Large Language Model (LLM) is driven by one fundamental barrier: the Memory Wall.

Traditional processors (GPUs) are built around an ‘Instruction Set Architecture’ (ISA), and they keep compute separate from memory. When running an inference pass on a model like Llama 3, the chip spends most of its time and energy shuttling weights from High Bandwidth Memory (HBM) to the processing cores. This ‘data movement tax’ accounts for nearly 90% of energy consumption in modern AI data centers.
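To make the memory wall concrete, here is a rough back-of-envelope sketch. It assumes that generating each token for a single user requires streaming every weight from HBM once, so throughput is capped by memory bandwidth divided by model size. The parameter count, 16-bit weight size, and 3.35 TB/s bandwidth are illustrative assumptions for an H100-class part, not figures from Taalas.

```python
# Back-of-envelope ceiling for single-stream decoding on a GPU.
# Every constant here is an illustrative assumption, not a measurement.

PARAMS = 8e9             # assumed parameter count for an 8B model
BYTES_PER_PARAM = 2      # assumes 16-bit weights
HBM_BANDWIDTH = 3.35e12  # bytes/s, assumed peak for an H100-class GPU


def max_decode_tokens_per_second(params, bytes_per_param, bandwidth):
    """Upper bound when each token requires reading all weights once."""
    weight_bytes = params * bytes_per_param
    return bandwidth / weight_bytes


bound = max_decode_tokens_per_second(PARAMS, BYTES_PER_PARAM, HBM_BANDWIDTH)
print(f"Bandwidth-bound ceiling: {bound:.0f} tokens/s per stream")
```

Under these assumptions the ceiling lands at roughly 200 tokens per second per stream, no matter how fast the arithmetic units are. Batching many users raises aggregate throughput, but the per-stream limit is set by memory traffic rather than compute.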

Taalas’s answer: eliminate the memory fetch cycle entirely. Using a proprietary automated design flow, Taalas translates a model’s computational graph directly into a physical chip layout. In their HC1 (Hardcore 1) chip, the model’s weights and architecture are literally embedded in the silicon wiring.

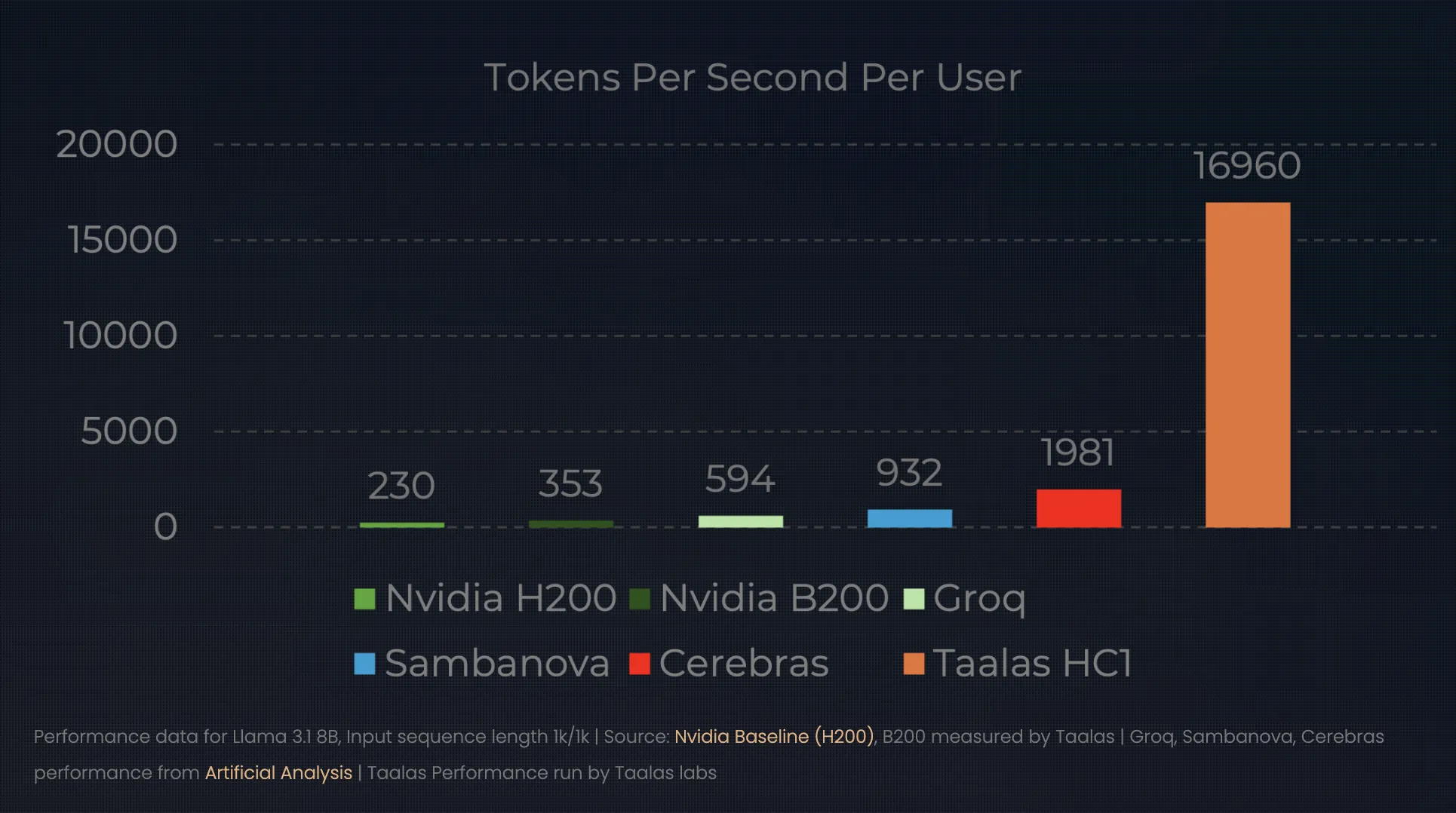

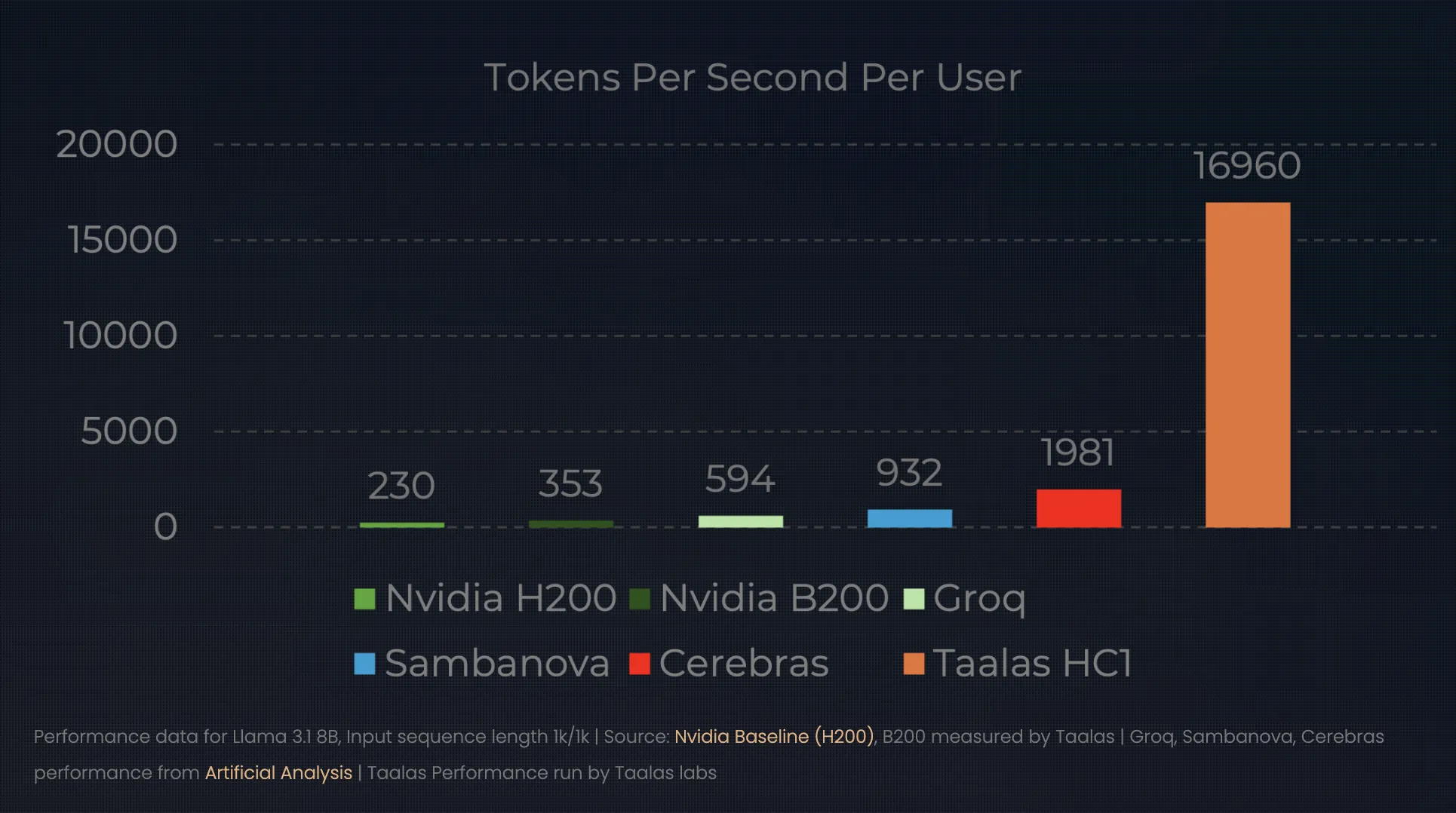

Hardwired Models: 17,000 Tokens per Second

The results of this ‘direct-to-silicon’ approach redefine the performance ceiling for inference. In their latest unveiling, Taalas showed the HC1 running the Llama 3.1 8B model. While a top-of-the-line NVIDIA H100 might give a single user ~150 tokens per second, the HC1 delivers 16,000 to 17,000 tokens per second.

This changes the ‘economic unit’ of AI:

- Performance: A single HC1 chip can outperform a small GPU data center in terms of raw throughput for a particular model.

- Efficiency: Taalas claims a 1000x improvement in efficiency (performance per watt and performance per dollar) compared to conventional chips.

- Infrastructure: Because the weights are hardwired, there is no need for external HBM or complex liquid cooling systems. An air-cooled rack can house ten of these 250W cards, bringing the power of an entire GPU cluster to a single server box.
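The figures quoted above can be combined in a few lines of arithmetic. The sketch below uses only the numbers from this article (treat them as vendor claims) and additionally assumes that each card draws its full 250 W while sustaining the top quoted throughput, which the article does not state.

```python
# Figures quoted in the article; treat them as vendor claims.
HC1_TOKENS_PER_S = 17_000  # upper end of the quoted 16,000-17,000 range
GPU_TOKENS_PER_S = 150     # quoted single-user H100 figure
CARD_POWER_W = 250         # quoted per-card power
CARDS_PER_RACK = 10        # quoted cards per air-cooled rack


def summarize(hc1_tps, gpu_tps, card_power_w, cards):
    """Derive headline ratios from the quoted figures."""
    return {
        "speedup": hc1_tps / gpu_tps,
        "rack_tokens_per_s": hc1_tps * cards,
        "rack_power_w": card_power_w * cards,
        # Assumes full card power at full throughput (not stated in the article).
        "joules_per_token": card_power_w / hc1_tps,
    }


stats = summarize(HC1_TOKENS_PER_S, GPU_TOKENS_PER_S, CARD_POWER_W, CARDS_PER_RACK)
print(f"Speedup vs. single-user GPU stream: {stats['speedup']:.0f}x")
print(f"Rack throughput: {stats['rack_tokens_per_s']:,} tokens/s")
print(f"Rack power: {stats['rack_power_w']:,} W")
print(f"Energy per token: {stats['joules_per_token'] * 1000:.1f} mJ")
```

On these numbers, one card is about 113 times faster than the quoted single-user GPU stream, and a ten-card rack would deliver 170,000 tokens per second at 2.5 kW. The comparison is against a single-user stream, so it says nothing about how a batched GPU deployment would fare.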

Breaking the 60-Day Barrier: The Automated Foundry

The obvious ‘catch’ for AI developers is flexibility. If you make a model into a chip today, what happens if a better model comes out tomorrow? Historically, designing an ASIC (Application-Specific Integrated Circuit) took two years and tens of millions of dollars.

Taalas solved this with automation. They built a foundry-like compiler system that takes model weights and generates a chip design in about a week. By focusing on a simplified workflow, in which only the top metal masks of the silicon change, they have cut the ‘weights-to-silicon’ turnaround to just two months.

This allows for a ‘seasonal’ hardware cycle. A company can fine-tune a frontier model in the spring and have thousands of special, high-performance chips deployed in the summer.

Market Shift: From Shovels to Stamps

This change marks an important moment in the AI hype cycle. We are moving from the ‘Research and Training’ phase, where GPUs are critical for flexibility, to the ‘Deployment and Inference’ phase, where cost per token is the only metric that matters.

If Taalas succeeds, the AI market will be divided into two distinct categories:

- General Purpose Training: Led by NVIDIA and AMD, it provides the large, flexible clusters needed to discover and train new architectures.

- Specialized Inference: Led by ‘foundries’ such as Taalas, which take those proven architectures and ‘print’ them into cheap, ubiquitous silicon for everything from smartphones to industrial sensors.

Key Takeaways

- The ‘Hardwired’ Paradigm Shift: Taalas is moving from software-defined AI (running models on general-purpose GPUs) to hardware-defined AI. By ‘baking’ specific model weights and architectures directly into the silicon, they eliminate general-purpose instruction overhead, making the model itself the processor.

- Death of the Memory Wall: Traditional AI hardware spends ~90% of its energy moving data between memory and compute. Taalas’s HC1 (Hardcore 1) chip eliminates the ‘Memory Wall’ by literally wiring the model parameters into the metal layers of the chip, removing the need for expensive High Bandwidth Memory (HBM).

- 1000x Efficiency Leap: By removing the ‘data movement tax’, Taalas claims a 1,000x improvement in performance-per-watt and performance-per-dollar. In practice, this means the HC1 can hit 17,000 tokens per second on the Llama 3.1 8B model, far outpacing a standard GPU while using much less power.

- Automated ‘Direct-to-Silicon’ Foundry: To solve the problem of model obsolescence, Taalas uses a proprietary automated design flow. This cuts the time to create a custom AI chip from years to mere weeks, allowing companies to ‘print’ their fine-tuned models into silicon on a seasonal cycle.

- The Future of Commodity AI: This technology represents a shift from ‘Cloud-First’ to ‘Device-Native’ AI. As inference becomes a cheap, hardwired commodity, AI will move from centralized servers to local, low-power hardware (from smartphones to industrial sensors) with no latency and no subscription costs.
