Stanford Researchers Release OpenJarvis: A Local-First Framework for Building Personal AI Agents with Tools, Memory, and Learning

Stanford researchers have released OpenJarvis, an open-source framework for building personal AI agents that run entirely on-device. The project comes from Stanford’s Scaling Intelligence Lab and is presented as both a research platform and deployment-ready infrastructure for local-first AI systems. It focuses not just on model execution, but also on the broader software stack needed to make on-device agents usable and scalable over time.

Why OpenJarvis?

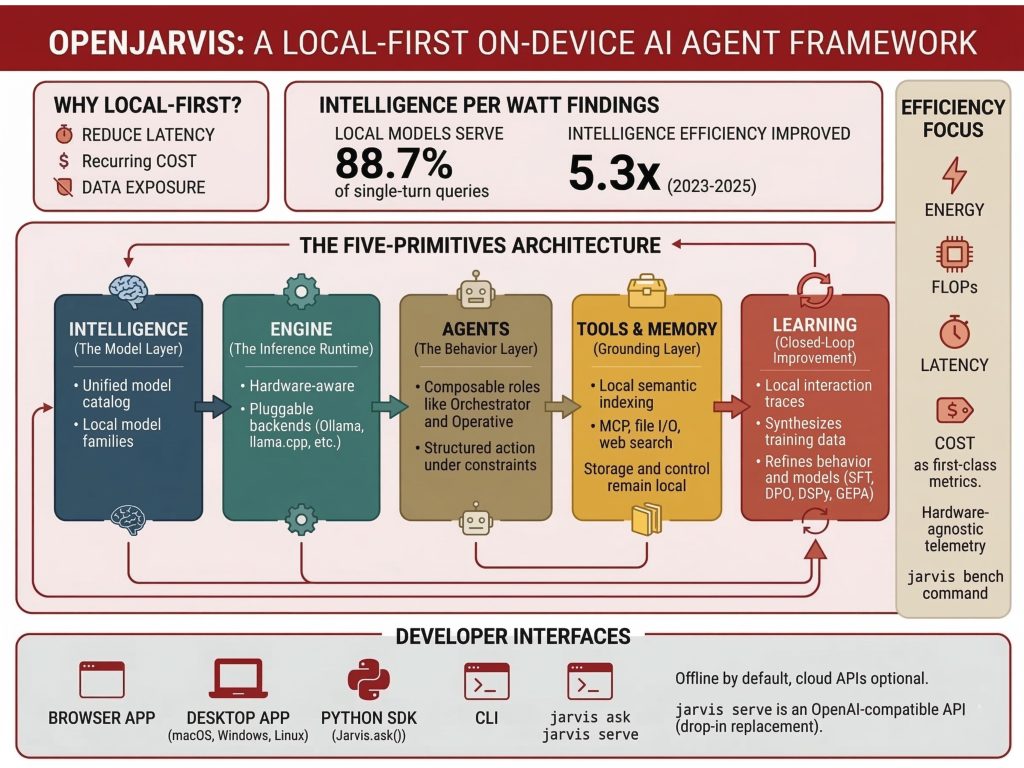

According to the Stanford research team, many current personal AI projects still keep the local component thin while routing the core reasoning through external cloud APIs. That design introduces latency, recurring costs, and data exposure concerns, especially for assistants that operate over personal files, messages, and persistent user context. OpenJarvis is designed to shift that balance by making local execution the default and cloud usage optional.

The research team ties this release to its earlier Intelligence Per Watt research. In that work, they report that local language models and local accelerators can accurately handle 88.7% of single-turn chat and reasoning queries at interactive latencies, while intelligence efficiency improved by 5.3× from 2023 to 2025. OpenJarvis is positioned as the software layer that follows from that result: if consumer models and hardware are becoming practical for many local workloads, developers need a common stack to build and evaluate those systems.

Five-Primitives Architecture

At the architectural level, OpenJarvis is organized around five primitives: Intelligence, Engine, Agents, Tools & Memory, and Learning. The research team describes these as composable abstractions that can be benchmarked, substituted, and optimized independently or used together as an integrated system. This matters because local AI projects often mix inference, orchestration, tools, retrieval, and adaptation logic into a single application that is difficult to reproduce. OpenJarvis instead tries to give each layer a more explicit role.

Intelligence: The Model Layer

The Intelligence primitive is the model layer. It sits on top of a changing set of local model families and provides a unified model catalog so developers don’t have to manually track parameter counts, hardware fit, or memory trade-offs for every release. The goal is to make model selection easier to study separately from other parts of the system, such as the inference backend or agent logic.

Engine: Inference Runtime

The Engine primitive is the inference runtime. It is a common interface over backends such as Ollama, vLLM, SGLang, llama.cpp, and cloud APIs. The engine layer is framed more broadly as hardware-aware execution: commands such as jarvis init detect the available hardware and recommend a suitable engine and model configuration, while jarvis doctor helps maintain that setup. For developers, this is one of the most useful parts of the design: the framework does not assume a single runtime, but treats inference as a pluggable interface layer.
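To make the idea of a pluggable inference layer concrete, here is a minimal sketch in Python. It is not OpenJarvis code: the `Engine` protocol, the `EchoEngine` stand-in, and the selection thresholds in `recommend_engine` are all hypothetical names and values chosen for illustration. Only the backend names come from the article.

```python
from dataclasses import dataclass
from typing import Protocol


class Engine(Protocol):
    """Hypothetical common interface that every inference backend implements."""

    name: str

    def generate(self, prompt: str, max_tokens: int = 64) -> str: ...


@dataclass
class EchoEngine:
    """Stand-in backend for illustration; a real one would call Ollama, vLLM, etc."""

    name: str

    def generate(self, prompt: str, max_tokens: int = 64) -> str:
        # Echo the (truncated) prompt so the example runs without any model.
        return f"[{self.name}] {prompt[:max_tokens]}"


def recommend_engine(has_gpu: bool, vram_gb: float) -> str:
    """Toy hardware-aware rule; the 16 GB threshold is an illustrative assumption."""
    if has_gpu and vram_gb >= 16:
        return "vllm"
    return "ollama" if has_gpu else "llama.cpp"


# Registry of interchangeable backends keyed by name.
ENGINES: dict[str, Engine] = {n: EchoEngine(n) for n in ("ollama", "vllm", "llama.cpp")}


def run(prompt: str, has_gpu: bool, vram_gb: float) -> str:
    """Pick a backend from detected hardware, then call it through the shared interface."""
    engine = ENGINES[recommend_engine(has_gpu, vram_gb)]
    return engine.generate(prompt)
```

Because callers depend only on `generate()`, swapping the runtime means changing the registry entry rather than the agent code, which is the property the Engine primitive is described as providing.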

Agents: The Behavior Layer

The Agents primitive is the behavior layer. Stanford describes it as the component that turns model capability into structured action under real device constraints such as bounded context windows, limited working memory, and efficiency limits. Rather than relying on a single general-purpose agent, OpenJarvis supports composable roles. The Stanford article specifically mentions roles such as the Orchestrator, which breaks complex tasks into subtasks, and the Operative, which is intended as a lightweight executor for recurring personal workflows. The documentation also describes the agent harness as managing the system prompt, tools, context, retry logic, and exit logic.

Tools & Memory: Grounding the Agent

The Tools & Memory primitive is the grounding layer. This primitive includes support for MCP (Model Context Protocol) for standardized tool use, Google A2A for agent-to-agent communication, and semantic indexing for local retrieval over notes, documents, and papers. It also supports messaging platforms, webchat, and webhooks, and covers a set of smaller tools including web search, a calculator, file I/O, code interpretation, retrieval, and external MCP servers. OpenJarvis is not just a local chat interface; it is intended to connect local models to tools and persistent personal context while keeping storage and control local by default.
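The grounding layer described above pairs a tool registry with a local retrieval index. The sketch below shows that shape in plain Python under loud assumptions: the `tool` decorator, `TOOLS` registry, and `MemoryIndex` class are hypothetical, and bag-of-words cosine similarity is used as a simple stand-in for a real embedding-based semantic index.

```python
import math
import operator
from collections import Counter
from typing import Callable

# Hypothetical registry mapping tool names to callables.
TOOLS: dict[str, Callable[[str], str]] = {}
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}


def tool(name: str):
    """Register a function as a named tool the agent can call."""
    def register(fn: Callable[[str], str]) -> Callable[[str], str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("calculator")
def calculator(expression: str) -> str:
    # Accepts only "<number> <op> <number>" to avoid evaluating arbitrary code.
    left, op, right = expression.split()
    return str(OPS[op](float(left), float(right)))


def _vector(text: str) -> Counter:
    return Counter(text.lower().split())


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryIndex:
    """Toy local index; word counts stand in for learned embeddings."""

    def __init__(self) -> None:
        self._docs: list[tuple[str, Counter]] = []

    def index(self, text: str) -> None:
        self._docs.append((text, _vector(text)))

    def search(self, query: str, k: int = 1) -> list[str]:
        q = _vector(query)
        ranked = sorted(self._docs, key=lambda d: _cosine(q, d[1]), reverse=True)
        return [text for text, _ in ranked[:k]]
```

Everything here stays in process memory, which mirrors the local-by-default stance of the design; a production index would persist to disk and use a proper embedding model.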

Learning: Closed-Loop Improvement

The fifth primitive, Learning, is what gives the framework a closed-loop improvement path. Stanford researchers describe it as a layer that uses local interaction traces to synthesize training data, refine agent behavior, and improve model selection over time. OpenJarvis supports optimization across four layers of the stack: model weights, LM prompts, agent logic, and the inference engine. Examples listed by the research team include SFT, GRPO, DPO, prompt optimization with DSPy, agent optimization with GEPA, and engine-level tuning such as quantization selection and batch scheduling.

Efficiency as a First-Class Metric

A major technical point in OpenJarvis is its emphasis on efficiency-aware evaluation. The framework treats energy, FLOPs, latency, and dollar cost as first-class constraints alongside task quality. It also highlights a hardware-agnostic telemetry system for profiling energy on NVIDIA GPUs via NVML, AMD GPUs, and Apple Silicon via powermetrics, with 50 ms sampling intervals. The jarvis bench command is intended to standardize benchmarking of latency, throughput, and energy per query. This matters because local deployment is not only about whether a model can answer a question, but whether it can do so within real limits on power, memory, and response time.
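Energy per query follows directly from sampled power readings: integrate power over time and divide by the number of queries served. The sketch below does this with the trapezoidal rule at the 50 ms interval mentioned above. The function names are hypothetical and the samples are supplied by the caller; this is not how jarvis bench is implemented, only an illustration of the arithmetic.

```python
def energy_joules(power_watts: list[float], interval_s: float = 0.05) -> float:
    """Integrate evenly spaced power samples (watts) into energy (joules).

    Uses the trapezoidal rule: each pair of adjacent samples contributes
    their average power multiplied by the sampling interval.
    """
    if len(power_watts) < 2:
        return 0.0
    return sum((a + b) / 2 * interval_s for a, b in zip(power_watts, power_watts[1:]))


def energy_per_query(power_watts: list[float], num_queries: int,
                     interval_s: float = 0.05) -> float:
    """Average energy in joules spent per query over the sampled window."""
    if num_queries <= 0:
        raise ValueError("num_queries must be positive")
    return energy_joules(power_watts, interval_s) / num_queries
```

For example, 21 samples at a steady 100 W span one second (20 intervals of 50 ms), which is about 100 J in total, or about 25 J per query if four queries were served in that window.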

Developer Interfaces and Usage Options

From a developer’s perspective, OpenJarvis exposes several entry points. The official docs show a browser app, a desktop app, a Python SDK, and a CLI. The browser-based interface can be started with ./scripts/quickstart.sh, which installs dependencies, starts Ollama and a local model, launches the backend and frontend, and opens the local UI. The desktop app is available for macOS, Windows, and Linux, with the backend still running on the user’s machine. The Python SDK exposes a Jarvis() object and methods such as ask() and ask_full(), while the CLI includes commands such as jarvis ask, jarvis serve, jarvis memory index, and jarvis memory search.

The docs also state that all core functionality works without a network connection, while cloud APIs are optional. For dev teams building local applications, another practical feature is jarvis serve, which starts a FastAPI server with SSE streaming and is described as a drop-in replacement for OpenAI clients. That lowers migration costs for developers who want to prototype against an API-style interface while keeping inference local.
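For readers unfamiliar with SSE streaming, the sketch below shows how a client might consume such a stream. It assumes the server follows the OpenAI chat-completions streaming format (lines prefixed with `data:`, JSON chunks carrying `choices[0].delta.content`, and a final `data: [DONE]`); the article only says the server is a replacement for OpenAI clients, so the exact wire format is an assumption. The helper names and the `"local-model"` identifier are hypothetical.

```python
import json
from typing import Iterable, Iterator


def build_chat_request(prompt: str, model: str = "local-model") -> dict:
    """Build an OpenAI-style streaming chat request body (model name is a placeholder)."""
    return {
        "model": model,
        "stream": True,
        "messages": [{"role": "user", "content": prompt}],
    }


def iter_sse_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from an OpenAI-style SSE stream of chat chunks."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alive lines and non-data fields
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        text = chunk["choices"][0].get("delta", {}).get("content")
        if text:
            yield text
```

In practice the `lines` iterable would come from an HTTP response body read line by line; keeping the parser separate from the transport makes it easy to test without a running server.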
