Model Context Protocol (MCP) vs. AI Agent Skills: A Deep Dive into Structured Tools and Behavioral Guidance for LLMs

Recent developments in the agent ecosystem have focused on enabling AI agents to interact with external tools and access domain-specific knowledge more effectively. Two common approaches that have emerged are skills and MCP. Although they may seem similar at first glance, they differ in how they are set up, how they execute tasks, and the audience they are designed for. In this article, we will look at what each approach offers and examine their main differences.

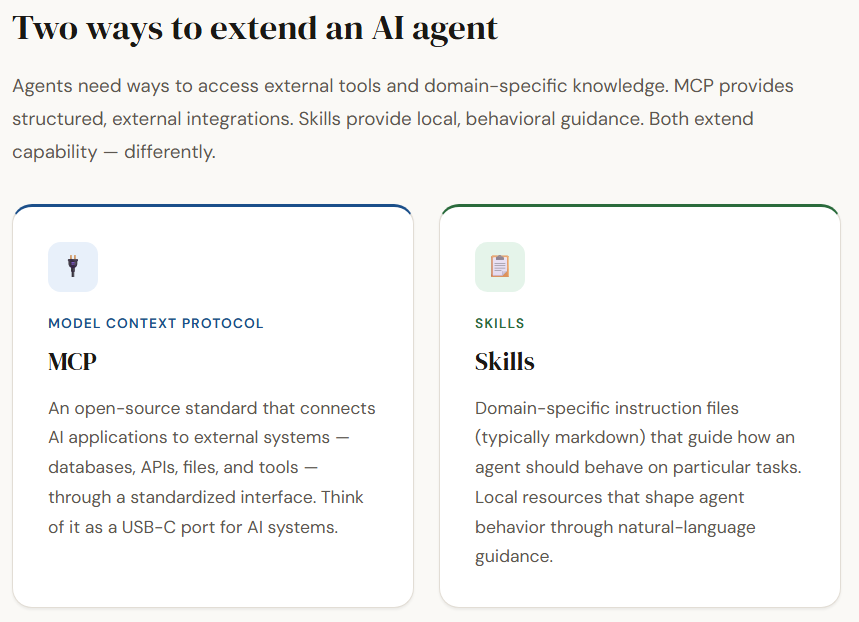

Model Context Protocol (MCP)

Model Context Protocol (MCP) is an open-source standard that allows AI applications to communicate with external systems such as databases, local files, APIs, or specialized tools. It extends the capabilities of large language models by exposing tools, resources (structured content such as documents or files), and prompts that the model can use during reasoning. In simple terms, MCP acts as a standardized interface, much as a USB-C port connects devices, making it easier for AI systems like ChatGPT or Claude to interact with external data and services.

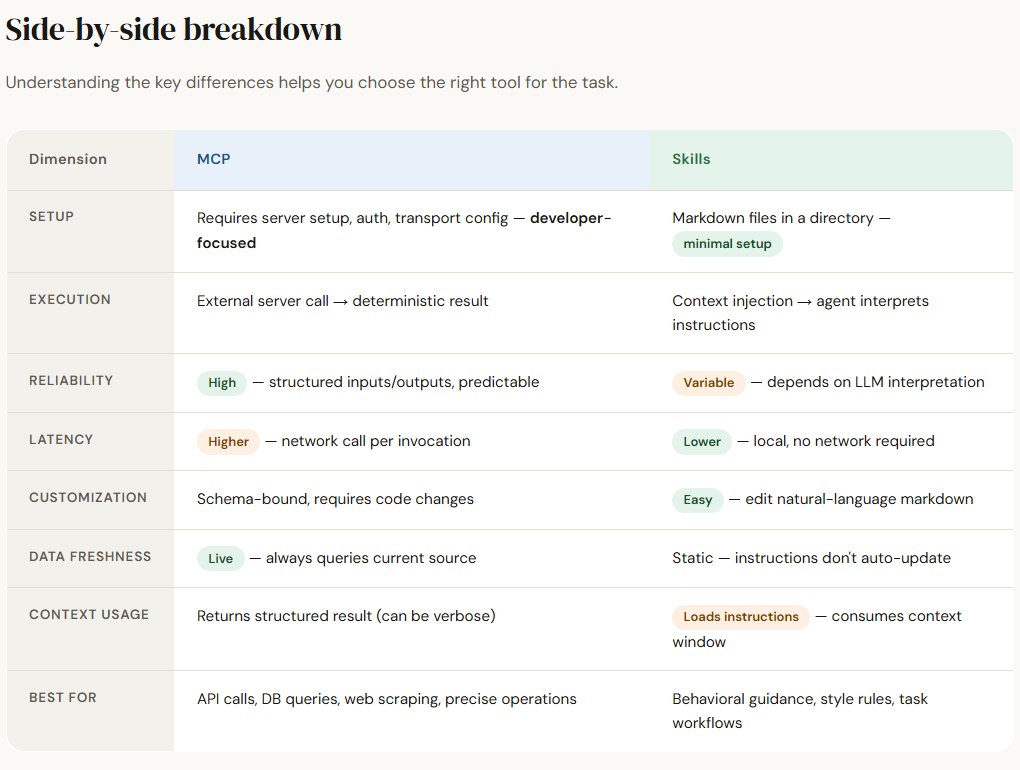

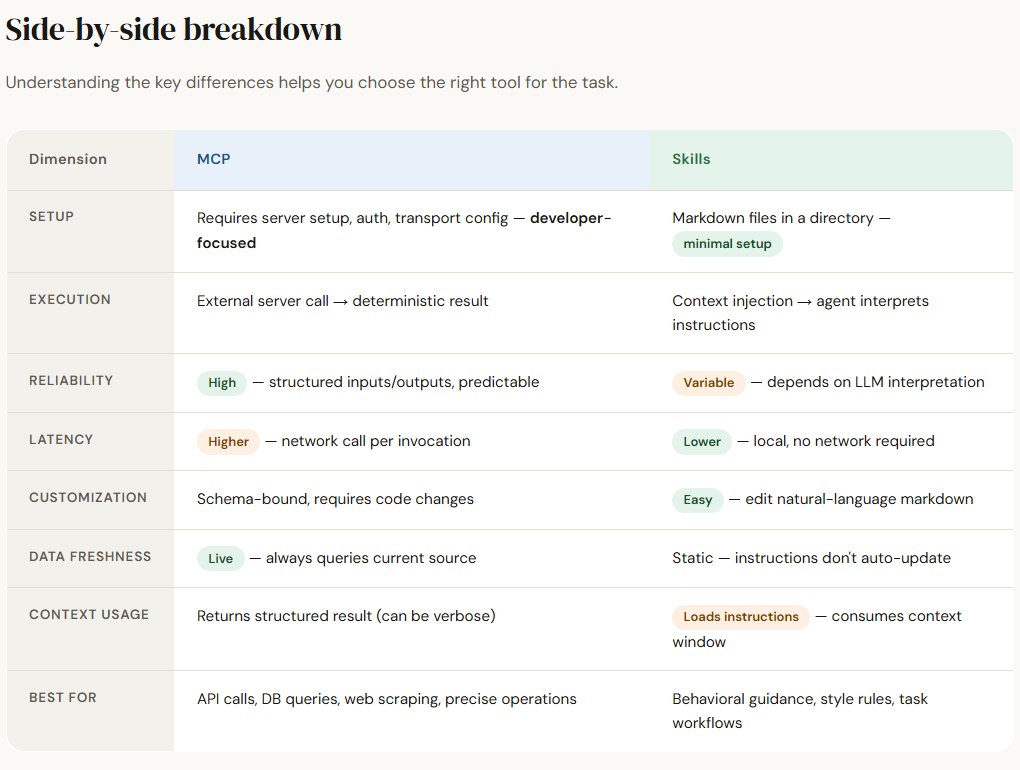

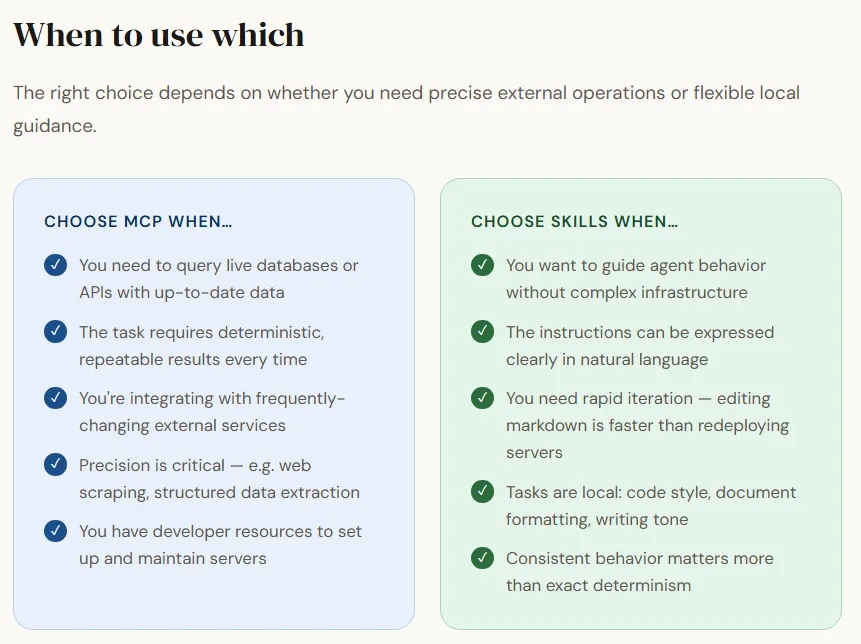

Although MCP servers are not especially difficult to set up, they are designed mainly for developers who are comfortable with concepts such as authentication, transports, and command-line interfaces. Once configured, MCP enables predictable and structured interactions. Each tool typically performs a specific task and returns a deterministic result for the same input, which makes MCP reliable for precise tasks such as web scraping, database queries, or API calls.

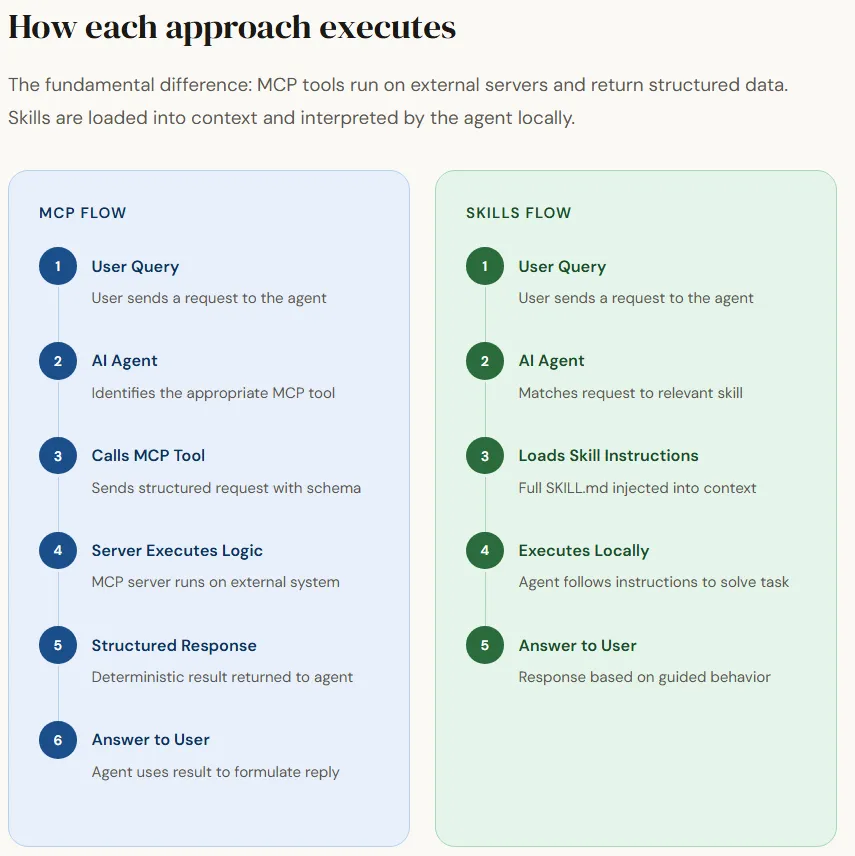

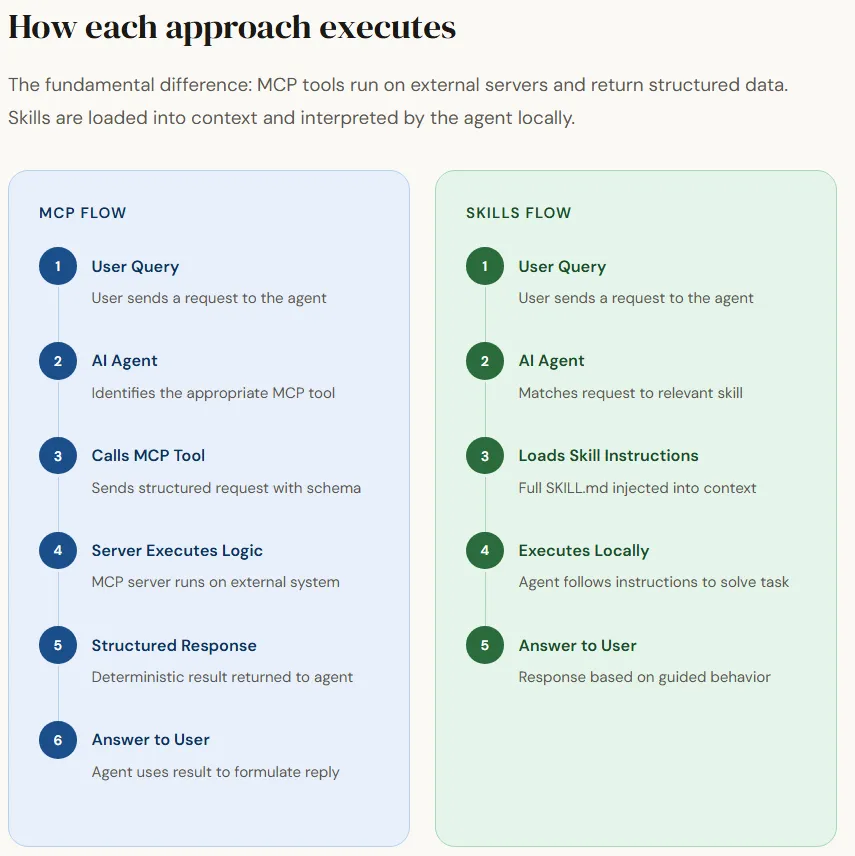

Typical MCP Workflow

User Query → AI Agent → Calls MCP Tool → MCP Server Executes Logic → Returns Structured Response → Agent Uses Result to Respond to User
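The flow above can be sketched in a few lines of Python. This is a simplified, in-process stand-in and not a real MCP server: the tool name `get_row_count`, the `FAKE_DB` data, and the helper functions are all hypothetical. The message shape loosely follows the JSON-RPC style `tools/call` request that MCP uses, but a real server also handles initialization, transports, and fuller schema validation.

```python
import json

FAKE_DB = {"users": 3, "orders": 7}  # illustrative data only

# Hypothetical tool registry: each tool declares a description,
# an input schema, and a deterministic handler.
TOOLS = {
    "get_row_count": {
        "description": "Return the number of rows in a named table.",
        "input_schema": {
            "type": "object",
            "properties": {"table": {"type": "string"}},
            "required": ["table"],
        },
        "handler": lambda args: {"table": args["table"], "rows": FAKE_DB[args["table"]]},
    }
}


def handle_request(raw: str) -> str:
    """Server side: execute one tools/call request, return a JSON response."""
    request = json.loads(raw)
    params = request["params"]
    tool = TOOLS[params["name"]]
    # Check required arguments against the tool's declared schema.
    missing = [k for k in tool["input_schema"]["required"] if k not in params["arguments"]]
    if missing:
        result = {"isError": True, "content": [{"type": "text", "text": f"missing: {missing}"}]}
    else:
        payload = tool["handler"](params["arguments"])
        result = {"isError": False, "content": [{"type": "text", "text": json.dumps(payload)}]}
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


def agent_call(tool_name: str, arguments: dict) -> dict:
    """Agent side: build the request, send it, and unpack the result."""
    request = json.dumps({
        "jsonrpc": "2.0", "id": 1, "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })
    return json.loads(handle_request(request))["result"]
```

Calling `agent_call("get_row_count", {"table": "orders"})` twice yields the same structured result both times, which is the predictability described above; omitting the `table` argument produces a structured error instead of a guess.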

Limitations of MCP

While MCP provides a powerful way for agents to interact with external systems, it also introduces several limitations in the context of AI agent workflows. One key challenge is tool scalability and discovery. As the number of MCP tools increases, the agent must rely on tool names and descriptions to identify the correct one, while also adhering to the input schema of each tool.

This can complicate tool selection and has led to the development of solutions such as MCP gateways or discovery layers that help agents navigate large tool ecosystems. Additionally, poorly designed tools may return very large responses, which can clutter the agent's context window and reduce reasoning efficiency.
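To make the two problems concrete, here is a minimal sketch of description-based tool selection and of capping an oversized tool response before it reaches the context window. The word-overlap scoring is a deliberately naive assumption (in practice the LLM itself, or a gateway, does the matching), and the tool entries and the `max_chars` budget are made up for illustration.

```python
def score(query: str, tool: dict) -> int:
    """Count query words that also appear in the tool's name or description."""
    words = set(query.lower().split())
    text = (tool["name"].replace("_", " ") + " " + tool["description"]).lower()
    return len(words & set(text.split()))


def select_tool(query: str, tools: list[dict]) -> dict:
    """Pick the tool whose name and description best overlap the query."""
    return max(tools, key=lambda t: score(query, t))


def cap_response(text: str, max_chars: int = 200) -> str:
    """Truncate a tool response so it cannot flood the context window."""
    if len(text) <= max_chars:
        return text
    omitted = len(text) - max_chars
    return text[:max_chars] + f"\n[truncated {omitted} characters]"


# Hypothetical tool catalog, for illustration only.
TOOLS = [
    {"name": "search_web", "description": "search the web for pages matching a query"},
    {"name": "query_database", "description": "run a sql query against the orders database"},
]
```

With only two tools the overlap score separates them easily. With hundreds of similarly described tools the scores bunch together, which is the discovery problem that gateways and discovery layers try to address.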

Another important limitation is latency and operational overhead. Since MCP tools often involve network calls to external services, every invocation introduces additional latency compared to local operations. This can slow down multi-step agent workflows where several tools need to be called in sequence.
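A quick back-of-the-envelope sketch shows how the overhead accumulates when calls are chained. The function name and all numbers below are assumed purely for illustration; they are not measurements of any real MCP deployment.

```python
def sequential_latency_ms(step_costs_ms: list[float], network_overhead_ms: float) -> float:
    """Total time for steps that must run one after another,
    where each step pays its own compute cost plus a fixed network round trip."""
    return sum(cost + network_overhead_ms for cost in step_costs_ms)


# Three dependent steps with assumed compute costs of 20, 35, and 15 ms.
steps = [20, 35, 15]
remote_total = sequential_latency_ms(steps, network_overhead_ms=120)  # 430
local_total = sequential_latency_ms(steps, network_overhead_ms=0)     # 70
```

Under these assumed numbers the network round trips, not the work itself, dominate the total, and the gap grows linearly with the number of sequential calls.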

In addition, MCP interactions require structured server setup and session-based communication, which add complexity to deployment and maintenance. While this trade-off is generally acceptable when accessing external data or services, it may not work well for tasks that could be handled locally within the agent.

Skills

Skills are domain-specific instructions that guide how an AI agent should behave when handling certain tasks. Unlike MCP tools, which rely on external services, skills are typically local resources, often written in Markdown files, that contain structured instructions, references, and sometimes code snippets.

If the user's request matches the description of a skill, the agent loads the relevant instructions into its context and follows them while solving the task. In this way, skills act as a behavioral layer, shaping how the agent handles certain problems through natural language guidance rather than external tool calls.

The main advantage of skills is their simplicity and flexibility. They require little setup, can be easily customized in natural language, and are stored locally in directories rather than on external servers. Agents typically load only the name and description of each skill initially, and when a request matches a skill, the full instructions are loaded into the context and followed. This approach keeps the agent efficient while still allowing access to detailed task-specific guidance when needed.

General Skills Workflow

User Query → AI Agent → Matches Appropriate Skill → Loads Skill Instructions into Context → Performs Task Following Instructions → Returns Response to User
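The workflow above, including the idea of loading only names and descriptions up front, can be sketched as follows. The `Skill` class, `match_skill`, and `build_context` are hypothetical names, and the keyword match is a stand-in assumption: in a real agent the LLM decides which skill applies based on the descriptions.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Skill:
    name: str
    description: str
    load_instructions: Callable[[], str]  # called only when the skill is activated


def match_skill(query: str, skills: list[Skill]) -> Optional[Skill]:
    """Naive stand-in for the model's choice: match on words from the skill name."""
    query_words = set(query.lower().split())
    for skill in skills:
        if query_words & set(skill.name.lower().split("-")):
            return skill
    return None


def build_context(query: str, skills: list[Skill]) -> str:
    """Assemble the prompt: a short index of all skills, plus the full
    instructions of the matched skill only."""
    index = "\n".join(f"- {s.name}: {s.description}" for s in skills)
    parts = ["Available skills:\n" + index]
    matched = match_skill(query, skills)
    if matched is not None:
        parts.append(f"Active skill ({matched.name}):\n" + matched.load_instructions())
    parts.append("User: " + query)
    return "\n\n".join(parts)
```

Because `load_instructions` is a callable, the full text of a skill is read only after a match, so unrelated queries pay just for the one-line index entries.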

Skills Directory Structure

A typical skills directory structure organizes each skill into its own folder, making it easy for the agent to find and use it when needed. Each folder usually contains a main instruction file and optional scripts or reference documents that support the task.

    .claude/skills
    ├── pdf-parsing
    │   ├── script.py
    │   └── SKILL.md
    ├── python-code-style
    │   ├── REFERENCE.md
    │   └── SKILL.md
    └── web-scraping
        └── SKILL.md

In this structure, every skill contains a SKILL.md file, which is the main instruction document that tells the agent how to perform a task. The file usually includes metadata such as the skill name and description, followed by step-by-step instructions for the agent to follow when the skill is activated. Additional files such as scripts (script.py) or reference documents (REFERENCE.md) can also be included to provide code resources or extended instructions.
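As a rough illustration of how such a file might be read, the sketch below splits a SKILL.md into its metadata and its instructions. It assumes the metadata sits in a front matter block delimited by `---` lines with simple `key: value` pairs; the example file content is invented, and a real loader would more likely use a full YAML parser.

```python
def parse_skill_md(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md document into (metadata, instructions).

    Assumes front matter delimited by '---' lines with 'key: value' pairs.
    Files without front matter are returned as instructions only.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text.strip()
    try:
        # Index of the closing '---' delimiter.
        end = next(i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---")
    except StopIteration:
        return {}, text.strip()
    metadata = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            metadata[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return metadata, body


# Invented example content for a hypothetical web-scraping skill.
EXAMPLE = """---
name: web-scraping
description: Fetch a page and extract structured data from it.
---
1. Confirm the target URL with the user.
2. Fetch the page and extract the requested fields.
"""

metadata, instructions = parse_skill_md(EXAMPLE)
```

Only `metadata` needs to be kept in the agent's context at startup; `instructions` can stay on disk until the skill is activated.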

Limitations of Skills

While skills offer flexibility and easy customization, they also present some limitations when used in an AI agent workflow. The biggest challenge comes from the fact that skills are written as natural language instructions rather than deterministic code.

This means that the agent must interpret how to carry out the instructions, which can sometimes lead to misinterpretations, inconsistent execution, or hallucinations. Even if the same skill is invoked multiple times, the result may vary depending on how the LLM interprets the instructions.

Another limitation is that skills place a heavy reasoning burden on the agent. The agent must not only decide which skill to use and when, but also work out how to carry out the instructions within the skill. This increases the chances of failure if the instructions are unclear or the task requires precise execution.

Additionally, since skills depend on context injection, loading multiple or complex skills can consume valuable context space and affect performance in long conversations. As a result, while skills are more flexible in guiding behavior, they may be less reliable than structured tools when tasks require consistent, deterministic execution.

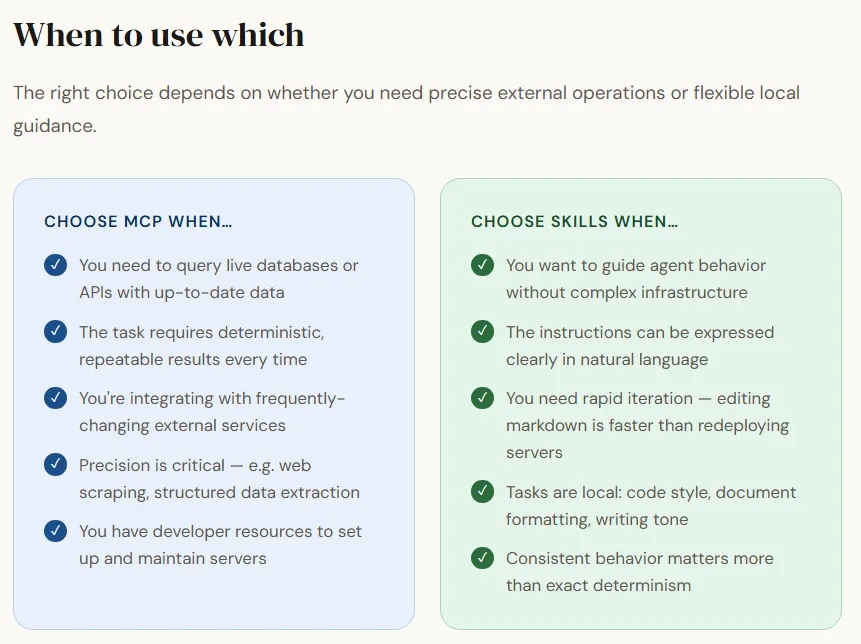

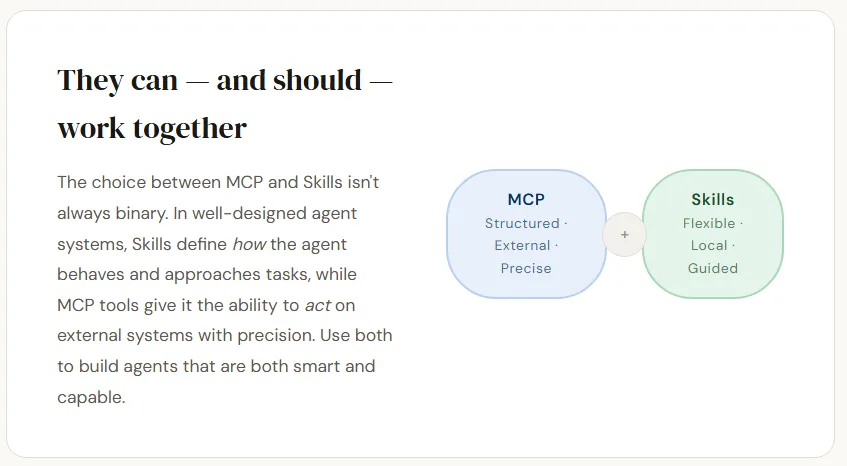

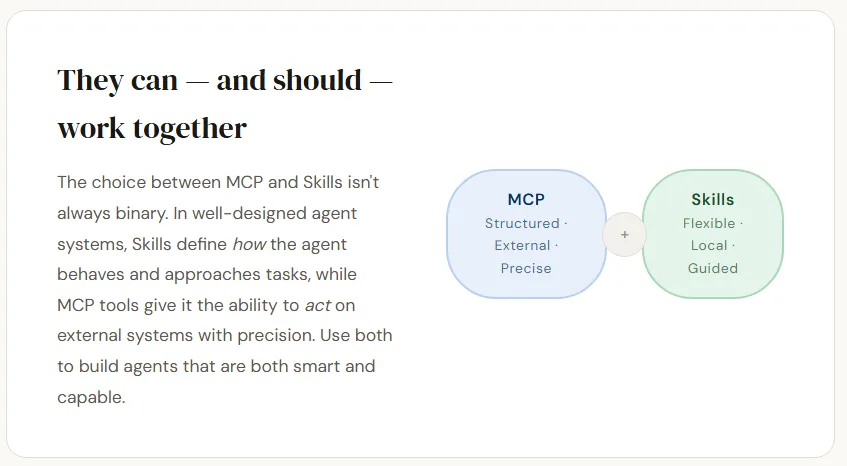

Both approaches provide ways to extend the capabilities of an AI agent, but they differ in how they provide information and perform tasks. One approach relies on structured tool interfaces, where the agent accesses external systems through well-defined inputs and outputs. This makes execution more predictable and ensures that information is retrieved from a centralized, continuously updated source, which is especially useful when the underlying information or APIs change frequently. However, this approach usually requires more technical setup and introduces network latency, since the agent needs to communicate with external services.

The other approach focuses on locally defined behavioral instructions that direct how the agent should handle certain tasks. These instructions are lightweight, easy to create, and can be customized quickly without complex infrastructure. Because they run locally, they avoid network overhead and are easy to maintain with minimal setup. However, since they rely on natural language guidance instead of programmed execution, they can sometimes be interpreted differently by the agent, leading to less consistent results.

Ultimately, the choice between the two depends largely on the use case: whether the agent needs precise, externally sourced operations or flexible, locally defined behavioral guidance.

I am a Civil Engineering graduate (2022) from Jamia Millia Islamia, New Delhi, and I am very interested in Data Science, especially Neural Networks and their applications in various fields.