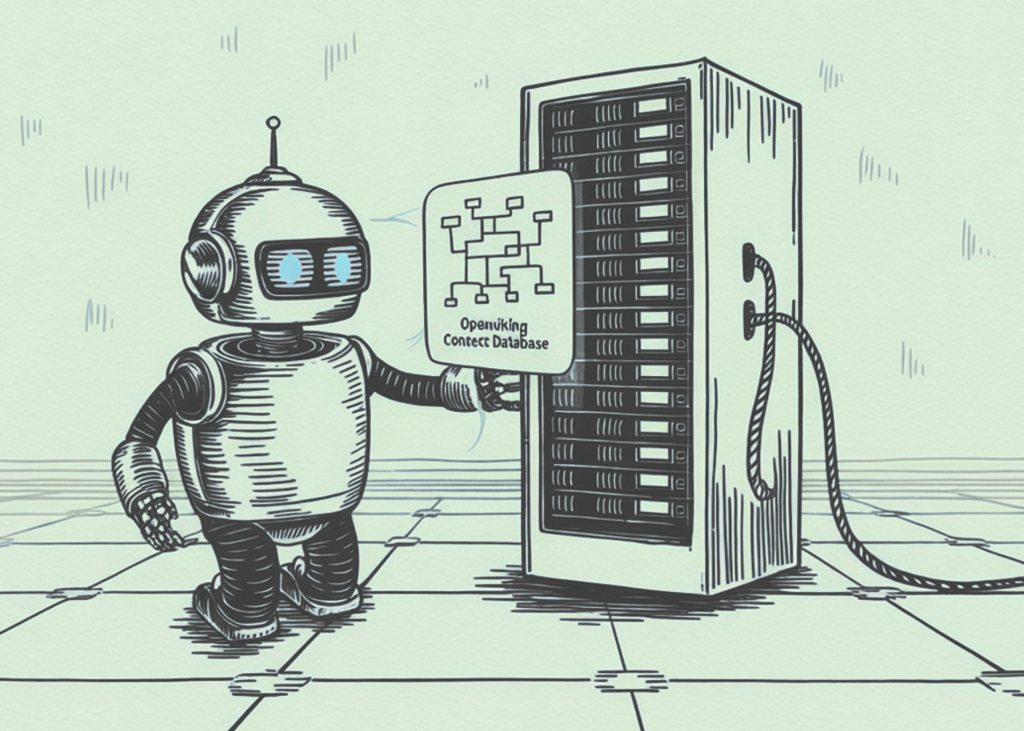

Meet OpenViking: An Open-Source Context Database Bringing Filesystem-Based Memory and Retrieval to AI Agent Systems like OpenClaw

OpenViking is an open-source context database for AI agents from Volcengine. The project is built around a simple architectural idea: agent systems should not treat context as a flat collection of text chunks. Instead, OpenViking organizes context using a file system paradigm, with the goal of managing memory, resources, and skills under a unified hierarchical structure. In the project's own framing, this is a response to five recurring problems in agent development: fragmented context, growing context volume during long-running tasks, poor retrieval quality in flat RAG pipelines, poor observability of retrieval behavior, and limited memory iteration beyond chat history.

A Virtual Filesystem for Context Management

At the center of the design is a virtual file system exposed under the `viking://` protocol. OpenViking maps different context types into directories, including `resources`, `user`, and `agent`. Under those top-level directories, an agent can access project documents, user preferences, task memories, skills, and commands. This is a shift away from "flat text chunks" toward abstract file system objects identified by URIs. The intended benefit is that the agent can use standard browsing-style operations such as `ls` and `find` to locate information in a more deterministic way, rather than relying only on similarity search over a flat vector index.
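To make the URI-addressed layout concrete, here is a minimal in-memory sketch of a context store with `ls` and `find` operations. It is not OpenViking's API: the `ContextFS` class, its method names, and the example file paths are all hypothetical, chosen only to illustrate how directory-style browsing differs from a flat chunk index.

```python
from dataclasses import dataclass, field


def _prefix(directory: str) -> str:
    """Normalize a directory URI so it always ends with a slash."""
    return directory if directory.endswith("/") else directory + "/"


@dataclass
class ContextFS:
    """Toy store keyed by viking:// URIs (hypothetical, for illustration only)."""
    files: dict[str, str] = field(default_factory=dict)

    def write(self, uri: str, content: str) -> None:
        self.files[uri] = content

    def ls(self, directory: str) -> list[str]:
        """Return the immediate children of a directory, sorted; subdirectories end in '/'."""
        prefix = _prefix(directory)
        children = set()
        for uri in self.files:
            if uri.startswith(prefix):
                rest = uri[len(prefix):]
                first = rest.split("/", 1)[0]
                children.add(prefix + first + ("/" if "/" in rest else ""))
        return sorted(children)

    def find(self, directory: str, needle: str) -> list[str]:
        """Return every file under a directory whose file name contains `needle`."""
        prefix = _prefix(directory)
        return sorted(
            uri for uri in self.files
            if uri.startswith(prefix) and needle in uri.rsplit("/", 1)[-1]
        )


fs = ContextFS()
# Example paths below are invented for the sketch.
fs.write("viking://resources/docs/api.md", "API reference")
fs.write("viking://resources/README.md", "Project overview")
fs.write("viking://user/preferences.md", "Prefers concise answers")
fs.write("viking://agent/skills/search.md", "How to run a web search")

print(fs.ls("viking://"))
print(fs.ls("viking://resources"))
print(fs.find("viking://", "pref"))
```

Because every item has a stable URI, the same lookup always returns the same listing, which is the deterministic behavior the paragraph above contrasts with similarity search alone.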

How Directory Recursive Retrieval Works

That structural choice matters because OpenViking does not try to remove semantic retrieval. It tries to constrain and structure it. The project's retrieval pipeline first uses vector retrieval to identify the highest-scoring directory, then performs a second retrieval within that directory, and recursively drills down into subdirectories if needed. The README calls this Directory Recursive Retrieval. The basic idea is that retrieval should preserve both local relevance and global context structure: the system should not only find a semantically similar fragment, but also understand the directory context in which that fragment lives. For agent workloads that span repositories, documents, and accumulated memory, that is a more explicit retrieval model than traditional one-shot RAG.
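The drill-down described above can be sketched as a greedy walk over a directory tree. This is a simplified illustration under stated assumptions, not the project's implementation: the `Node` type and `recursive_retrieve` function are hypothetical, and `score` is a toy word-overlap measure standing in for real vector similarity.

```python
from dataclasses import dataclass, field


def score(query: str, text: str) -> float:
    """Toy relevance: fraction of query words found in text (stand-in for vector similarity)."""
    q = set(query.lower().split())
    t = set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0


@dataclass
class Node:
    """A directory or file with a short summary used for matching (hypothetical type)."""
    name: str
    summary: str
    children: list["Node"] = field(default_factory=list)


def recursive_retrieve(root: Node, query: str) -> list[str]:
    """Pick the best-scoring child at each level and descend until a leaf is reached."""
    path = [root.name]
    node = root
    while node.children:
        node = max(node.children, key=lambda c: score(query, c.summary))
        path.append(node.name)
    return path


# Invented example tree.
tree = Node("viking://resources", "all project resources", [
    Node("docs", "product documentation and api reference guides", [
        Node("auth.md", "api authentication with oauth tokens"),
        Node("billing.md", "billing invoices and payment api"),
    ]),
    Node("code", "source code repositories and build scripts", [
        Node("build.py", "build script for packaging"),
    ]),
])

print(recursive_retrieve(tree, "how to refresh oauth tokens in the api"))
```

A production system would likely keep several candidates per level rather than a single greedy choice; the sketch keeps one to make the directory-then-contents order easy to follow.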

Tiered Context Loading to Reduce Token Overhead

OpenViking also adds a built-in mechanism called Tiered Context Loading. When context is written, the system automatically processes it into three layers. L0 is an abstract, described as a one-sentence summary used for quick retrieval and identification. L1 is an overview containing core information and usage scenarios for planning. L2 is the full original content, intended for deep reading only when necessary. The README examples show `.abstract` and `.overview` files associated with directories, while the underlying documents remain available as detailed content. This design is intended to reduce prompt bloat by letting the agent load high-level summaries first and defer the full context until the task actually requires it.
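One way to picture the three tiers is as a single record holding all three representations, with the caller choosing how deep to read. The sketch below is an assumption-laden illustration: `TieredEntry`, `approx_tokens`, and the budget-based selection rule in `load_within_budget` are hypothetical and are not taken from OpenViking, which may decide what to load in a different way.

```python
from dataclasses import dataclass


@dataclass
class TieredEntry:
    """Three representations of one piece of context (hypothetical type)."""
    abstract: str  # L0: one-sentence summary
    overview: str  # L1: key information for planning
    detail: str    # L2: full original content


def approx_tokens(text: str) -> int:
    """Crude token estimate by whitespace splitting (assumption; real tokenizers differ)."""
    return len(text.split())


def load_within_budget(entry: TieredEntry, budget: int) -> tuple[str, str]:
    """Return the deepest tier that fits the budget, falling back to the L0 abstract."""
    for level, text in (("L2", entry.detail), ("L1", entry.overview)):
        if approx_tokens(text) <= budget:
            return level, text
    return "L0", entry.abstract


entry = TieredEntry(
    abstract="Auth service guide.",
    overview="Covers token issuance, refresh flow, and common error codes for clients.",
    detail=" ".join(["word"] * 50),  # placeholder standing in for a long document
)

print(load_within_budget(entry, budget=20)[0])
print(load_within_budget(entry, budget=100)[0])
```

The point of the design is visible in the fallback order: the cheap summary is always available, and the expensive full text is read only when there is room and need for it.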

Retrieval Visibility and Debugging

Another important feature of the system is observability. OpenViking preserves the directory browsing path and file positioning during retrieval. The README describes this as a Visualized Retrieval Trajectory. In practical terms, that means developers can inspect how the system navigated the hierarchy to retrieve context. This is useful because many agent failures are not model failures in the narrow sense; they are context routing failures. If the wrong memory, document, or skill is retrieved, the model can still produce a bad answer even when the model itself is capable. OpenViking's approach makes that retrieval path visible, giving developers something concrete to debug instead of treating context selection as a black box.
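A retrieval trajectory can be as simple as an ordered log of the directories and files visited, together with the score that led to each step. The following sketch shows the idea only: the `Trajectory` class, its output format, and the example URIs and scores are hypothetical illustration values, not output from OpenViking.

```python
from dataclasses import dataclass, field


@dataclass
class Trajectory:
    """Ordered record of the nodes visited during one retrieval (hypothetical type)."""
    steps: list[tuple[str, float]] = field(default_factory=list)

    def record(self, uri: str, score: float) -> None:
        self.steps.append((uri, score))

    def render(self) -> str:
        """Indent each step by its depth so the drill-down reads like a tree."""
        return "\n".join(
            f"{'  ' * depth}{uri} (score={score:.2f})"
            for depth, (uri, score) in enumerate(self.steps)
        )


trace = Trajectory()
# Made-up URIs and scores, for illustration only.
trace.record("viking://resources/", 0.81)
trace.record("viking://resources/docs/", 0.77)
trace.record("viking://resources/docs/auth.md", 0.92)

print(trace.render())
```

With a log like this, a wrong answer can be traced back to the step where the walk entered the wrong directory, which is the kind of debugging the section describes.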

Session Memory and Self-Iteration

The project also extends memory management beyond conversation logging. OpenViking includes Automatic Session Management with a built-in memory self-iteration loop. According to the README, at the end of a session developers can trigger memory extraction, and the system will analyze task execution results and user feedback, then update both the user and agent memory directories. The intended outputs include user preference memories and agent-side operational experience such as tool usage patterns and usage tips. That makes OpenViking closer to a persistent context substrate for agents than a traditional vector database used only for retrieval.
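The end-of-session update can be pictured as a function that folds one finished session into two long-lived stores, one for the user and one for the agent. The sketch below is a deliberately naive stand-in: `Session`, `commit`, and the rule that treats feedback starting with "I prefer" as a preference are all assumptions for illustration, whereas the real system analyzes results and feedback rather than matching strings.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Session:
    """What one session accumulated (hypothetical type)."""
    feedback: list[str] = field(default_factory=list)
    tool_calls: list[str] = field(default_factory=list)


def commit(session: Session, user_memory: dict, agent_memory: dict) -> None:
    """Fold a finished session into the user and agent memory stores, in place."""
    # Naive stand-in for extraction: keep feedback lines that state a preference.
    prefs = [f for f in session.feedback if f.lower().startswith("i prefer")]
    user_memory.setdefault("preferences", []).extend(prefs)

    # Agent-side experience: accumulate how often each tool was used across sessions.
    usage = Counter(agent_memory.get("tool_usage", {}))
    usage.update(session.tool_calls)
    agent_memory["tool_usage"] = dict(usage)


user_memory: dict = {}
agent_memory: dict = {}

commit(
    Session(feedback=["I prefer concise answers", "thanks"],
            tool_calls=["search", "search", "read"]),
    user_memory,
    agent_memory,
)
commit(Session(tool_calls=["read"]), user_memory, agent_memory)

print(user_memory)
print(agent_memory)
```

The second call shows the iterative part: memory is updated incrementally across sessions rather than rebuilt from the raw conversation log each time.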

Reported OpenClaw Evaluation Results

The README includes an evaluation section for the OpenClaw memory plugin on the LoCoMo10 long-range dialogue dataset. The setup uses 1,540 cases after removing category 5 samples without ground truth, reports OpenViking version 0.1.18, and uses `seed-2.0-code` as the model. In the reported results, OpenClaw (memory-core) reaches a 35.65% task completion rate with 24,611,530 input tokens, OpenClaw + OpenViking Plugin (-memory-core) reaches 52.08% with 4,264,396 input tokens, and OpenClaw + OpenViking Plugin (+memory-core) reaches 51.23% with 2,099,622 input tokens. These are project-reported results rather than independent third-party benchmarks, but they are consistent with the system's design goal: improving retrieval structure while reducing unnecessary token consumption.
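To put the reported figures side by side, the short calculation below derives the change in completion rate and the relative reduction in input tokens against the OpenClaw (memory-core) baseline. It uses only the numbers quoted above; the `compare` helper is just a convenience for this arithmetic.

```python
# Completion rate (%) and input tokens, copied from the reported results above.
results = {
    "OpenClaw (memory-core)": (35.65, 24_611_530),
    "OpenClaw + OpenViking Plugin (-memory-core)": (52.08, 4_264_396),
    "OpenClaw + OpenViking Plugin (+memory-core)": (51.23, 2_099_622),
}

base_rate, base_tokens = results["OpenClaw (memory-core)"]


def compare(name: str) -> tuple[float, float]:
    """Return (percentage-point gain in completion rate, % reduction in input tokens)."""
    rate, tokens = results[name]
    gain = round(rate - base_rate, 2)
    token_reduction = round(100 * (1 - tokens / base_tokens), 1)
    return gain, token_reduction


for name in list(results)[1:]:
    gain, reduction = compare(name)
    print(f"{name}: +{gain} points, {reduction}% fewer input tokens")
```

On these numbers, the plugin configurations gain roughly 16.4 and 15.6 percentage points while using about 82.7% and 91.5% fewer input tokens than the baseline, respectively.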

Deployment Details

The documented requirements are Python 3.10+, Go 1.22+, and GCC 9+ or Clang 11+, with support for Linux, macOS, and Windows. Installation is available via `pip install openviking --upgrade --force-reinstall`, and there is an optional Rust CLI called `ov_cli` that can be installed with a script or built with Cargo. An OpenViking deployment requires two model capabilities: a VLM model for image and content understanding, and an embedding model for vectorization and semantic retrieval. Supported VLM access methods include Volcengine, OpenAI, and LiteLLM, while the example server configuration uses OpenAI embeddings with `text-embedding-3-large` and an OpenAI VLM example using `gpt-4-vision-preview`.

Key Takeaways

- OpenViking manages agent context as a file system, unifying memory, resources, and skills under a single hierarchical structure instead of a flat RAG-style store.

- Its retrieval pipeline is recursive and directory-aware, combining directory structure with semantic search to improve context accuracy.

- It uses tiered L0/L1/L2 context loading, so agents can read summaries first and load the full content only when needed, reducing token usage.

- OpenViking exposes retrieval trajectories, which makes context selection more visible and easier to debug than a typical black-box RAG workflow.

- It also supports session-based memory self-iteration, extracting long-term memory from conversations, tool calls, and activity history.
