A Guide to AI Development with OpenRouter

Building with AI today can feel messy. You might use one API for text, another for images, and yet another for something else. Every model comes with its own setup, API key, and billing. This slows you down and makes things harder than they need to be. What if you could use all these models through one simple API? This is where OpenRouter comes in. It gives you a single point of access to models from providers such as OpenAI, Google, Anthropic, and more. In this guide, you’ll learn how to use OpenRouter step by step, from your first API call to building real applications.

What is OpenRouter?

OpenRouter allows you to access multiple AI models using a single API. You do not need to configure each provider separately. You connect once, use one API key, and write one set of code. OpenRouter handles the rest, such as authentication, request formatting, and billing. This makes it easy to try different models. You can switch between models like GPT-5, Claude 4.6, Gemini 3.1 Pro, or Llama 4 by changing a single parameter in your code. This helps you choose the right model based on cost, speed or features such as image recognition.

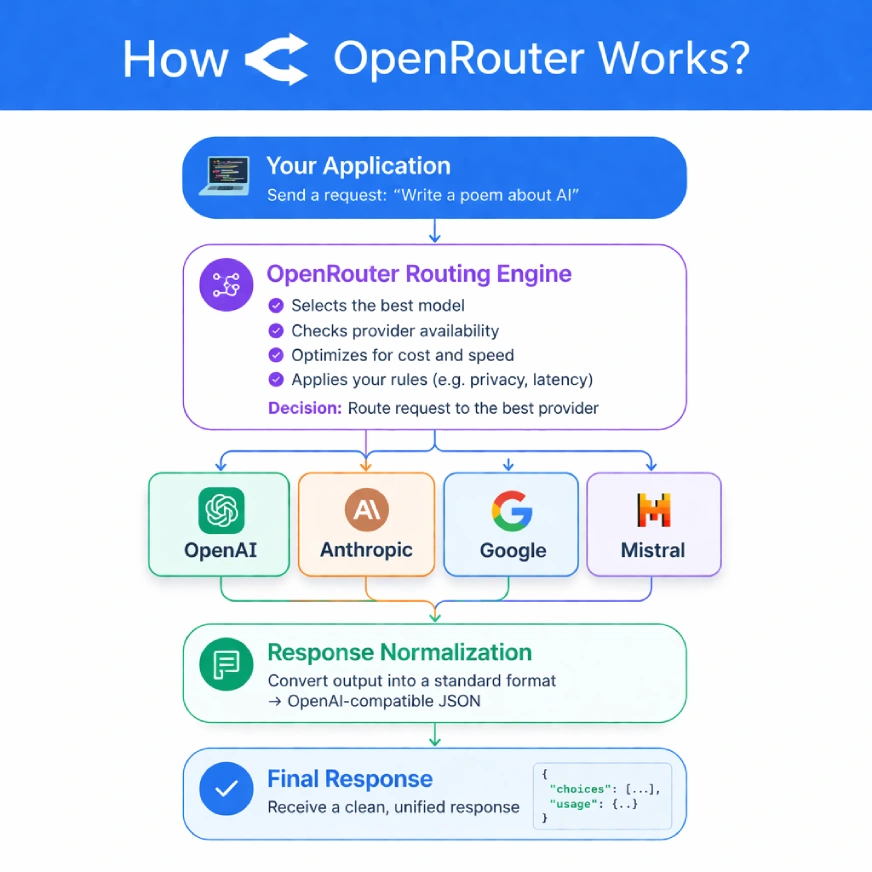

How does OpenRouter work?

OpenRouter acts as a bridge between your application and the various AI providers. Your application sends a request to the OpenRouter API, which converts it into a common format that any model can understand.

Next comes the routing engine. It finds the best provider for your request according to rules you define. For example, you can tell it to prefer the least expensive provider, the one with the lowest latency, or only providers that meet a specific data privacy requirement such as Zero Data Retention (ZDR).

The platform tracks the performance and uptime of all providers, so it can make smart, real-time routing decisions. If your preferred provider is not working properly, OpenRouter automatically fails over to a known-good provider, which improves the stability of your application.
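To make the routing idea concrete, here is a toy sketch of rule-based provider selection with failover. It is an illustration only: the provider names, prices, and latencies are invented, and `rank_providers` is a hypothetical helper, not OpenRouter's actual algorithm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    """Hypothetical provider record; every value below is invented."""
    name: str
    price_per_mtok: float  # USD per million tokens
    latency_ms: float      # recent average latency
    healthy: bool          # is the provider currently up?
    zdr: bool              # offers Zero Data Retention?


def rank_providers(providers, sort_by="price", require_zdr=False):
    """Return the usable providers, best first, under a simple rule set."""
    candidates = [
        p for p in providers
        if p.healthy and (p.zdr or not require_zdr)
    ]
    sort_keys = {
        "price": lambda p: p.price_per_mtok,
        "latency": lambda p: p.latency_ms,
    }
    return sorted(candidates, key=sort_keys[sort_by])


PROVIDERS = [
    Provider("alpha", 0.40, 900.0, healthy=True, zdr=False),
    Provider("beta", 0.55, 350.0, healthy=True, zdr=True),
    Provider("gamma", 0.30, 500.0, healthy=False, zdr=True),  # currently down
]

cheapest = rank_providers(PROVIDERS, sort_by="price")
fastest = rank_providers(PROVIDERS, sort_by="latency")
private = rank_providers(PROVIDERS, sort_by="price", require_zdr=True)

print([p.name for p in cheapest])
print([p.name for p in fastest])
print([p.name for p in private])
```

Note that "gamma" has the lowest price but is skipped because it is marked unhealthy. That is the failover behaviour described above, reduced to a filter.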

Getting Started: Your first API call

OpenRouter is easy to set up because it is a hosted service, so there is no software to install. You can be ready in just a few minutes:

Step 1: Create an account and get credits

First, register on OpenRouter.ai. To use paid models, you will need to purchase some credits.

Step 2: Generate an API key

Navigate to the “Keys” section of your account dashboard. Click “Create Key,” give it a name, and copy the key to a secure place. As a best practice, use different keys for different environments (e.g., dev, prod) and set spending limits to control costs.

Step 3: Configure your environment

Keep your API key in an environment variable to avoid exposing it in your code.
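Once the variable is set (the platform-specific commands follow), application code can read it at startup and fail early if it is missing. The sketch below is one way to do that. `load_api_key` is a hypothetical helper name, and it accepts any mapping so it can be exercised without touching the real environment.

```python
import os


def load_api_key(env=os.environ, name="OPENROUTER_API_KEY"):
    """Return the API key from the environment, or fail with a clear message."""
    key = env.get(name, "").strip()
    if not key:
        raise RuntimeError(
            f"{name} is not set; export it before starting the application."
        )
    return key


# Demonstration with plain dicts standing in for the real environment
print(load_api_key({"OPENROUTER_API_KEY": "your-secret-key-here"}))
try:
    load_api_key({})
except RuntimeError as err:
    print(f"Startup check failed: {err}")
```

Failing at startup is usually preferable to discovering a missing key on the first request, when the error would surface as an authentication failure instead.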

Step 4: Set the environment variable

For macOS or Linux:

```bash
export OPENROUTER_API_KEY="your-secret-key-here"
```

For Windows (PowerShell):

```powershell
setx OPENROUTER_API_KEY "your-secret-key-here"
```

Making a request to OpenRouter

Because OpenRouter exposes an OpenAI-compatible API, you can use the OpenAI client libraries to make requests. This makes migrating an existing OpenAI project very easy.
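In practice, "OpenAI-compatible" means the request is an ordinary HTTPS POST carrying a bearer token and a JSON body. The sketch below builds such a request with the standard library only and does not send it. The endpoint URL is an assumption (it is not spelled out in this guide), and `build_chat_request` is a hypothetical helper.

```python
import json
import urllib.request

# Assumed endpoint: OpenRouter's OpenAI-style chat completions route.
CHAT_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key, model, prompt):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("your-secret-key-here", "openai/gpt-4.1-nano", "Hello!")
print(req.get_method(), req.full_url)
print(json.loads(req.data)["model"])
# To actually send it: urllib.request.urlopen(req), then json.load() the response.
```

The SDK does the same work with retries and typed responses on top, which is why it is usually the more convenient choice.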

A Python example using the OpenAI SDK

```python
# First, ensure you have the library installed:
# pip install openai
import os

from openai import OpenAI

# Initialize the client, pointing it to OpenRouter's API
client = OpenAI(
    base_url="",  # the OpenRouter API base URL goes here
    api_key=os.environ.get("OPENROUTER_API_KEY"),
)

# Send a chat completion request to a specific model
response = client.chat.completions.create(
    model="openai/gpt-4.1-nano",
    messages=[
        {
            "role": "user",
            "content": "Explain AI model routing in one sentence."
        },
    ],
)

print(response.choices[0].message.content)
```

Output:

Exploring Models and Advanced Routing

OpenRouter shows its true power beyond simple requests. The platform supports dynamic, intelligent routing across AI models.

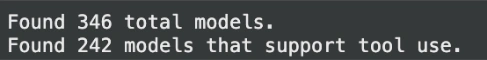

Discovering Models Programmatically

Models are continuously added and updated, so you should not hardcode model names into your production applications. Instead, OpenRouter provides a /models endpoint that returns a list of all available models along with their pricing, context limits, and capabilities.
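With that metadata in hand, model choice becomes a data lookup instead of a hardcoded string. The sketch below runs on made-up sample records. The record shape (`id`, `context_length`, `pricing.prompt` as a string, `supported_parameters`) is an assumption about the response format, and `cheapest_model` is a hypothetical helper.

```python
# Invented sample records in the assumed shape of a /models response entry
SAMPLE_MODELS = [
    {"id": "vendor-a/small", "context_length": 32000,
     "pricing": {"prompt": "0.0000002"},
     "supported_parameters": ["temperature"]},
    {"id": "vendor-b/medium", "context_length": 128000,
     "pricing": {"prompt": "0.000001"},
     "supported_parameters": ["tools", "temperature"]},
    {"id": "vendor-c/large", "context_length": 200000,
     "pricing": {"prompt": "0.000003"},
     "supported_parameters": ["tools"]},
]


def cheapest_model(models, min_context=0, needs_tools=False):
    """Return the id of the cheapest matching model, or None if none match."""
    def matches(m):
        if (m.get("context_length") or 0) < min_context:
            return False
        if needs_tools and "tools" not in (m.get("supported_parameters") or []):
            return False
        return True

    candidates = [m for m in models if matches(m)]
    if not candidates:
        return None
    # Prices are assumed to arrive as decimal strings, so convert before comparing
    best = min(candidates, key=lambda m: float(m["pricing"]["prompt"]))
    return best["id"]


print(cheapest_model(SAMPLE_MODELS))
print(cheapest_model(SAMPLE_MODELS, needs_tools=True))
print(cheapest_model(SAMPLE_MODELS, min_context=150000))
```

In a real application the list would come from the endpoint, fetched as in the next example, instead of a constant.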

```python
import os

import requests

# Fetch the list of available models
response = requests.get(
    "",  # the OpenRouter /models endpoint URL goes here
    headers={
        "Authorization": f"Bearer {os.environ.get('OPENROUTER_API_KEY')}"
    },
)

if response.status_code == 200:
    models = response.json()["data"]
    # Filter for models that support tool use
    tool_use_models = [
        m for m in models
        if "tools" in (m.get("supported_parameters") or [])
    ]
    print(f"Found {len(models)} total models.")
    print(f"Found {len(tool_use_models)} models that support tool use.")
else:
    print(f"Error fetching models: {response.text}")
```

Output:

Intelligent Routing and Fallbacks

You can control how OpenRouter chooses a provider, and you can set up fallbacks in case a request fails. This matters a great deal in production systems.

- Routing: Send a provider object in your request to sort providers by latency or price, or to enforce policies such as zdr (Zero Data Retention).

- Fallbacks: When the first model fails, OpenRouter automatically tries the next one in the list. You are charged only for the successful attempt.
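The two options can be combined in one request body. The sketch below only assembles that body so the shape is visible. The `sort` key and its values are assumptions not confirmed by this guide, and `build_routed_request` is a hypothetical helper.

```python
import json


def build_routed_request(prompt, primary, fallbacks=(), sort=None, zdr=False):
    """Assemble a chat completion body with routing hints (field names assumed)."""
    body = {
        "model": primary,
        "messages": [{"role": "user", "content": prompt}],
    }
    if fallbacks:
        body["models"] = list(fallbacks)  # tried in order if the primary fails
    provider = {}
    if sort:
        provider["sort"] = sort           # assumed values, e.g. "price" or "latency"
    if zdr:
        provider["zdr"] = True            # restrict to Zero Data Retention providers
    if provider:
        body["provider"] = provider
    return body


body = build_routed_request(
    "Write a short poem about space.",
    primary="openai/gpt-4.1-nano",
    fallbacks=["anthropic/claude-3.5-sonnet"],
    sort="latency",
    zdr=True,
)
print(json.dumps(body, indent=2))
```

When using the OpenAI SDK, fields that are not part of the standard OpenAI schema (here `models` and `provider`) would be passed through `extra_body`.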

Here’s a Python example that shows the fallback chain:

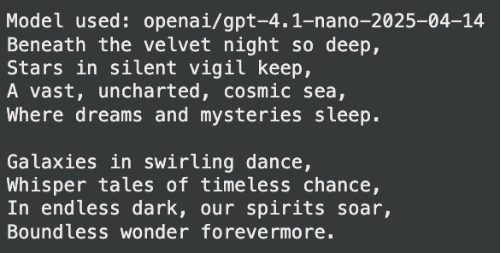

```python
# The primary model is 'openai/gpt-4.1-nano'
# If it fails, OpenRouter will try 'anthropic/claude-3.5-sonnet',
# then 'google/gemini-2.5-pro'
response = client.chat.completions.create(
    model="openai/gpt-4.1-nano",
    extra_body={
        "models": [
            "anthropic/claude-3.5-sonnet",
            "google/gemini-2.5-pro"
        ]
    },
    messages=[
        {
            "role": "user",
            "content": "Write a short poem about space."
        }
    ],
)

print(f"Model used: {response.model}")
print(response.choices[0].message.content)
```

Output:

Mastering Advanced Capabilities

The same chat completion API can be used to send images to any vision-capable model for analysis. All it takes is adding the image, as a URL or a base64-encoded string, to your message list.

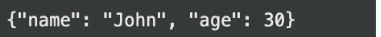

Structured Output (JSON Mode)

Need reliable JSON output? You can instruct any compatible model to return a response that conforms to a specific JSON schema. OpenRouter also offers an optional Response Healing plugin that can repair malformed JSON from models that struggle with strict formatting.

```python
# Requesting a structured JSON output
response = client.chat.completions.create(
    model="openai/gpt-4.1-nano",
    messages=[
        {
            "role": "user",
            "content": "Extract the name and age from this text: 'John is 30 years old.' in JSON format."
        }
    ],
    response_format={
        # "json_schema" is the type that pairs with a "json_schema" definition
        "type": "json_schema",
        "json_schema": {
            "name": "user_schema",
            "schema": {
                "type": "object",
                "properties": {
                    "name": {"type": "string"},
                    "age": {"type": "integer"}
                },
                "required": ["name", "age"],
            },
        },
    },
)

print(response.choices[0].message.content)
```

Output:

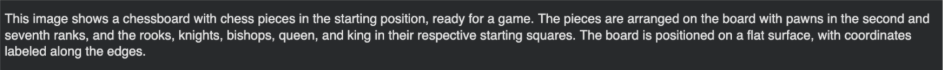
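Even with a schema in the request, it is prudent to validate the reply before using it, since not every model enforces the schema strictly. A minimal standard-library sketch for the name/age shape used above follows. `parse_person` is a hypothetical helper, and a full JSON Schema validator would be the more general choice.

```python
import json


def parse_person(raw):
    """Parse a model reply and check it against the name/age shape."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) if malformed
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    name, age = data.get("name"), data.get("age")
    if not isinstance(name, str):
        raise ValueError("'name' must be a string")
    # bool is a subclass of int in Python, so exclude it explicitly
    if not isinstance(age, int) or isinstance(age, bool):
        raise ValueError("'age' must be an integer")
    return {"name": name, "age": age}


print(parse_person('{"name": "John", "age": 30}'))
for bad in ['{"name": "John"}', '{"name": "John", "age": "30"}', "not json"]:
    try:
        parse_person(bad)
    except ValueError as err:
        print(f"rejected: {err}")
```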

Multimodal Input: Working with Images

You can use the same chat completion API to send images to any vision-capable model for analysis. Simply add the image as a URL or base64-encoded string to your message list.

```python
# Sending an image URL for analysis
response = client.chat.completions.create(
    model="openai/gpt-4.1-nano",
    messages=[
        {
            "role": "user",
            "content": [
                {
                    "type": "text",
                    "text": "What is in this image?"
                },
                {
                    "type": "image_url",
                    "image_url": {
                        "url": ""  # the image URL goes here
                    }
                },
            ],
        }
    ],
)

print(response.choices[0].message.content)
```

Output:

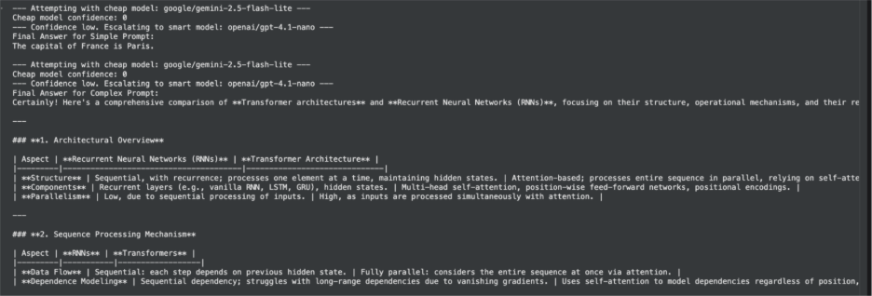
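The base64 route mentioned above works by embedding the image bytes in a data URL in place of the web URL. The sketch below builds such a message. `image_message` is a hypothetical helper, and the eight bytes used are only the PNG file signature standing in for a real image.

```python
import base64


def image_message(image_bytes, mime_type, question):
    """Build a user message that embeds an image as a base64 data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
            },
        ],
    }


# In practice the bytes would come from a file, e.g. open("photo.png", "rb").read().
fake_png = b"\x89PNG\r\n\x1a\n"
msg = image_message(fake_png, "image/png", "What is in this image?")
print(msg["content"][1]["image_url"]["url"])
```

The resulting dictionary can be placed in the `messages` list exactly like the URL-based message in the example above.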

A Cost-Aware, Multi-Provider Agent

The real strength of OpenRouter lies in building advanced, affordable, and highly available applications. As an illustration, we can create an agent that dynamically chooses the best model for a task using a tiered, cheap-to-smart approach.

The agent first tries to answer the user's query with a fast, cheap model. If that model is not good enough (e.g., the task involves complex reasoning), it escalates the query to a more powerful, premium model. This is a common pattern in production systems that must balance performance, price, and quality.

“Cheap-to-Smart” Logic

Our agent will follow these steps:

- Receive the user's prompt.

- Send the prompt to the least expensive model first.

- Check the response to determine whether the model was able to handle the request. One simple way to do this is to ask the model to provide a confidence score along with its output.

- If confidence is low, automatically resend the same prompt to a more capable model that can produce a good response to a complex task.

This approach ensures that you don’t overpay for simple tasks while still having the power of high-end models when needed.
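Whether the pattern actually saves money depends on how often requests escalate, because an escalated request pays for both calls. A small worked calculation follows. The per-request costs are hypothetical figures chosen only for illustration, and `expected_cost` is a helper introduced here.

```python
def expected_cost(cheap_cost, smart_cost, escalation_rate):
    """Average cost per request when every request tries the cheap model first."""
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    # The cheap call is always paid for; the smart call only on escalation.
    return cheap_cost + escalation_rate * smart_cost


# Hypothetical per-request costs in USD
CHEAP, SMART = 0.0002, 0.0040

for rate in (0.1, 0.5, 1.0):
    blended = expected_cost(CHEAP, SMART, rate)
    verdict = "cheaper" if blended < SMART else "more expensive"
    print(f"escalation {rate:.0%}: ${blended:.4f} per request "
          f"({verdict} than always using the smart model)")
```

With these figures the break-even escalation rate is 1 - CHEAP / SMART, or 95%. Below that rate the tiered approach costs less on average than sending everything to the premium model.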

Python Implementation

Here’s how you can implement this logic in Python. We will use structured output to ask the model for its confidence level, which makes parsing the answer more reliable.

```python
import json
import os

from openai import OpenAI

# Initialize the client for OpenRouter
client = OpenAI(
    base_url="",  # the OpenRouter API base URL goes here
    api_key=os.environ.get("OPENROUTER_API_KEY"),
)


def run_cheap_to_smart_agent(prompt: str):
    """
    Runs a prompt first through a cheap model, then escalates to a
    smarter model if confidence is low.
    """
    cheap_model = "mistralai/mistral-7b-instruct"
    smart_model = "openai/gpt-4.1-nano"

    # Define the desired JSON structure for the response
    json_schema = {
        "type": "object",
        "properties": {
            "answer": {"type": "string"},
            "confidence": {
                "type": "integer",
                "description": "A score from 1-100 indicating confidence in the answer.",
            },
        },
        "required": ["answer", "confidence"],
    }

    # First, try the cheap model
    print(f"--- Attempting with cheap model: {cheap_model} ---")
    try:
        response = client.chat.completions.create(
            model=cheap_model,
            messages=[
                {
                    "role": "user",
                    "content": f"Answer the following prompt and provide a confidence score from 1-100. Prompt: {prompt}",
                }
            ],
            response_format={
                # "json_schema" is the type that pairs with a "json_schema" definition
                "type": "json_schema",
                "json_schema": {
                    "name": "agent_response",
                    "schema": json_schema,
                },
            },
        )

        # Parse the JSON response
        result = json.loads(response.choices[0].message.content)
        answer = result.get("answer")
        confidence = result.get("confidence", 0)
        print(f"Cheap model confidence: {confidence}")

        # If confidence is below a threshold (e.g., 70), escalate
        if confidence < 70:
            print(f"--- Confidence low. Escalating to smart model: {smart_model} ---")
            # Use a simpler prompt for the smart model
            smart_response = client.chat.completions.create(
                model=smart_model,
                messages=[
                    {
                        "role": "user",
                        "content": prompt,
                    }
                ],
            )
            final_answer = smart_response.choices[0].message.content
        else:
            final_answer = answer

    except Exception as e:
        # Any failure on the cheap path (API error, bad JSON) goes straight to the smart model
        print(f"An error occurred with the cheap model: {e}")
        print(f"--- Falling back directly to smart model: {smart_model} ---")
        smart_response = client.chat.completions.create(
            model=smart_model,
            messages=[
                {
                    "role": "user",
                    "content": prompt,
                }
            ],
        )
        final_answer = smart_response.choices[0].message.content

    return final_answer


# --- Test the Agent ---

# 1. A simple prompt that the cheap model can handle
simple_prompt = "What is the capital of France?"
print(f"Final Answer for Simple Prompt:\n{run_cheap_to_smart_agent(simple_prompt)}\n")

# 2. A complex prompt that will likely require escalation
complex_prompt = "Provide a detailed comparison of the transformer architecture and recurrent neural networks, focusing on their respective advantages for sequence processing tasks."
print(f"Final Answer for Complex Prompt:\n{run_cheap_to_smart_agent(complex_prompt)}")
```

Output:

This working example goes beyond a simple API call and shows how to design a smarter, more cost-effective system using OpenRouter’s core strengths: multi-model access and structured outputs.

Monitoring and observability
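One fragile spot in the agent is parsing the cheap model's reply: a small model may return prose, a missing score, or a number outside the expected range. The sketch below isolates that step so it can be tested without any API calls. `parse_confidence` and `should_escalate` are hypothetical helpers, and treating every unusable reply as "escalate" is a design assumption.

```python
import json
import math


def parse_confidence(raw, default=0):
    """Extract an integer confidence (0-100) from a model reply.

    Replies that are not valid JSON, or that lack a usable score,
    yield `default`, which the caller treats as low confidence.
    """
    try:
        data = json.loads(raw)
    except (TypeError, ValueError):
        return default
    if not isinstance(data, dict):
        return default
    score = data.get("confidence")
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        return default
    if not math.isfinite(score):
        return default
    # Clamp out-of-range scores into the documented 0-100 interval
    return max(0, min(100, int(score)))


def should_escalate(raw, threshold=70):
    """True when the cheap model's reply does not clear the threshold."""
    return parse_confidence(raw) < threshold


print(should_escalate('{"answer": "Paris", "confidence": 95}'))
print(should_escalate('{"answer": "It depends", "confidence": 40}'))
print(should_escalate("Sure! The answer is Paris."))
```

Keep in mind that a self-reported confidence score is only a rough signal. Models can be confidently wrong, so the threshold is worth tuning against real traffic.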

Understanding your application’s performance and costs is important. OpenRouter provides built-in tools to help.

- Usage Accounting: Every API response contains detailed metadata about token usage and cost for that particular request, allowing for real-time cost tracking.

- Broadcast Feature: Without any additional code, you can configure OpenRouter to automatically send detailed traces of your API calls to observability platforms such as Langfuse or Datadog. This provides in-depth information on latency, errors, and performance across all models and providers.
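On the application side, per-request usage metadata can be rolled up into running totals. The sketch below assumes each response carries a usage record shaped like `{"prompt_tokens": ..., "completion_tokens": ..., "cost": ...}`. Those field names are assumptions, the sample figures are invented, and `UsageTracker` is a hypothetical class.

```python
from collections import defaultdict


class UsageTracker:
    """Accumulate token counts and cost per model from response metadata."""

    def __init__(self):
        self.tokens = defaultdict(int)
        self.cost = defaultdict(float)

    def record(self, model, usage):
        # Field names are assumed; missing fields count as zero.
        self.tokens[model] += (
            usage.get("prompt_tokens", 0) + usage.get("completion_tokens", 0)
        )
        self.cost[model] += usage.get("cost", 0.0)

    def total_cost(self):
        return sum(self.cost.values())


tracker = UsageTracker()
# Invented usage records standing in for real API responses
tracker.record("mistralai/mistral-7b-instruct",
               {"prompt_tokens": 40, "completion_tokens": 60, "cost": 0.00012})
tracker.record("mistralai/mistral-7b-instruct",
               {"prompt_tokens": 30, "completion_tokens": 20, "cost": 0.00008})
tracker.record("openai/gpt-4.1-nano",
               {"prompt_tokens": 200, "completion_tokens": 500, "cost": 0.0021})

for model, tokens in tracker.tokens.items():
    print(f"{model}: {tokens} tokens, ${tracker.cost[model]:.5f}")
print(f"Total: ${tracker.total_cost():.5f}")
```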

Conclusion

The era of being tied to a single AI provider is over. Tools like OpenRouter fundamentally change the developer experience by providing an abstraction layer that unlocks flexibility and robustness. By unifying a fragmented AI ecosystem, OpenRouter not only saves you from the tedious task of managing multiple integrations but also empowers you to build smart, cost-effective, and resilient applications. The future of AI development is not about picking a single winner; it’s about having seamless access to all of them. With this guide, you now have a map to navigate that future.

Frequently Asked Questions

A. OpenRouter provides a single, unified API to access hundreds of AI models from various providers. This simplifies development, improves reliability with automatic failover, and allows you to easily change models to optimize cost or performance.

A. No, it is designed to be OpenAI-API compatible. You can use existing OpenAI SDKs and usually only need to change the base URL to point to OpenRouter.

A. OpenRouter’s fallback feature automatically retries your request with the fallback models you specify. This makes your application more resilient to provider outages.

A. Yes, you can set hard spending limits for each API key, with daily, weekly, or monthly reset schedules. Every API response includes detailed cost data for real-time tracking.

A. Yes, OpenRouter supports structured output. You can provide a JSON schema in your request to force the model to return a response in a valid, predictable format.
