Google’s KV Cache Development Explained

A few days ago, a group of researchers at Google published a PDF that didn’t just change AI: it wiped billions of dollars off the stock market.

If you looked at the charts of Micron (MU) or Western Digital last week, you saw a sea of red. Why? Because a new technology called TurboQuant suggested that we may not need nearly as much hardware to run large AI models as we thought.

But don’t worry about the complicated math. Here’s a simple breakdown of Google’s latest KV cache optimization technique, TurboQuant.

We present a set of improved, theoretically grounded algorithms that allow for massive compression of large language models and vector search engines. – Google, official announcement

Memory Constraint

Think of the AI model as a giant library. Typically, every “book” (data point) is recorded in high-definition, 4K detail. This takes up a huge amount of shelf space (what techies call VRAM or memory).

The more the AI “talks” to you, the more shelf space it needs to remember what happened ten minutes ago. This is why AI hardware is so expensive. Companies like Micron make a fortune because AI models are the ultimate “space hogs”.

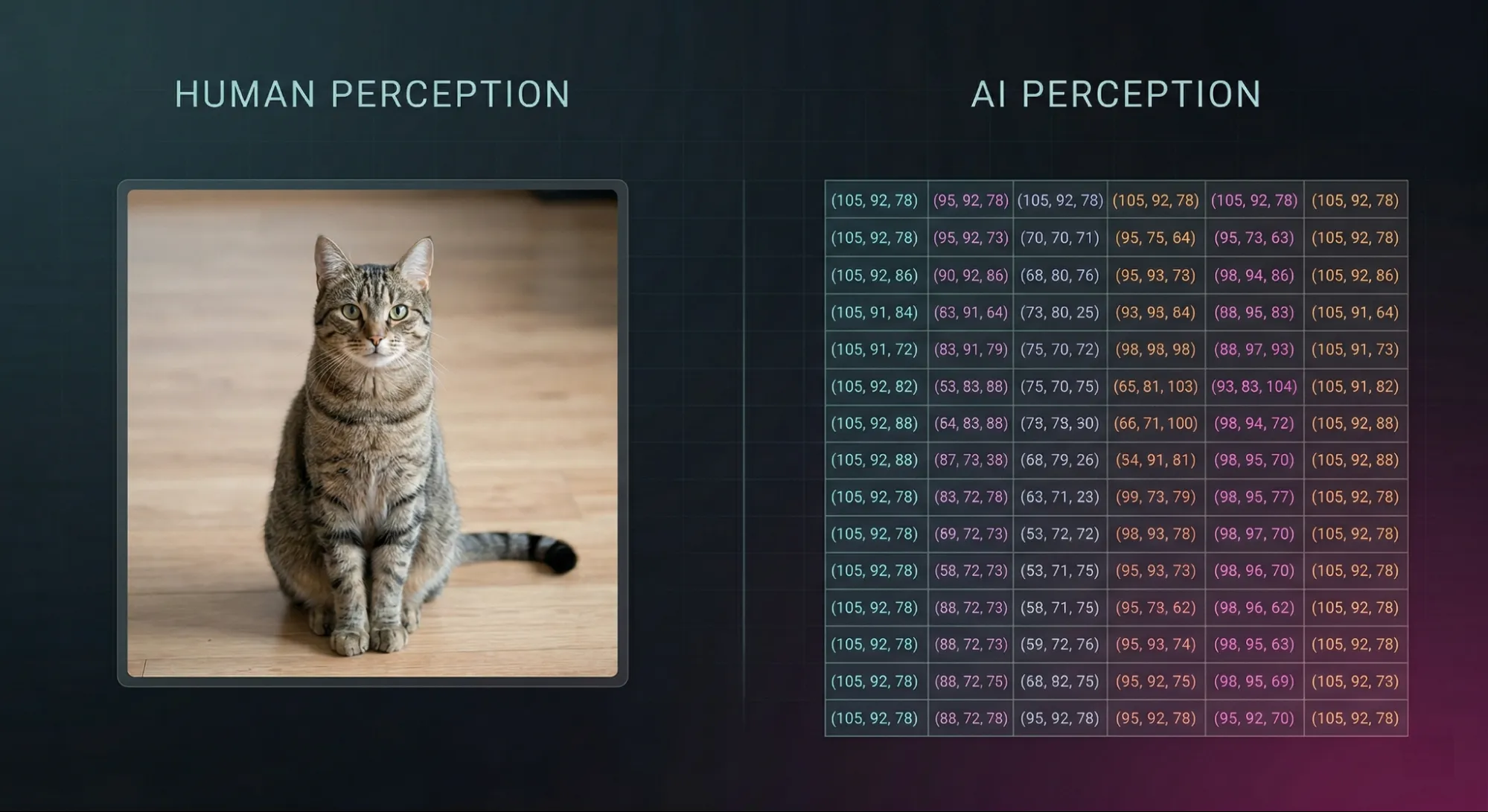

The Language of AI: Vectors

To understand why these books are so heavy, you have to look at the “ink” used to write them. AI doesn’t see words or images: it sees vectors.

A vector is essentially a set of coordinates: a series of numbers as precise as 0.872632 that tell the AI where a piece of information sits on a large, multi-dimensional map.

- Simple vectors may describe a single point on a graph.

- High-dimensional vectors capture complex meanings, such as the particular “vibe” of a sentence or the features of a person’s face.

High-dimensional vectors are more powerful, but they require significant memory, creating bottlenecks in the key-value (KV) cache. In transformer models, the KV cache stores the key and value vectors of previous tokens so that the model does not have to recompute attention from scratch every time.
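To get a feel for the numbers, here is a back-of-the-envelope sketch of how the KV cache grows with conversation length. The model shape (32 layers, 8 key-value heads, 128 dimensions per head, 16-bit values) is an illustrative assumption rather than a figure from Google's announcement, and `kv_cache_bytes` is a hypothetical helper name.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, num_tokens, bits_per_value=16):
    """Estimate the KV cache size for a single sequence.

    Every token stores one key vector and one value vector (hence the factor 2)
    in every layer and for every key-value head.
    """
    values_stored = 2 * num_layers * num_kv_heads * head_dim * num_tokens
    return values_stored * bits_per_value // 8


# Illustrative model shape (an assumption, not taken from the article).
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

for tokens in (1_024, 32_768, 131_072):
    size_gib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens) / 2**30
    print(f"{tokens:>7} tokens -> {size_gib:.3f} GiB of KV cache")
```

Under these assumptions the cache grows linearly, by 128 KiB for every token, which is why long conversations are so memory-hungry.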

Solution: Vector Quantization

To combat memory bloat, developers use a technique called Vector Quantization. If the numbers are too long, we simply “snip” the ends to save space.

Imagine you have a vector with coordinates like these:

- 0.872632982

- 0.192934356

- 0.445821930

That’s a lot of data to store. To save space, we “trim” the numbers by shaving the ends:

- 0.872632982 → 0.87

- 0.192934356 → 0.19

- 0.445821930 → 0.44

* The trimming shown here works on one number at a time and is only an illustration. In practice, vectors are grouped and mapped onto a small set of representative values, rather than each number being rounded on its own.

This rounding or shaving reduces the precision of each coordinate. It can be done using methods such as rounding to n digits, dynamic thresholding, limited-precision thresholding, or dropping the least significant bits (LSB).
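As a rough illustration of the two ideas above, the sketch below first shaves the example numbers to two decimals, then maps them onto a small fixed grid of representative values. The helper names and the 3-bit grid over the range 0 to 1 are assumptions chosen for illustration, not how any particular library does it.

```python
import math


def truncate(value, digits=2):
    """Shave a non-negative number down to `digits` decimal places (no rounding up)."""
    factor = 10 ** digits
    return math.floor(value * factor) / factor


def quantize_to_grid(value, bits=3):
    """Map a value in [0, 1] to the index of the nearest of 2**bits evenly spaced levels."""
    top_level = 2 ** bits - 1
    return round(value * top_level)


def dequantize_from_grid(code, bits=3):
    """Turn a stored integer code back into its representative value."""
    return code / (2 ** bits - 1)


coordinates = [0.872632982, 0.192934356, 0.445821930]

shaved = [truncate(c) for c in coordinates]
codes = [quantize_to_grid(c) for c in coordinates]
restored = [dequantize_from_grid(code) for code in codes]

print(shaved)    # the coordinates with their ends shaved off
print(codes)     # small integers that fit in 3 bits each
print(restored)  # approximate values recovered from the codes
```

Only the integer codes need to be stored; the grid itself is fixed, so the recovered values are close to the originals but not identical.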

This optimization step has two advantages:

- Enhanced Vector Search: It powers large-scale AI by enabling high-speed similarity matching, making search engines and retrieval systems significantly faster.

- Unclogged KV Cache Bottlenecks: By reducing the size of key-value pairs, it reduces memory costs and speeds up similarity searches within the cache, which is important for model performance.

When Does Vector Quantization Fail?

This process has a hidden cost: full-precision quantization constants (a scale and a zero point) must be stored for every block of data. They are needed so that the AI can later “unshave”, or dequantize, the data. This adds 1 or 2 extra bits for each number, which can amount to an overhead of up to 50% of your target size. Because each block needs its own scale and offset, you’re not only storing data but also storing instructions to decode it.
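The sketch below shows where that overhead comes from, using a plain block-wise quantizer. It assumes 16-bit constants and blocks of 32 or 16 numbers, which are illustrative choices that happen to reproduce the “1 or 2 extra bits” figure; the function names are hypothetical.

```python
def quantize_block(block, bits=4):
    """Quantize one block of floats to `bits`-bit integer codes.

    Returns the codes plus the two constants needed to decode them.
    """
    lo, hi = min(block), max(block)
    top_level = 2 ** bits - 1
    scale = (hi - lo) / top_level or 1.0  # fall back to 1.0 for constant blocks
    codes = [round((x - lo) / scale) for x in block]
    return codes, scale, lo  # `lo` plays the role of the zero point (offset)


def dequantize_block(codes, scale, zero_point):
    """Recover approximate floats from the codes using the stored constants."""
    return [code * scale + zero_point for code in codes]


def overhead_bits_per_value(block_size, constant_bits=16, num_constants=2):
    """Extra bits per number spent on storing the scale and the zero point."""
    return num_constants * constant_bits / block_size


block = [0.872632982, 0.192934356, 0.445821930, -0.310044871]
codes, scale, zero_point = quantize_block(block)
approx = dequantize_block(codes, scale, zero_point)
worst_error = max(abs(a - b) for a, b in zip(block, approx))
print(codes, f"worst error = {worst_error:.4f}")

for block_size in (32, 16):
    extra = overhead_bits_per_value(block_size)
    print(f"block of {block_size}: {extra:.0f} extra bits per value, "
          f"{extra / 4:.0%} on top of a 4-bit target")
```

Without `scale` and `zero_point` the codes cannot be decoded, so every block pays for them whether or not the data needed it.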

The solution reduces memory at the expense of accuracy. TurboQuant changes that trade-off.

TurboQuant: Compression without Caveats

Google’s TurboQuant is a compression method that achieves a large reduction in memory with little loss of accuracy by fundamentally changing how AI perceives vector space. Instead of just shrinking the numbers and hoping for the best, it uses a two-stage mathematical pipeline to fit any data onto the most efficient grid.

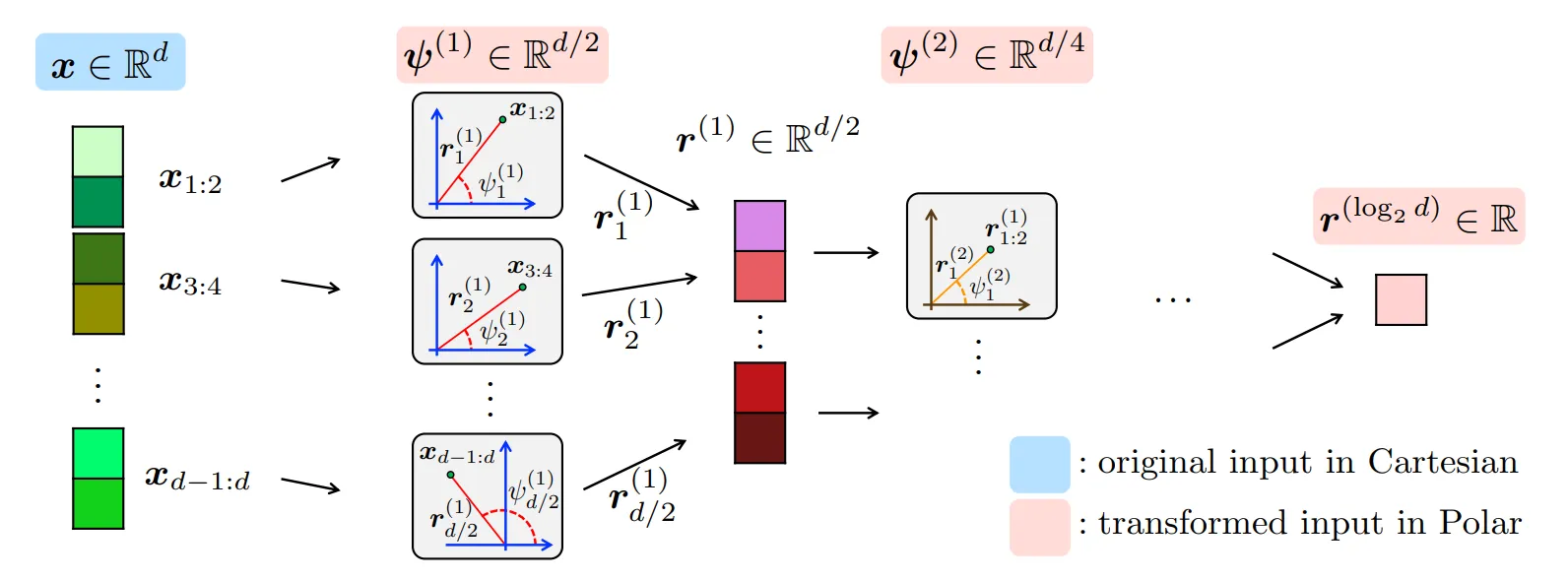

Stage 1: Random Rotation (PolarQuant)

Conventional quantization struggles because real-world data is messy and unpredictable. To remain accurate, you are forced to keep the “scale” and “zero point” instructions for every block of data.

TurboQuant solves this by first applying a random rotation (or random preconditioning) to the input vectors. This rotation forces the data into a predictable, fixed distribution (specifically, in polar coordinates) regardless of what the original data looked like. Random rotation distributes the information evenly across all dimensions, smoothing out spikes and making the data behave more uniformly.

- Benefit: Because the distribution is now “flat” and predictable, the AI can apply optimal rounding to every coordinate without needing to keep those extra “scale and zero point” constants.

- Result: You bypass the normalization step entirely, achieving huge memory savings with zero overhead.

To learn more about the PolarQuant method see: arXiv
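To see the “smoothing out spikes” effect in isolation, here is a minimal sketch that uses random sign flips followed by a Walsh-Hadamard transform. This is a common, cheap stand-in for a random rotation and is an assumption of this example; it is not PolarQuant itself and leaves out the polar-coordinate step entirely.

```python
import math
import random


def fast_walsh_hadamard(values):
    """Orthonormal Walsh-Hadamard transform; the length must be a power of two."""
    out = list(values)
    n = len(out)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    half = 1
    while half < n:
        for start in range(0, n, 2 * half):
            for i in range(start, start + half):
                a, b = out[i], out[i + half]
                out[i], out[i + half] = a + b, a - b
        half *= 2
    norm = 1 / math.sqrt(n)
    return [v * norm for v in out]


def random_rotation(vector, signs):
    """Flip signs at random, then mix all coordinates together."""
    return fast_walsh_hadamard([s * x for s, x in zip(signs, vector)])


def inverse_rotation(rotated, signs):
    """Undo `random_rotation`: the orthonormal Hadamard step is its own inverse."""
    return [s * x for s, x in zip(signs, fast_walsh_hadamard(rotated))]


rng = random.Random(0)
spiky = [8.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]  # all energy in one coordinate
signs = [rng.choice((-1.0, 1.0)) for _ in spiky]

rotated = random_rotation(spiky, signs)
print([round(v, 3) for v in rotated])          # energy is now spread across all coordinates
print(math.sqrt(sum(v * v for v in rotated)))  # the vector's length is preserved
```

Because the rotation is reversible and keeps lengths intact, nothing is lost by applying it; the data simply becomes easier to round with one shared grid.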

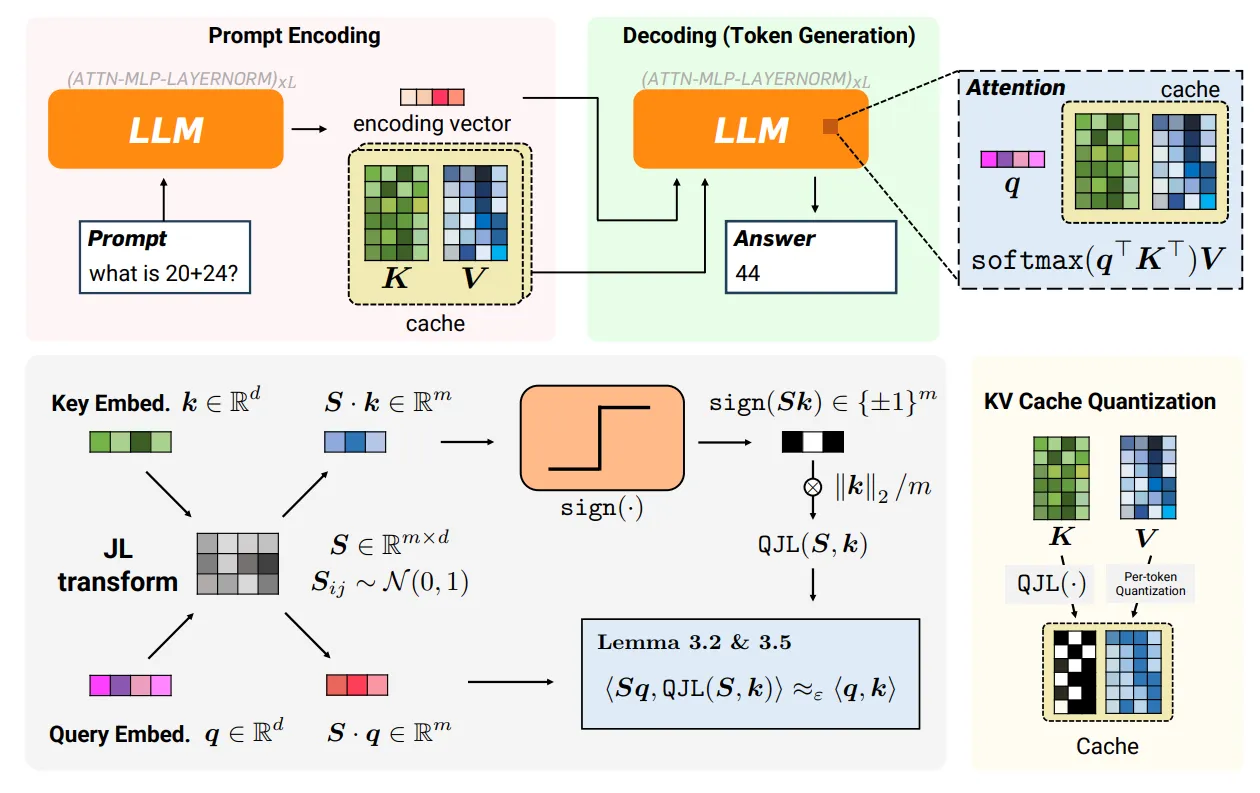

Stage 2: 1-Bit “Residual” Correction (Quantized JL)

Even with a perfect rotation, simple rounding introduces bias: small statistical errors that lean in one direction. Over time, these errors accumulate, causing the AI to lose its “train of thought” or hallucinate. TurboQuant fixes this using Quantized Johnson-Lindenstrauss (QJL).

- The Residual: It isolates the “residual” (leftover) error that was lost during the first stage of compression.

- The 1-Bit Sign: It quantizes this error down to a single bit (a sign bit, either +1 or -1).

- The Statistics: This 1-bit check acts as an “unbiased estimator,” meaning that over many operations, the tiny 1-bit signs statistically cancel out the bias.

To read more about the QJL method see: arXiv
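The idea of an unbiased 1-bit estimator can be sketched as follows. This is a simplified illustration assuming a Gaussian random projection and a sign-only sketch of the residual; the vectors, the function names, and the unrealistically large number of projections (used here only so the averaging is visible) are all assumptions of the example, not details taken from the QJL paper.

```python
import math
import random


def gaussian_matrix(rows, cols, rng):
    """Random projection matrix with independent standard normal entries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def project(matrix, vector):
    """Multiply the projection matrix by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def sign_sketch(matrix, vector):
    """Keep one bit (+1 or -1) per projected coordinate, plus the vector's length."""
    bits = [1 if p >= 0 else -1 for p in project(matrix, vector)]
    length = math.sqrt(sum(v * v for v in vector))
    return bits, length


def estimate_inner_product(matrix, query, bits, length):
    """Estimate <query, vector> from the 1-bit sketch of `vector`.

    The sqrt(pi / 2) factor makes the estimate unbiased for Gaussian projections.
    """
    projected_query = project(matrix, query)
    total = sum(p * b for p, b in zip(projected_query, bits))
    return math.sqrt(math.pi / 2) * length * total / len(bits)


rng = random.Random(0)
residual = [0.3, -0.1, 0.2, 0.05, -0.25, 0.1, 0.0, -0.15]  # made-up leftover error
query = [1.0, 0.5, -0.5, 0.25, 0.0, -1.0, 0.75, 0.2]       # made-up query vector

matrix = gaussian_matrix(4000, len(residual), rng)
bits, length = sign_sketch(matrix, residual)
estimate = estimate_inner_product(matrix, query, bits, length)
exact = sum(q * r for q, r in zip(query, residual))
print(f"exact = {exact:.4f}, estimate = {estimate:.4f}")
```

Each individual sign is a crude measurement, but their errors point in random directions, so the average lands close to the true value instead of drifting to one side.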

PolarQuant and QJL are used together in TurboQuant to relieve key-value cache bottlenecks without sacrificing AI model performance.

| Method | Memory | Accuracy | Overhead |
| --- | --- | --- | --- |
| Standard KV cache | High | Perfect | None |
| Quantization | Lower | Small loss | High (metadata) |
| TurboQuant | Much lower | Near-perfect | Minimal |

Performance Reality

By removing the metadata tax and correcting rounding bias, TurboQuant delivers a “best of both worlds” result for high-speed AI systems:

- Quality Neutrality: In tests with models such as Llama-3.1, TurboQuant matched the performance of the full-precision model while compressing memory by a factor of 4x to 5x.

- Fast Search: In nearest neighbor search, it outperforms existing techniques while reducing “indexing time” (the time required to prepare the data) to almost zero.

- Hardware Friendly: The entire algorithm is designed for vectorization, which means it can run in parallel on modern GPUs with a light footprint.

Reality: Beyond the Research Paper

The true impact of TurboQuant is measured not just by citations, but by how it reshapes the global economy and the physical hardware in our pockets.

1. Breaking the “Memory Wall”

For many years, the “Memory Wall” was the single biggest threat to AI development. As the models grew, they required large amounts of RAM and storage, making AI hardware prohibitively expensive and keeping powerful models locked away in the cloud.

When TurboQuant was unveiled, it fundamentally changed that equation:

- The Semiconductor Shift: The announcement of TurboQuant sent shock waves through the storage industry. If AI can suddenly get by with 6x less memory, the huge demand for physical RAM could cool down.

- From Cloud to Consumer: By shrinking the AI’s “digital cheat sheet” (the KV cache) down to 3 bits per value, TurboQuant has effectively “unclogged” the hardware bottleneck. This moves high-end AI from large server farms to 16GB consumer devices such as the Mac Mini, allowing high-performing LLMs to run locally and privately.
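As a quick sanity check on what 3 bits per value means, the arithmetic below assumes a 16-bit baseline and a hypothetical 16 GiB uncompressed cache. Both numbers are assumptions for illustration; real savings also depend on whatever small extras are stored alongside the codes.

```python
def compression_ratio(baseline_bits, compressed_bits):
    """How many times smaller the data becomes."""
    return baseline_bits / compressed_bits


def compressed_size_gib(baseline_gib, baseline_bits, compressed_bits):
    """Size after compression, given the size at the baseline precision."""
    return baseline_gib * compressed_bits / baseline_bits


ratio = compression_ratio(16, 3)
size = compressed_size_gib(16, 16, 3)
print(f"{ratio:.2f}x smaller: {size:.1f} GiB instead of 16 GiB")
```

Under these assumptions, a cache that would fill a 16GB machine on its own shrinks to a size that leaves room for the model itself.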

2. A New World Standard

TurboQuant has shown that the future of AI is not just about building bigger libraries, but about inventing a more efficient “ink”.

- “Invisible” Infrastructure: Unlike previous approaches that required complex retraining, TurboQuant is designed to be data agnostic. It can be applied to any existing transformer model (such as Google’s Gemini) to quickly reduce costs and energy consumption.

- Democratizing Intelligence: This efficiency provides a bridge for AI to reach new users. In mobile-first markets, it turns the dream of a fully capable, on-device AI assistant into a battery-friendly reality. Your next phone may run GPT-level AI locally!

Ultimately, TurboQuant marks the moment when AI efficiency becomes as critical as raw computing power. It is no longer just a “benchmark” achievement. It is the invisible scaffolding that enables the next generation of semantic search and autonomous agents to operate on a global, human scale.

TurboQuant: Future Outlook

For years, scaling AI meant throwing more hardware at the problem: more GPUs, more memory, more cost. TurboQuant challenges that belief.

Instead of expanding outward, it focuses on using what we already have more wisely. By reducing the memory load without significantly compromising performance, it changes the way we think about building and running large models.

Frequently Asked Questions

A. TurboQuant is an AI memory optimization method that reduces RAM usage by compressing KV cache data with minimal impact on performance.

A. It uses random rotation and efficient scaling to compress vectors, remove extra metadata and reduce the memory required for AI models.

A. Not completely, but it significantly reduces storage requirements, making larger models more efficient and easier to run on smaller hardware.
