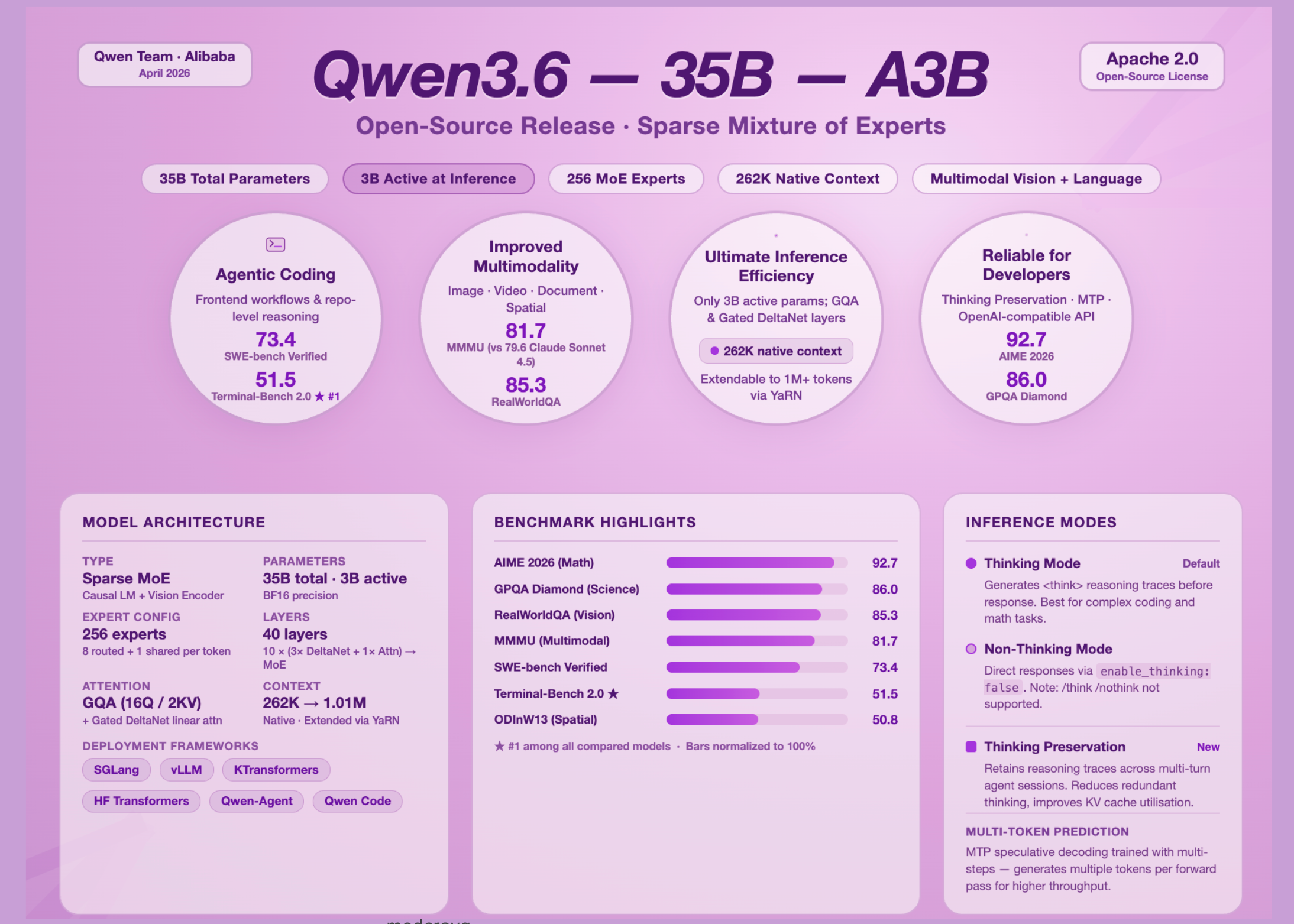

Qwen Team Open-Sources Qwen3.6-35B-A3B: A Sparse MoE Vision-Language Model with 3B Active Parameters and Agentic Coding Capabilities

The open-source AI space has a new entry worth paying attention to. The Qwen team at Alibaba has released Qwen3.6-35B-A3B, the first open-weight model from the Qwen3.6 generation, and it makes a strong argument that parameter efficiency matters more than raw model size. With 35 billion total parameters but only 3 billion activated at inference time, the model delivers agentic coding performance competitive with dense models ten times its active size.

What Is a Sparse MoE Model, and Why Does It Matter Here?

A Mixture of Experts (MoE) model does not use all of its parameters in every forward pass. Instead, it routes each input token through a small subset of specialized sub-networks called "experts," and the remaining parameters stay idle for that token. This means a model can have a very large total parameter count while keeping inference compute, and therefore cost and latency, proportional only to the number of active parameters.

Qwen3.6-35B-A3B is a causal language model with a vision encoder, trained through both pre-training and post-training stages, with 35 billion total parameters and 3 billion activated. Its MoE layers contain 256 experts in total, with 8 routed experts and 1 shared expert activated per token.
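To make the routing idea concrete, here is a minimal sketch of top-k expert routing for a single token in plain Python. It uses the 256-expert, top-8, one-shared-expert figures from the article, but everything else is an assumption for illustration: the toy scalar "experts", the choice to treat all 256 experts as the routed pool, and the decision to softmax-normalize weights over only the selected experts are not taken from the Qwen implementation.

```python
import math
import random

NUM_ROUTED_EXPERTS = 256  # expert count quoted in the article (assumed to be the routed pool)
TOP_K = 8                 # routed experts activated per token, per the article


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts for one token and return (index, weight) pairs."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    # Assumption: weights are normalized over the selected experts only.
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x, router_logits, experts, shared_expert):
    """Shared expert always runs; routed experts contribute weighted outputs."""
    out = shared_expert(x)
    for idx, weight in route_token(router_logits):
        out += weight * experts[idx](x)
    return out


# Toy setup: each "expert" is just a scalar multiplier (hypothetical stand-in
# for a feed-forward sub-network).
rng = random.Random(0)
experts = [(lambda x, s=rng.uniform(-1, 1): s * x)
           for _ in range(NUM_ROUTED_EXPERTS)]
shared_expert = lambda x: 0.5 * x
logits = [rng.gauss(0, 1) for _ in range(NUM_ROUTED_EXPERTS)]

selected = route_token(logits)
y = moe_forward(2.0, logits, experts, shared_expert)
print(f"{len(selected)} of {NUM_ROUTED_EXPERTS} routed experts ran for this token")
```

Only the selected experts are ever called, which is why compute tracks the active parameter count rather than the total.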

The architecture uses an unusual hidden layout worth understanding: a pattern of 10 blocks, each consisting of 3 instances of (Gated DeltaNet → MoE) followed by 1 instance of (Gated Attention → MoE). Across the resulting 40 layers, the Gated DeltaNet sublayers handle linear attention, a computationally cheaper alternative to standard attention, while the Gated Attention sublayers use Grouped Query Attention (GQA) with 16 attention heads for Q and only 2 for KV, which greatly reduces KV-cache memory pressure during inference. The model supports a native context length of 262,144 tokens, extensible up to 1,010,000 tokens with YaRN (Yet another RoPE extensioN) scaling.
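The layer pattern and its effect on KV-cache size can be worked through with a few lines of Python. This is a back-of-the-envelope sketch: the layer names, the head dimension of 128, the 2-byte value size, and the "all 40 layers with 16 KV heads" baseline are hypothetical choices for illustration, and the fixed-size state kept by the linear-attention layers is not counted.

```python
NUM_BLOCKS = 10
# Each block: 3 x (Gated DeltaNet -> MoE), then 1 x (Gated Attention -> MoE)
BLOCK_PATTERN = ["gated_deltanet"] * 3 + ["gated_attention"]


def build_layer_types(num_blocks=NUM_BLOCKS):
    """Expand the repeating block pattern into a flat per-layer list."""
    return [kind for _ in range(num_blocks) for kind in BLOCK_PATTERN]


def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Size of a standard KV cache: keys and values for every cached layer."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


layer_types = build_layer_types()
num_attention_layers = layer_types.count("gated_attention")

HEAD_DIM = 128       # hypothetical; the ratio below does not depend on it
SEQ_LEN = 262_144    # native context length from the article

# Layout described in the article: only the Gated Attention layers, 2 KV heads.
hybrid_gqa = kv_cache_bytes(num_attention_layers, 2, HEAD_DIM, SEQ_LEN)
# Hypothetical baseline: every layer uses attention with 16 KV heads.
full_mha = kv_cache_bytes(len(layer_types), 16, HEAD_DIM, SEQ_LEN)

print(len(layer_types), "layers,", num_attention_layers, "with a KV cache")
print("baseline / hybrid KV-cache ratio:", full_mha / hybrid_gqa)
```

Under these assumptions the reduction comes from two independent factors: 4x fewer layers that need a growing cache (10 instead of 40) and 8x fewer KV heads (2 instead of 16).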

Agentic Coding Is Where This Model Gets Serious

On SWE-bench Verified, the canonical benchmark for resolving real-world GitHub issues, Qwen3.6-35B-A3B scores 73.4, compared with 70.0 for Qwen3.5-35B-A3B and 52.0 for Gemma4-31B. On Terminal-Bench 2.0, which tests an agent completing tasks inside a real terminal environment with a three-hour timeout, Qwen3.6-35B-A3B scores 51.5, the highest among all compared models, including Qwen3.5-27B (41.6), Gemma4-31B, and Qwen3.5-35B-A3B (40.5).

Frontend code generation shows a sharp improvement. On QwenWebBench, a bilingual frontend code generation benchmark covering seven categories (Web Design, Web Applications, Games, SVG, Data Visualization, Animation, and 3D), Qwen3.6-35B-A3B scores 1397, well ahead of Qwen3.5-35B-A3B (978).

On STEM and reasoning benchmarks, the numbers are equally impressive. Qwen3.6-35B-A3B scores 92.7 on AIME 2026 (the full AIME I & II) and 86.0 on GPQA Diamond, a graduate-level scientific reasoning benchmark. Both results are competitive with much larger models.

Multimodal Vision Performance

Qwen3.6-35B-A3B is not a text-only model. It ships with a vision encoder and handles image, document, video, and spatial reasoning tasks natively.

On MMMU (Massive Multi-discipline Multimodal Understanding), a benchmark that tests university-level reasoning over images, Qwen3.6-35B-A3B scores 81.7, surpassing Claude-Sonnet-4.5 (79.6) and Gemma4-31B (80.4). On RealWorldQA, which tests visual understanding in real-world photographic scenes, the model reaches 85.3, ahead of Qwen3.5-27B (83.7) and well above Claude-Sonnet-4.5 (70.3) and Gemma4-31B (72.3).

Spatial intelligence is another area of measurable gain. On ODInW13, an object detection benchmark, Qwen3.6-35B-A3B scores 50.8, up from 42.6 for Qwen3.5-35B-A3B. For video understanding, it reaches 83.7 on VideoMMMU, besting Claude-Sonnet-4.5 (77.6) and Gemma4-31B (81.6).

Thinking Mode, Non-Thinking Mode, and a Key Behavior Change

One of the most useful design decisions in Qwen3.6 is explicit control over the model's reasoning behavior. Qwen3.6 models operate in thinking mode by default, generating reasoning content enclosed in thinking tags before the final response; thinking can be turned off by passing "enable_thinking": False in the chat template kwargs. However, practitioners migrating from Qwen3 should be aware of an important behavior change: Qwen3.6 does not officially support Qwen3's soft switches, that is, /think and /nothink. Mode switching should be done through the API parameter rather than inline prompt tokens.
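As a rough illustration of switching modes through a parameter instead of a prompt token, the sketch below builds an OpenAI-style chat completion request body with the thinking switch set. It assumes a serving stack that accepts a chat_template_kwargs field in the request body and forwards it to the chat template; the helper name build_chat_request and the model identifier are hypothetical, and nothing is actually sent over the network.

```python
import json


def build_chat_request(messages, model, enable_thinking=True):
    """Build a chat completion body with the thinking switch set explicitly.

    Assumption: the server forwards `chat_template_kwargs` to the chat template.
    """
    return {
        "model": model,
        "messages": messages,
        "chat_template_kwargs": {"enable_thinking": enable_thinking},
    }


request = build_chat_request(
    [{"role": "user", "content": "Summarize this diff in one sentence."}],
    model="Qwen/Qwen3.6-35B-A3B",  # assumed identifier; check the actual model page
    enable_thinking=False,         # non-thinking mode, set via parameter
)

print(json.dumps(request, indent=2))
```

Note that the user message contains no /think or /nothink marker; the mode lives entirely in the request parameters.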

A more novel addition is a feature called Thinking Preservation. By default, only the thinking blocks generated in response to the user's most recent message are retained; Qwen3.6 has additionally been trained to preserve and make use of thinking traces from earlier messages, which can be enabled by setting the preserve_thinking option. This capability is particularly beneficial in agentic scenarios, where maintaining the full reasoning context can improve decision consistency, reduce redundant reasoning, and improve KV-cache utilization in both thinking and non-thinking modes.
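The difference between the default behavior and preserved thinking can be pictured as a history-preparation step. The following is a conceptual sketch only, not the model's actual chat template: the function prepare_history and the "reasoning" message field are hypothetical names chosen for illustration.

```python
def prepare_history(messages, preserve_thinking=False):
    """Return a copy of the conversation with older reasoning kept or dropped.

    Default: reasoning survives only on assistant turns that follow the most
    recent user message. With preserve_thinking=True, all reasoning is kept.
    """
    last_user = max(
        (i for i, m in enumerate(messages) if m["role"] == "user"), default=-1
    )
    prepared = []
    for i, msg in enumerate(messages):
        msg = dict(msg)  # shallow copy so the caller's history is untouched
        is_historical = i < last_user
        if msg["role"] == "assistant" and is_historical and not preserve_thinking:
            msg.pop("reasoning", None)
        prepared.append(msg)
    return prepared


conversation = [
    {"role": "user", "content": "List the failing tests."},
    {"role": "assistant", "reasoning": "Run pytest first.", "content": "Two tests fail."},
    {"role": "user", "content": "Fix the first one."},
    {"role": "assistant", "reasoning": "Open test_io.py.", "content": "Patched."},
]

default_view = prepare_history(conversation)
preserved_view = prepare_history(conversation, preserve_thinking=True)

print("default keeps reasoning on turns:",
      [i for i, m in enumerate(default_view) if "reasoning" in m])
print("preserved keeps reasoning on turns:",
      [i for i, m in enumerate(preserved_view) if "reasoning" in m])
```

In a long agent loop, keeping earlier traces means the prompt prefix stays identical from turn to turn, which is one plausible reason the article links this feature to better KV-cache reuse.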

Key Takeaways

- Qwen3.6-35B-A3B is a sparse Mixture of Experts model with 35 billion total parameters but only 3 billion activated per token, which makes it much cheaper to run than its parameter count suggests, without sacrificing performance on complex tasks.

- The model's agentic coding capabilities are its strongest suit, with a score of 51.5 on Terminal-Bench 2.0 (the highest of all compared models), 73.4 on SWE-bench Verified, and a leading 1,397 on QwenWebBench, which covers frontend code generation across seven categories including Web Applications, Games, and Data Visualization.

- Qwen3.6-35B-A3B is a natively multimodal model, supporting image, video, and document understanding out of the box, with scores of 81.7 on MMMU, 85.3 on RealWorldQA, and 83.7 on VideoMMMU, outperforming Claude-Sonnet-4.5 and Gemma4-31B on each of these.

- The model introduces a new Thinking Preservation feature, which allows reasoning traces from earlier turns of a conversation to be retained and reused across multi-step agent workflows, reducing redundant reasoning and improving KV-cache efficiency in both thinking and non-thinking modes.

- Released under Apache 2.0, the model is fully open for commercial use and is compatible with the major open-source inference frameworks (SGLang, vLLM, KTransformers, and Hugging Face Transformers), with KTransformers specifically enabling heterogeneous CPU-GPU deployment in resource-constrained environments.
