Why AI Models Are Cheap

A year or two ago, using advanced AI models felt expensive enough that you had to think twice before asking anything. Today, using those same models feels cheap enough that you don’t even notice the cost.

This is not just because “technology has advanced” in a vague sense. There are concrete reasons behind it, and they come down to how AI systems use computation. That’s what people mean when they talk about token economics.

Tokens: The Basic Unit

AI doesn’t read words the way we do. It breaks the text into smaller building blocks called tokens.

A token is not always a full word. It can be a complete word (like “apple”), part of a word (like “un” in “unbelievable”), or just a punctuation mark such as a comma.
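To make the idea concrete, here is a minimal sketch of a greedy longest-match tokenizer. The vocabulary `VOCAB` and the function `tokenize` are made up for illustration; real tokenizers learn their vocabularies from data and split text differently.

```python
# Toy greedy longest-match tokenizer.
# VOCAB is a hypothetical vocabulary, not one used by any real model.
VOCAB = {"un", "believ", "able", "apple", ",", " "}

def tokenize(text):
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest vocabulary entry that matches at position i.
        for j in range(len(text), i, -1):
            if text[i:j] in VOCAB:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # Nothing matched: fall back to a single-character token.
            tokens.append(text[i])
            i += 1
    return tokens

print(tokenize("unbelievable, apple"))
# ['un', 'believ', 'able', ',', ' ', 'apple']
```

Note that one word became three tokens while another stayed whole, which is why token counts and word counts rarely match.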

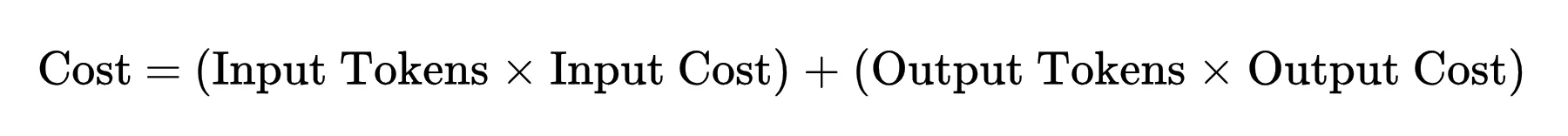

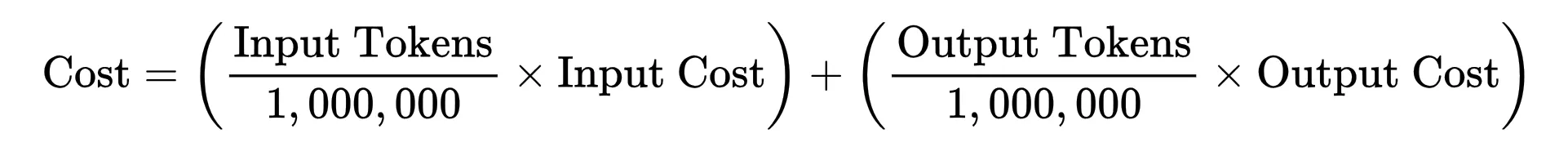

Each token generated requires a certain amount of computation. So when you break the picture down, the cost of using AI comes down to a simple relationship:

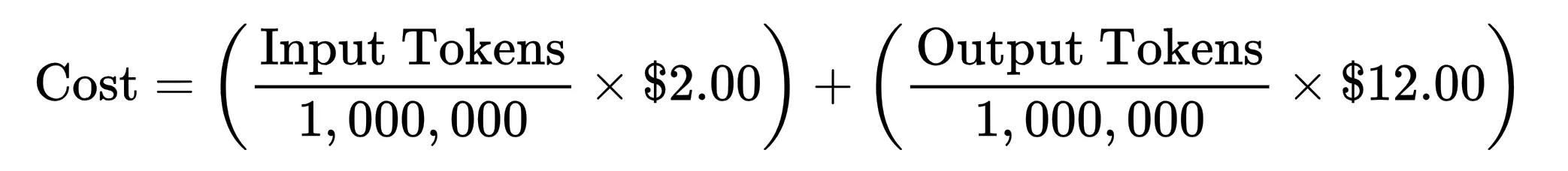

Since AI token prices are quoted per one million tokens, the equation becomes:


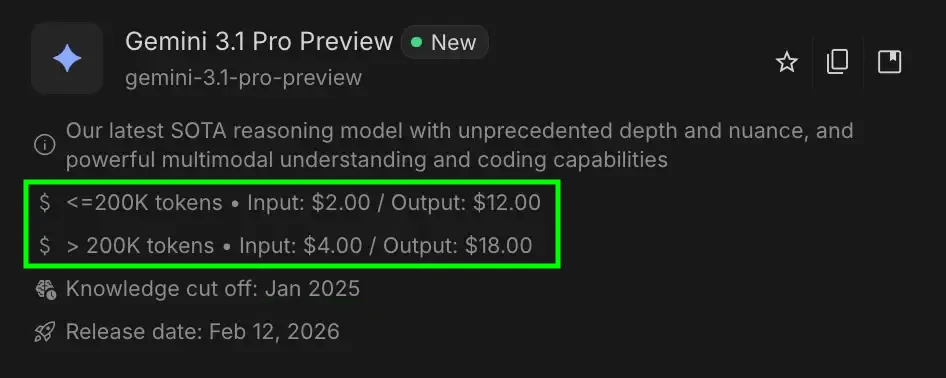

We’ll do the math for Gemini 3.1 Pro Preview.

Let’s say you send a prompt that is 50,000 tokens (Input Tokens) and the AI writes back 2,000 tokens (Output Tokens).
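As a rough sketch, the calculation for that request looks like this. The two prices are placeholders chosen for easy arithmetic, not the actual rates for any model; substitute the per-million-token prices from your provider's pricing page.

```python
# Placeholder prices in dollars per one million tokens (assumptions, not real rates).
INPUT_PRICE_PER_MILLION = 1.00
OUTPUT_PRICE_PER_MILLION = 10.00

def request_cost(input_tokens, output_tokens,
                 input_price=INPUT_PRICE_PER_MILLION,
                 output_price=OUTPUT_PRICE_PER_MILLION):
    """Cost = tokens / 1,000,000 * price per million, summed over input and output."""
    input_cost = input_tokens / 1_000_000 * input_price
    output_cost = output_tokens / 1_000_000 * output_price
    return input_cost + output_cost

cost = request_cost(input_tokens=50_000, output_tokens=2_000)
print(f"${cost:.3f}")  # $0.070 with the placeholder prices above
```

Output tokens are typically priced higher than input tokens, so a short reply can still account for a noticeable share of the bill.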

Tokens are the currency of AI. If you control the tokens, you control the cost.

If AI is getting less expensive, then we are doing one of two things:

- Reducing how much compute each token needs (Input/Output Tokens)

- Making that compute cheaper (Token price)

In fact, we did both!

Using less compute per token

The first wave of improvement came from a simple realization:

We were using more computation than necessary.

The original models treated all requests the same way. Small or large query, text or image input, they ran the full model at full precision every time. That works, but it is wasteful.

So the question was: where can we cut compute without harming the quality of the output?

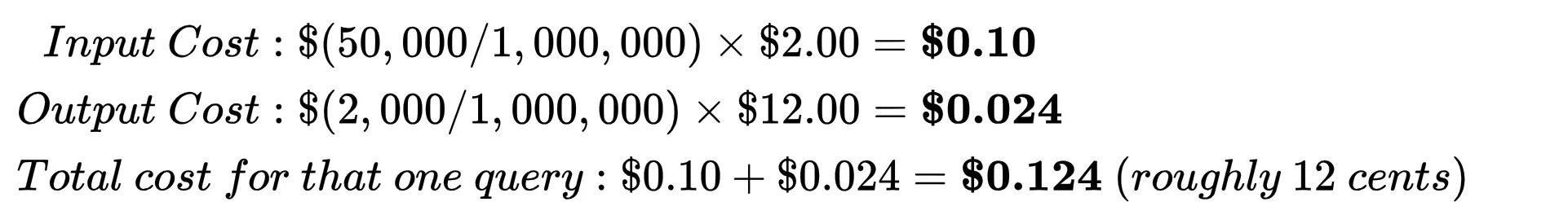

Quantization: Simplifying each operation

The most direct improvement came from quantization. Early models used high-precision numbers for their calculations. But it turns out that you can reduce that precision significantly without degrading performance in most cases.

This effect compounds quickly. Every token goes through thousands of such operations, so even a small reduction in each operation leads to a meaningful decrease in cost per token.

Note: Full-precision metadata (a scale and a zero point) must still be stored for each block. This storage matters because it is what lets the model convert the quantized values back to approximate originals later.
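The sketch below shows one common way to do this, asymmetric 8-bit quantization of a single block, in plain Python. The function names and the sample block are illustrative assumptions; production libraries use packed tensors and differ in the details.

```python
def quantize_block(values, bits=8):
    """Map one block of floats to unsigned integers plus (scale, zero_point)."""
    qmax = (1 << bits) - 1
    lo, hi = min(values), max(values)
    # The scale maps the block's float range onto the integer range.
    # The fallback only avoids division by zero; this sketch does not
    # reconstruct a constant block exactly.
    scale = (hi - lo) / qmax if hi > lo else 1.0
    zero_point = round(-lo / scale)
    q = [min(qmax, max(0, round(v / scale) + zero_point)) for v in values]
    return q, scale, zero_point

def dequantize_block(q, scale, zero_point):
    """Recover approximate floats from the integers and the stored metadata."""
    return [(x - zero_point) * scale for x in q]

block = [-0.62, -0.10, 0.0, 0.33, 0.91]  # made-up weights
q, scale, zero_point = quantize_block(block)
restored = dequantize_block(q, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(q, max_error)
```

Each value now fits in one byte instead of four, at the price of a small reconstruction error and two extra numbers per block.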

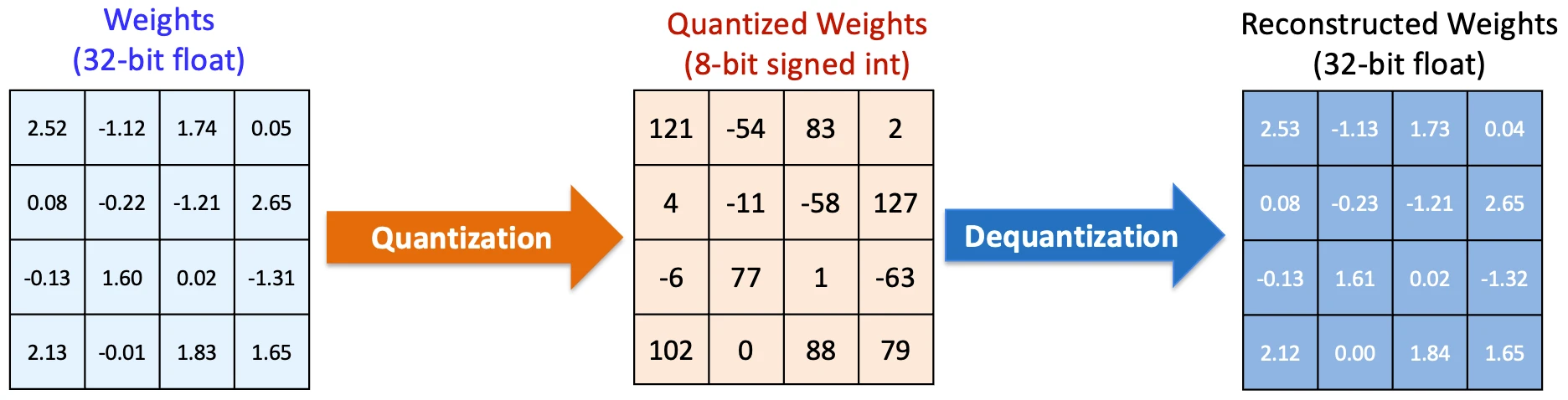

MoE Architecture: Not using the entire model every time

The next realization was even more impactful:

Maybe we don’t need the entire model to work on every response.

This led to architectures such as Mixture of Experts (MoE).

Instead of one big network that handles everything, the model is divided into smaller “experts”, and only a few of them are activated for a given input. A routing mechanism determines which ones are relevant.

So the model can still be large and capable, but for any given query, only a fraction of it does the work.

That directly reduces the computation per token without reducing the overall intelligence of the model.
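A minimal sketch of top-k routing, assuming scalar "experts" and hand-picked router scores. In a real model the experts are neural network layers and the scores come from a learned router; every name here is hypothetical.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, router_scores, experts, top_k=2):
    """Run only the top_k highest-scoring experts and mix their outputs."""
    chosen = sorted(range(len(experts)),
                    key=lambda i: router_scores[i], reverse=True)[:top_k]
    # Renormalize the router weights over the chosen experts only.
    weights = softmax([router_scores[i] for i in chosen])
    output = sum(w * experts[i](x) for w, i in zip(weights, chosen))
    return output, chosen

# Four toy experts; each is just a scalar function here.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
router_scores = [0.1, 2.0, 1.5, -1.0]  # made-up scores for one input

y, used = moe_forward(3.0, router_scores, experts)
print(used)  # [1, 2]: the other two experts did no work for this input
```

With four experts and `top_k=2`, half of the expert compute is skipped for this input, while all four remain available for inputs that route differently.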

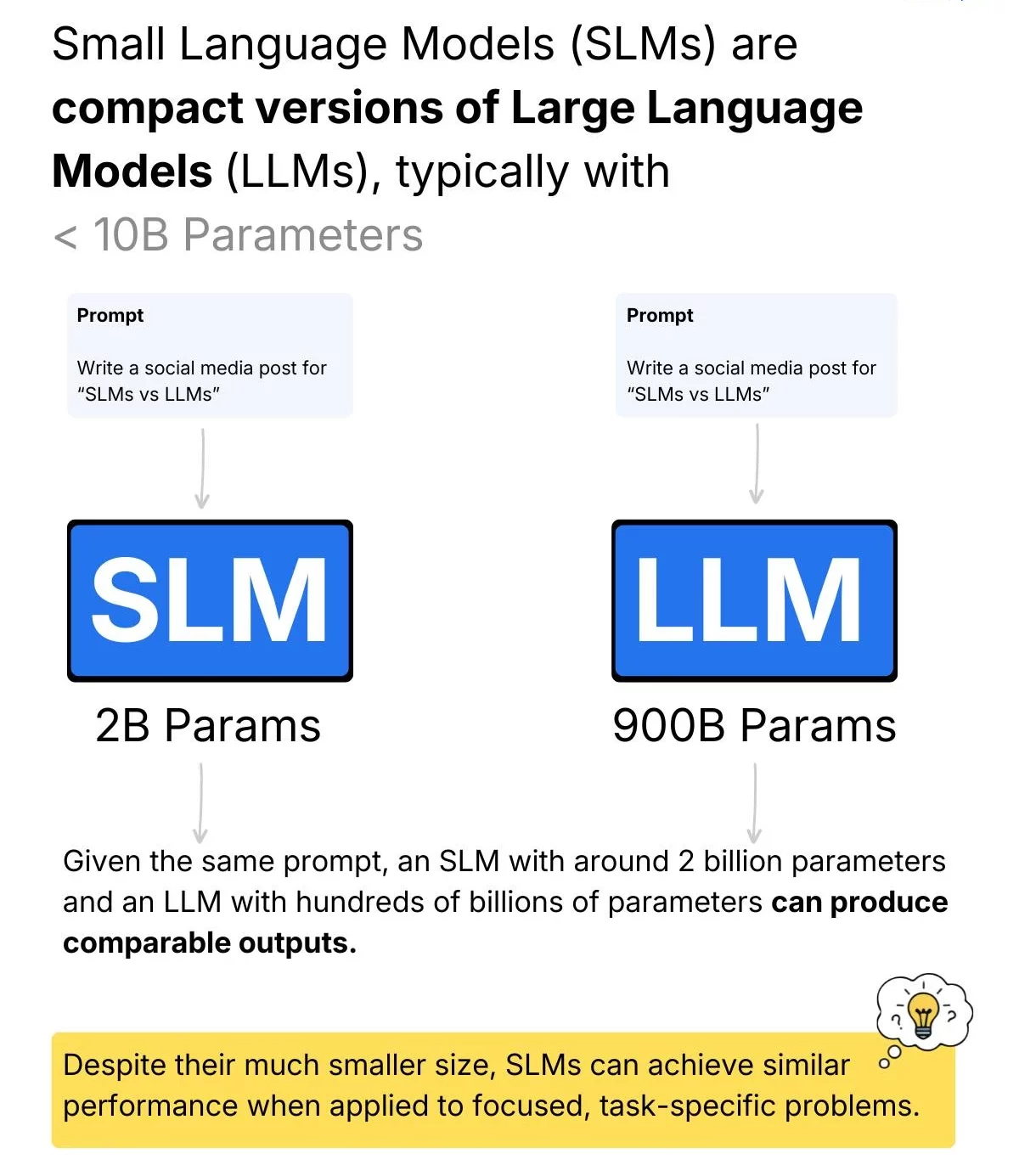

SLM: Choosing the right model size

Then came a more useful idea.

Most real-world tasks aren’t that difficult. Much of what we ask AI to do is repetitive or straightforward: summarizing text, formatting output, answering simple questions.

This is where Small Language Models (SLMs) come in. These are lightweight models designed to handle simple tasks efficiently. In today’s systems, they tend to handle the bulk of the work, while larger models are reserved for more difficult problems.

So instead of scaling up one model endlessly, use the smallest model that fits your purpose.
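Here is a small sketch of what that routing decision might look like. The model names, relative costs, and task labels are all hypothetical; a real system would route on richer signals than a task label.

```python
# Hypothetical relative cost per request for each model tier.
MODEL_COST = {"small": 1.0, "large": 20.0}

# Task types assumed simple enough for the small model.
SIMPLE_TASKS = {"summarize", "format", "simple_qa"}

def pick_model(task_type):
    """Send simple tasks to the small model, everything else to the large one."""
    return "small" if task_type in SIMPLE_TASKS else "large"

def total_cost(tasks):
    return sum(MODEL_COST[pick_model(t)] for t in tasks)

workload = ["summarize"] * 8 + ["multi_step_reasoning"] * 2
routed = total_cost(workload)
always_large = MODEL_COST["large"] * len(workload)
print(routed, always_large)  # 48.0 200.0
```

Under these made-up numbers, routing cuts the bill to roughly a quarter without touching the two hard requests.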

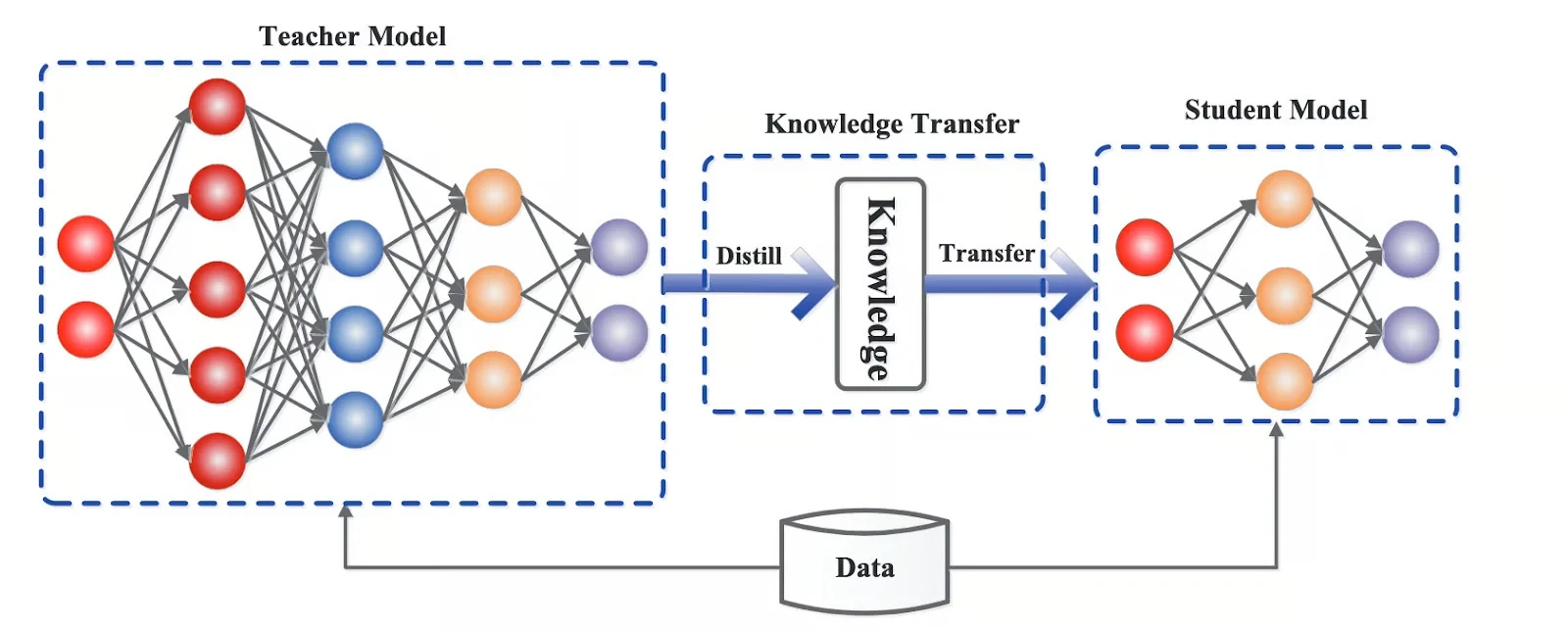

Distillation: Compressing larger models into smaller ones

Distillation is when a large model is used to train a smaller one, passing on its behavior in compressed form. The smaller model won’t match the original in every situation, but for most tasks, it comes surprisingly close.

This means you can use a much cheaper model while retaining most of the useful behavior.

Again, the theme is the same: reduce how much computation is required per token.
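One common way to set this up, though not the only one, is to train the small model to match the large model's softened output probabilities. The sketch below computes that loss for a single example in plain Python; the logits and the temperature value are made-up assumptions.

```python
import math

def softmax(logits, temperature=1.0):
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

teacher = [4.0, 1.0, 0.5]        # made-up logits over three classes
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 1.0, 4.0]

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

The loss is near zero when the student already agrees with the teacher and grows as their predictions diverge, which is the signal the training process minimizes.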

KV Caching: Avoiding duplicate work

Finally, there is the realization that not all calculations need to be done from scratch.

In real systems, inputs overlap. Conversations repeat patterns. Prompts share structure.

Modern implementations take advantage of this by keeping a cache of intermediate states from previous computations and reusing them. Instead of recomputing everything, the model picks up where it left off.

This does not change the model at all. It simply removes redundant work.

Note: Modern techniques such as TurboQuant apply extreme compression to the KV cache, which leads to even greater savings.
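The toy class below illustrates the reuse idea by counting how many per-token states are actually computed. It is a simplified stand-in: a real KV cache stores attention key and value tensors for each token, and the class name and placeholder state are assumptions for illustration.

```python
class PrefixCache:
    """Toy stand-in for a KV cache: reuses state for an already-seen prefix."""

    def __init__(self):
        self.tokens = []
        self.states = []
        self.computed = 0  # how many token states were computed from scratch

    def _compute_state(self, token):
        self.computed += 1
        return f"state({token})"  # placeholder for real key/value tensors

    def process(self, tokens):
        # Count how many leading tokens match what is already cached.
        shared = 0
        while (shared < min(len(tokens), len(self.tokens))
               and tokens[shared] == self.tokens[shared]):
            shared += 1
        # Keep cached states for the shared prefix; compute only the rest.
        new_states = [self._compute_state(t) for t in tokens[shared:]]
        self.states = self.states[:shared] + new_states
        self.tokens = list(tokens)
        return self.states

cache = PrefixCache()
cache.process(["You", "are", "a", "helpful", "assistant"])
cache.process(["You", "are", "a", "helpful", "assistant", "Hello"])
print(cache.computed)  # 6, not 11: the five-token prefix was reused
```

The second call only pays for the one new token, which is why long shared prefixes such as system prompts are cheap to repeat.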

Making the compute itself cheaper

Once the compute per token was reduced, the next step became obvious:

Make the remaining compute cheaper to run.

Running the same model more efficiently

Most of the progress here comes from optimizing inference itself.

Even with the same model, how you run it matters. Improvements in batching, memory access, and parallelism mean that the same computation can now be performed faster and with fewer resources.
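To see why batching helps, consider a deliberately simple cost model in which every batch pays one fixed overhead (for example, loading model weights) plus a small cost per request. The function and its default numbers are assumptions for illustration, not measurements of any real system.

```python
import math

def total_time(num_requests, batch_size, fixed_cost=10.0, per_request_cost=1.0):
    """Toy model: each batch pays one fixed cost plus a cost per request."""
    num_batches = math.ceil(num_requests / batch_size)
    return num_batches * fixed_cost + num_requests * per_request_cost

one_at_a_time = total_time(64, batch_size=1)
batched = total_time(64, batch_size=16)
print(one_at_a_time, batched)  # 704.0 104.0
```

The work per request is identical in both cases; batching only spreads the fixed overhead across more requests.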

You can see this in action with models like GPT-4 Turbo or Claude 4 Haiku. These are not entirely new tiers of intelligence; they are designed to be faster and cheaper to run than previous versions.

This is what often appears as an “optimized” or “turbo” variant. The intelligence has not changed: the execution has simply become tighter and more efficient.

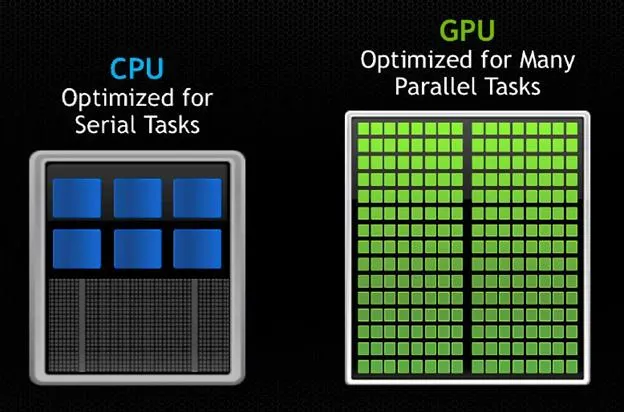

The hardware that powers all of this

All of these improvements benefit from hardware built for this type of work.

Companies like NVIDIA and Google have built chips specifically optimized for the types of operations AI relies on, especially large-scale matrix multiplication.

These chips are better at:

- handling low-precision calculations (important for quantization)

- moving data efficiently

- processing many operations in parallel

Hardware does not reduce costs by itself. But it makes all the other optimizations more effective.

Putting it all together

Early AI systems were wasteful. Every token used the full model, at full precision, every time.

Then things changed. We started cutting unnecessary work:

- simpler operations

- using only part of the model

- small models for simple tasks

- avoiding recomputation

Once the work was reduced, the next step was to make the remaining work cheaper to run:

- better execution

- smarter batching

- hardware designed for these exact tasks

That’s why costs are coming down faster than expected.

There is not just one thing driving this change. Rather, it is a steady shift toward using only the compute you really need.

Frequently Asked Questions

A. Tokens are the units of text that AI models process. More tokens mean more computation, which directly affects cost and performance.

A. AI is cheaper because systems have reduced the computation per token and made that computation more efficient through optimization techniques and better hardware.

A. AI pricing is based on input and output tokens, with rates quoted per one million tokens, so the cost combines how many tokens of each type you use with the rate for that type.
