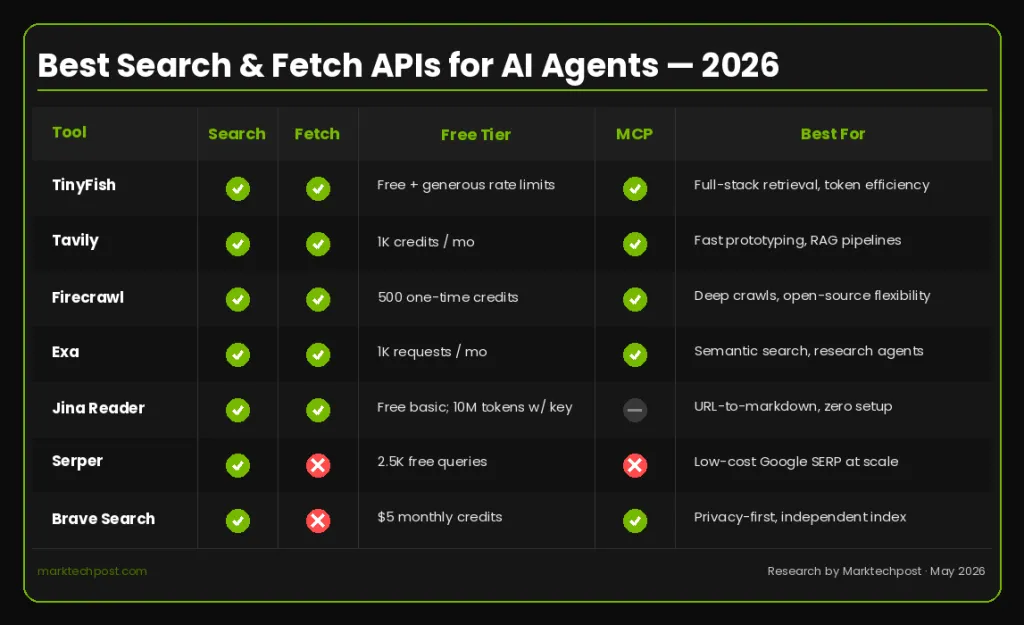

Top Search and Fetch APIs for Building AI Agents in 2026: Tools, Tradeoffs, and Free Tiers

Web search and content retrieval have quietly become the most important infrastructure decisions in AI agent development. An agent without reliable access to live web data is effectively working from outdated information, a serious limitation for any production deployment that handles research, lead enrichment, competitive intelligence, or real-time monitoring. In 2026, the ecosystem of search and retrieval APIs has grown significantly, with purpose-built tools replacing the old pattern of wrapping raw Google SERP data and passing it directly to the language model.

This article covers the leading search and fetch APIs, evaluated on output format, agent-oriented design, token efficiency, free-tier allocation, latency, and framework integration.

TinyFish

TinyFish is a notable entrant in this space and among the most agent-oriented tools in the group. Its Search and Fetch endpoints are free, subject to rate limits: one API key, no credit card. The free plan allows 5 requests/minute for Search and 25 requests/minute for Fetch. Search runs on api.search.tinyfish.ai and returns structured JSON designed for agent retrieval rather than human browsing. TinyFish says p50 Search latency is under 0.5 seconds, fast enough to stay inside the agent tool loop without degrading the user experience. Fetch runs on api.fetch.tinyfish.ai, using a full browser that renders any URL, including JavaScript-heavy SPAs, dynamic content, and bot-protected pages, and returns clean Markdown, JSON, or HTML. Failed URLs are not charged.
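
To stay inside per-minute limits like the ones quoted above, an agent can throttle its own tool calls on the client side. The following is a minimal sketch of a sliding-window limiter; the class name and its parameters are illustrative and are not part of any TinyFish SDK.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `max_calls` calls in any `period`-second window."""

    def __init__(self, max_calls, period=60.0, clock=time.monotonic, sleep=time.sleep):
        self.max_calls = max_calls
        self.period = period
        self._clock = clock   # injectable so the limiter can be tested without waiting
        self._sleep = sleep
        self._calls = deque()  # timestamps of calls still inside the window

    def acquire(self):
        """Block until a call is allowed, then record it."""
        while True:
            now = self._clock()
            # Drop timestamps that have left the window.
            while self._calls and now - self._calls[0] >= self.period:
                self._calls.popleft()
            if len(self._calls) < self.max_calls:
                self._calls.append(now)
                return
            # Window is full: wait until the oldest call expires.
            self._sleep(self.period - (now - self._calls[0]))


# Free-plan limits quoted in the text: 5 searches/minute, 25 fetches/minute.
search_limiter = SlidingWindowLimiter(max_calls=5)
fetch_limiter = SlidingWindowLimiter(max_calls=25)
```

Calling `search_limiter.acquire()` before each Search request (and `fetch_limiter.acquire()` before each Fetch) keeps the agent loop from tripping the limit, at the cost of occasionally blocking the tool call.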

Token efficiency is a strong differentiator. Many native fetch tools, including the fetch features built into LLM clients, return raw HTML: scripts, navigation, ads, and cookie banners. TinyFish Fetch strips all of that before the content reaches the model, resulting in lower token consumption per page and lower LLM cost per call. The platform runs its own custom Chromium fleet end-to-end without middleware, which enables both the free pricing and the output quality. Importantly, these are the same endpoints that power production agent workloads, not a demo tier. The same API key and dashboards carry over if you go beyond the free plan; no code changes are required.
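
The effect of stripping page chrome before it reaches the model can be illustrated with the standard library alone. This is a rough sketch, not TinyFish's actual pipeline: the list of skipped tags and the four-characters-per-token estimate are assumptions chosen for the example.

```python
from html.parser import HTMLParser

# Tags whose contents rarely help an LLM (an assumption for this sketch).
SKIP_TAGS = {"script", "style", "nav", "header", "footer", "aside"}


class MainTextExtractor(HTMLParser):
    """Collect visible text while ignoring everything inside SKIP_TAGS."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def clean_text(html):
    parser = MainTextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


def rough_tokens(text):
    # Crude heuristic (~4 characters per token); real tokenizers differ.
    return max(1, len(text) // 4)


raw = """<html><head><style>body{margin:0}</style><script>track()</script></head>
<body><nav><a href="/">Home</a><a href="/pricing">Pricing</a></nav>
<main><h1>Release notes</h1><p>Version 2 adds streaming.</p></main>
<footer>Cookie settings</footer></body></html>"""

cleaned = clean_text(raw)
print(cleaned)
print(rough_tokens(raw), "->", rough_tokens(cleaned))
```

Even on this tiny page, most of the raw payload is markup and chrome rather than content, which is the cost a cleaned fetch result avoids.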

TinyFish is available on the surfaces developers already use. Direct access is via REST API (api.search.tinyfish.ai and api.fetch.tinyfish.ai). MCP support is a single JSON config entry for Claude, Cursor, Codex, ChatGPT desktop, and any MCP-aware client. The CLI (npm install -g @tiny-fish/cli) writes results directly to the file system instead of through the model context window, keeping token usage low and output organized. The Agent Skill (npx skills add github.com/tinyfish-io/tinyfish-cookbook --skill tinyfish) teaches the agent when to call Search vs. Fetch and how to use each; it installs in one line and works with Claude Code, Codex, Cursor, OpenCode, and Antigravity. Python and TypeScript SDKs are also available.

Agent harnesses and framework integrations include Claude Code, OpenClaw, Hermes Agent (Nous Research), Cline, Cursor, Codex, LangChain, and CrewAI. Platform integrations include n8n (via the n8n-nodes-tinyfish public node), Dify (the TinyFish Web Agent plugin on the Dify Marketplace), and Vercel Skills. ChatGPT App and MCP Apps are also supported.

Tavily

Tavily is a real-time search engine built specifically for AI agents and RAG workflows, providing fast APIs for web search and content extraction. The Researcher plan is free and includes 1,000 API credits per month, enough for prototyping and light testing. The paid tiers are as follows: Project at $30/month (4,000 credits), Bootstrap at $100/month (15,000 credits), and Startup at $220/month (38,000 credits). A pay-as-you-go option is available at $0.008 per credit with no monthly commitment. Credits reset monthly and do not roll over.
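
The tier prices above imply different effective rates per credit, which matters when choosing between a plan and pay-as-you-go. The sketch below works through that arithmetic using only the figures quoted in this article; it assumes pay-as-you-go bills every credit at the flat rate and that a plan cannot be topped up, which may not match Tavily's actual billing rules.

```python
# (monthly price in USD, included credits), as quoted in the article.
PLANS = {
    "Project": (30, 4_000),
    "Bootstrap": (100, 15_000),
    "Startup": (220, 38_000),
}
PAYG_PER_CREDIT = 0.008  # USD per credit
FREE_CREDITS = 1_000     # Researcher plan


def cost_per_1k(price, credits):
    """Effective price per 1,000 credits if the plan is fully used."""
    return price / credits * 1000


def cheapest_option(credits_needed):
    """Return (name, monthly cost) of the cheapest way to cover the usage."""
    if credits_needed <= FREE_CREDITS:
        return ("Researcher (free)", 0.0)
    options = [("Pay-as-you-go", credits_needed * PAYG_PER_CREDIT)]
    for name, (price, credits) in PLANS.items():
        if credits >= credits_needed:
            options.append((name, float(price)))
    return min(options, key=lambda option: option[1])


for name, (price, credits) in PLANS.items():
    print(f"{name}: ${cost_per_1k(price, credits):.2f} per 1,000 credits")
print(cheapest_option(3_000))
print(cheapest_option(4_000))
```

Under these assumptions, pay-as-you-go ($8.00 per 1,000 credits) only beats the Project plan below 3,750 credits per month; above that, the plan is cheaper if its quota is actually used.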

Tavily is notable for its deep integration with LangChain and LlamaIndex and for its pre-processing layer, which returns curated, relevance-filtered snippets rather than raw SERP data. One important development to watch: Nebius announced an agreement to acquire Tavily in February 2026, which raised questions among some teams about future pricing stability and roadmap direction when assessing long-term infrastructure dependence. Despite this, Tavily remains the fastest way to go from zero to a working prototype and has extensive integration with LLM frameworks.

Firecrawl

Firecrawl converts any URL into clean, LLM-ready markdown or structured JSON, and is ready to use out of the box: it connects to any MCP client with a single command, parses web-hosted PDFs and DOCX files, and supports click, scroll, and other interaction actions before extracting content. It offers four modes of operation: Scrape (a single URL to markdown or JSON), Crawl (recursive domain crawling), Map (URL discovery without downloading content), and an Agent endpoint for natural-language-driven data extraction.

The free plan offers 500 one-time credits, enough to test the API and run a proof of concept, but not a recurring production quota. Paid plans start at $16/month (Hobby, 3,000 credits/month) and go up to $83/month (Standard, 100,000 credits/month, billed annually). Credits do not roll over from month to month on standard plans. Firecrawl is open source under AGPL-3.0, which makes it a reasonable option for teams with data sovereignty requirements. Framework support is extensive: LangChain, LlamaIndex, CrewAI, Flowise, and Dify all have native integrations. The MCP server installs with npx -y firecrawl-mcp and works in Claude Code, Cursor, Windsurf, and VS Code.

Exa

Exa takes a very different approach to search. Instead of matching keywords, it uses neural embeddings to understand the meaning of the query, which is why Cursor uses Exa to power its @web feature. This makes it particularly suitable for research agents, RAG systems where semantic similarity matters more than recency, and pipelines that need to find semantically related documents across a topic rather than a single recent result.

Exa's pricing structure is simple. Text and highlights content are now included in the base price of a Search request, for up to 10 results per request, where content extraction was previously charged separately. The free tier offers up to 1,000 requests per month. Search with contents is priced at $7 per 1,000 requests. Exa ships an official MCP server that supports Claude Desktop, Claude Code, VS Code, Windsurf, and Gemini CLI.

Jina AI Reader

Jina Reader converts any URL into LLM-friendly Markdown simply by prefixing the URL with r.jina.ai, with web search available through s.jina.ai. The Reader API is free for basic use (no API key required). A key is only required to unlock higher rate limits, and usage is then billed by content length rather than per request. New API keys include 10,000,000 free tokens at registration. Jina AI now operates under Elastic following its acquisition, and the platform is committed to the continued development of the Reader, Embeddings, and Reranker APIs.

The usage pattern is as simple as it gets: no SDK, no configuration, just a URL prefix. However, the limitations are real. Jina is not immune to anti-bot systems and will return an error when blocked. Jina Reader itself is not as deeply integrated into agent frameworks such as LangChain or LangGraph as Tavily, Firecrawl, or Exa are, although Jina AI maintains integrations primarily around its embedding and reranker products. Its search endpoint (s.jina.ai) returns the full content of the top five results rather than a configurable ranked list.
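
The URL-prefix pattern can be sketched in a few lines of standard-library Python. This assumes the public Reader prefix `https://r.jina.ai/` and a Bearer-style `Authorization` header for keyed access; the helper names are illustrative, and a blocked or failed page is reduced to `None` here for simplicity.

```python
from urllib.request import Request, urlopen

READER_PREFIX = "https://r.jina.ai/"  # assumed public Reader prefix


def reader_url(target_url):
    """Build the Reader URL by prefixing the target URL."""
    return READER_PREFIX + target_url


def read_page(target_url, api_key=None, timeout=30):
    """Fetch a page through the Reader; return its text, or None on failure."""
    headers = {"User-Agent": "agent-example/0.1"}
    if api_key:
        # Assumption: keyed access uses a Bearer token header.
        headers["Authorization"] = f"Bearer {api_key}"
    request = Request(reader_url(target_url), headers=headers)
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.read().decode("utf-8", errors="replace")
    except OSError:
        # Covers HTTP errors, connection failures, and timeouts,
        # including the error returned when the target site blocks the reader.
        return None


print(reader_url("https://example.com/docs"))
```

Returning `None` on failure lets the calling agent fall back to another fetch provider when a page is blocked.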

Serper

Serper is one of the least expensive options for raw Google SERP data, at $1 per 1,000 queries on the Starter plan and down to $0.30 per 1,000 on higher-volume plans. New accounts get 2,500 free queries with no credit card required. It returns structured JSON that includes SERP-specific elements such as knowledge graphs and answer boxes. Serper does not handle content retrieval or page fetching; it is a search results API only. An effective architecture for cost-sensitive pipelines is usually Serper for search combined with Jina Reader or TinyFish Fetch for content retrieval.
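
The search-plus-fetch architecture described above can be sketched independently of any provider by passing the two steps in as functions. In this sketch `search` and `fetch` are hypothetical callables supplied by the caller (for example, thin wrappers around a SERP API and a reader API); no real Serper, Jina, or TinyFish endpoint or response shape is modeled here.

```python
def search_then_fetch(query, search, fetch, top_k=3):
    """Run a search, then fetch page content for the first `top_k` URLs.

    search(query) -> iterable of URL strings (cheap SERP provider)
    fetch(url)    -> page text, or None / raises on failure (content provider)
    """
    pages = []
    for url in list(search(query))[:top_k]:
        try:
            content = fetch(url)
        except Exception:
            content = None  # treat any fetch error as a skipped page
        if content:
            pages.append({"url": url, "content": content})
    return pages


# Usage with stand-in functions instead of real network calls.
def fake_search(query):
    return ["https://a.example", "https://b.example", "https://c.example"]


def fake_fetch(url):
    if "b.example" in url:
        raise TimeoutError("blocked")
    return f"content of {url}"


results = search_then_fetch("vector databases", fake_search, fake_fetch, top_k=2)
print(results)
```

Keeping the two steps separate means the cheap search call runs on every query while the more expensive fetch runs only for the few URLs the agent actually needs.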

Brave Search API

Brave Search operates a fully independent index of over 40 billion pages with no dependency on Google or Bing, making it a strong choice for organizations with privacy or compliance needs. It offers strong privacy controls, with Zero Data Retention available to enterprise customers. It also ships an official MCP server that supports web, local business, image, video, and news searches.

Brave recently removed its free tier for new users, replacing it with a credit-based metered plan. New users get $5 in monthly credits, about 1,000 queries, before their card is charged $5 per 1,000 queries. Existing users on the old free plan are grandfathered and retain their previous access. Brave does not provide an endpoint for fetching or extracting content; it is a search provider only, best suited for deployments where index independence and privacy controls are critical requirements.
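
The credit-based pricing above reduces to simple arithmetic. The sketch below assumes the $5 monthly credit is subtracted from usage billed at $5 per 1,000 queries and that unused credit does not carry over; the function name and defaults are illustrative.

```python
def brave_monthly_charge(queries, price_per_1k=5.0, monthly_credit=5.0):
    """Estimated card charge in USD for one month of queries."""
    usage = queries / 1000 * price_per_1k
    return max(0.0, usage - monthly_credit)


for volume in (500, 1_000, 10_000):
    print(volume, brave_monthly_charge(volume))
```

Under these assumptions the first 1,000 queries each month cost nothing, and 10,000 queries cost $45.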

Key Takeaways

- TinyFish is the overall winner for both fetch and search. It is a solid choice for developers who need Search, Fetch, and agent-native integrations under one platform, with a free tier offering the first 500 credits to test both endpoints in a real workflow.

- Tavily remains the fastest route to production-grade agent search and has the deepest LLM framework integration in the category, although its credit tiers leave limited headroom at scale.

- Exa is strongest in semantic discovery and coding-agent search, where neural matching outperforms keyword engines.

- Firecrawl is a strong choice for extraction-heavy workflows and for teams looking for an open source foundation they can host themselves.

- Jina Reader is the lowest-friction way to turn a URL into Markdown, requiring nothing but a URL prefix to run.

- Serper is the low-cost option for Google SERP data at volume.

- Brave offers a robust independent index for privacy-sensitive deployments, now with an official MCP server.
