New in Claude Managed Agents: dreaming, results, and multiagent orchestration

Today we are introducing dreaming in Claude Managed Agents as a research preview. Dreaming extends memory by reviewing past sessions to find patterns and help agents improve themselves. We are also making results, multiagent orchestration, and webhooks available to developers building with Managed Agents. Together, these updates enable agents to handle complex tasks with minimal supervision.

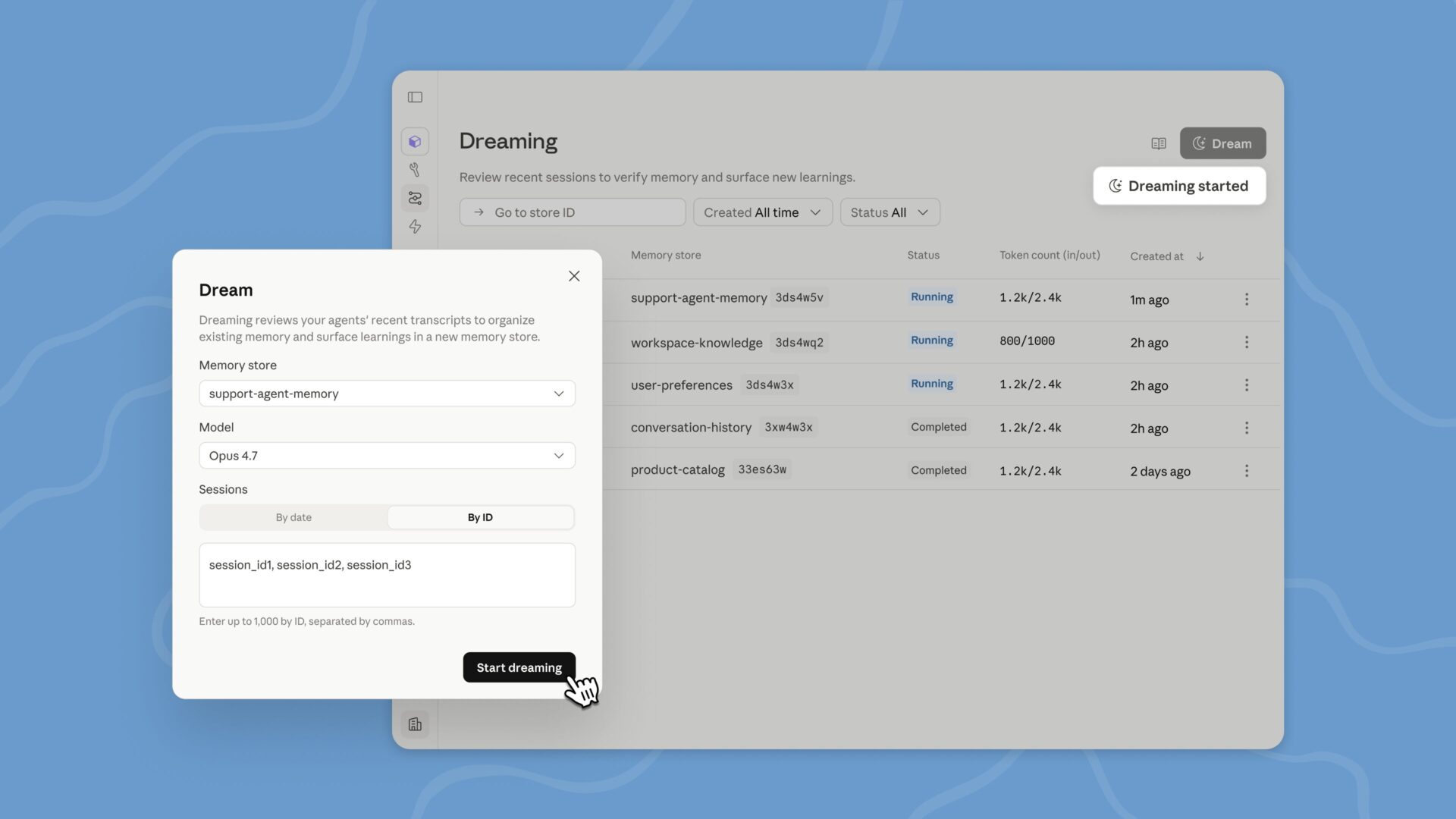

Build self-improving agents with dreaming

Dreaming is a structured process that reviews your agents' sessions and memory stores, extracts patterns, and curates memories so your agents can improve over time. You decide how much control you want: dreaming can update memory automatically, or you can review the changes before they take effect.

Dreaming surfaces patterns that a single agent cannot see on its own, including recurring errors, workflows that agents converge on, and preferences shared across a team. It also restructures memory so that it stays high-signal as it evolves. This is especially useful for long-running workloads and multiagent orchestration.
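As a rough illustration of the idea only, and not of how dreaming is actually implemented, the sketch below scans notes from several sessions, keeps the ones that recur, and either proposes them for review or writes them to memory directly. The `dream` function, its parameters, and the note strings are all hypothetical.

```python
from collections import Counter


def dream(
    sessions: list[list[str]],
    memory: set[str],
    min_sessions: int = 2,
    auto_apply: bool = False,
) -> list[str]:
    """Propose memory entries for notes that recur across sessions (toy model)."""
    # Count each note once per session so one noisy session cannot dominate.
    counts = Counter(note for session in sessions for note in set(session))
    proposals = sorted(
        note
        for note, seen in counts.items()
        if seen >= min_sessions and note not in memory
    )
    if auto_apply:
        # Automatic mode: write the proposals straight into memory.
        memory.update(proposals)
    # Review mode: the caller inspects the proposals before applying them.
    return proposals


# Hypothetical session notes, invented for this example.
sessions = [
    ["timeout calling billing API", "user prefers CSV exports"],
    ["timeout calling billing API"],
    ["user prefers CSV exports", "one-off typo in config"],
]
memory: set[str] = set()
pending = dream(sessions, memory)
print(pending)
```

With `auto_apply=False` the memory set is left untouched and the caller decides what to keep, which mirrors the review-before-apply option described above. Passing `auto_apply=True` mirrors the automatic mode.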

Together, memory and dreaming form a robust memory system for self-improving agents. Memory lets each agent capture what it learns as it works. Dreaming refines that memory between sessions, so learnings are shared across agents and kept up to date.

Dreaming is available in Managed Agents on the Claude Platform. Developers can request access here.

Deliver better results

With results, you write a rubric that describes what success looks like and what the agent is working towards. A separate grader evaluates the output against your criteria in its own context window, so it is not influenced by the agent's reasoning. If something is wrong, the grader points out what needs to change and the agent takes another pass.
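The loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the Managed Agents API: `run_with_rubric`, `Verdict`, and the toy agent and evaluator are hypothetical stand-ins that run without any model calls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    passed: bool
    feedback: str  # what needs to change; empty when the output passes


def run_with_rubric(
    task: str,
    rubric: str,
    agent: Callable[[str, str], str],       # (task, feedback) -> output
    grader: Callable[[str, str], Verdict],  # (rubric, output) -> verdict
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Return the final output and the number of attempts used."""
    feedback = ""
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = agent(task, feedback)
        # The grader sees only the rubric and the output, never the agent's reasoning.
        verdict = grader(rubric, output)
        if verdict.passed:
            return output, attempt
        feedback = verdict.feedback
    return output, max_attempts


# Toy stand-ins (hypothetical) so the sketch runs on its own.
def toy_agent(task: str, feedback: str) -> str:
    draft = "Quarterly summary"
    if "risks" in feedback:
        draft += " with a risks section"
    return draft


def toy_grader(rubric: str, output: str) -> Verdict:
    if "risks" in output:
        return Verdict(True, "")
    return Verdict(False, "Add a risks section")


final_output, attempts = run_with_rubric(
    "Summarize the quarter", "Must include a risks section", toy_agent, toy_grader
)
print(final_output, attempts)
```

Keeping the evaluator's inputs limited to the rubric and the output is the point of the design: the check stays independent of whatever reasoning produced the draft.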

Agents do their best work when they know what "good" looks like. That might mean following a structural framework, a presentation standard, or a set of requirements that must be met. With results, agents can check their work against that bar and keep adjusting until the output is good enough, without a human reviewing each attempt.

Results are especially useful for tasks that require attention to detail and thorough coverage. They also work for subjective quality, such as whether copy matches the brand's voice or a design follows visual guidelines. In testing, results improved task performance by up to 10 points over a standard prompting loop, with the biggest gains on the most difficult problems. Results also improved the quality of file generation, with +8.4% task success on docx and +10.1% on pptx in our internal benchmarks.

You can also now define a result, let the agent run, and be notified by a webhook when it’s done.
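On the receiving side, a webhook handler might look roughly like the sketch below. The payload shape is an assumption: the `session_id` and `status` fields and the `"completed"` value are hypothetical, so check the actual webhook schema before relying on any of them.

```python
import json


def handle_webhook(raw_body: bytes) -> str:
    """Turn a completion notification into a short status line."""
    event = json.loads(raw_body)
    # Hypothetical field names; the real payload may differ.
    session_id = event.get("session_id", "unknown")
    status = event.get("status", "unknown")
    if status == "completed":
        return f"session {session_id} finished; fetch its output"
    return f"session {session_id} reported status {status!r}; no action taken"


# Example payload, invented for this sketch.
sample = json.dumps({"session_id": "sess_123", "status": "completed"}).encode()
print(handle_webhook(sample))
```

A production receiver would also verify that the request is authentic before acting on it.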

Manage complex operations with multiple agents

When there is too much work for a single agent to do well, multiagent orchestration lets a lead agent break the work into pieces and delegate each piece to a specialist with its own model, prompt, and tools. For example, a lead agent can run an investigation while subagents dig through release history, error logs, metrics, and support tickets.

These specialist agents work in parallel on a shared file system and contribute to the lead agent's overall context. The lead agent can also check back in with other agents partway through the workflow, because events are persistent and every agent remembers what it did. You can also trace every step in the Claude Console: which agent did what, in what order, and why, giving you full visibility into how the work was delegated and executed.
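The fan-out pattern described in this section can be sketched with plain Python threads. This is only an analogy for the shape of the workflow, not the orchestration API: `investigate`, `summarize`, and the lambda "agents" are hypothetical placeholders for real model-backed agents.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Specialist = Callable[[str], str]


def investigate(incident: str, specialists: dict[str, Specialist]) -> dict[str, str]:
    """Run every specialist in parallel and collect findings by name."""
    with ThreadPoolExecutor(max_workers=len(specialists)) as pool:
        futures = {name: pool.submit(fn, incident) for name, fn in specialists.items()}
        # result() blocks until that specialist has finished.
        return {name: future.result() for name, future in futures.items()}


def summarize(findings: dict[str, str]) -> str:
    """Merge findings into one line, ordered by specialist name."""
    return "; ".join(f"{name}: {text}" for name, text in sorted(findings.items()))


# Placeholder specialists; each would be a separate agent in practice.
specialists: dict[str, Specialist] = {
    "release_history": lambda incident: f"checked releases for {incident}",
    "error_logs": lambda incident: f"scanned logs for {incident}",
    "metrics": lambda incident: f"reviewed dashboards for {incident}",
    "support_tickets": lambda incident: f"read tickets about {incident}",
}

findings = investigate("checkout outage", specialists)
print(summarize(findings))
```

The lead's job here reduces to two steps, delegating and merging, which is why the findings are keyed by specialist name: the merged summary stays traceable to whoever produced each part.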