A Real-Time AI Model

You have probably sat through a long wait while an AI model takes its time to answer your question. To end that wait, Inception Labs’ new reasoning model, Mercury 2, is now live. It works a little differently from other models: it uses diffusion to deliver quality responses at near-instant speed. In this article, we will look at what makes the Mercury 2 reasoning model unique and explore its potential.

A New Way of Thinking: Diffusion vs. Autoregression

Autoregressive decoding is the process most language models use today, including those from Google and OpenAI. They produce one token (a word or piece of a word) at a time. This works like a typewriter: each new word is tied to the words before it.

Although it works, it also has a bottleneck. Difficult questions require a chain of thoughts, and the model must work through them in sequence. This serial process limits speed and raises cost. The problem is especially pronounced in deep reasoning tasks.

The Mercury 2 reasoning model does it differently. It is among the first commercial diffusion language models. Rather than following a token-by-token approach, it starts with a noisy draft of the complete answer. Then it improves that draft through iterative refinement. Think of it as an editor rather than a typewriter. It reviews and revises the whole response at once and, as a result, can correct errors early in the process. Because the refinement happens in parallel, this approach is much faster.
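The difference in step count can be illustrated with a toy sketch. This is not Inception Labs' algorithm: a real diffusion language model uses a learned denoiser to revise every position, whereas the stand-in below simply copies tokens from a known target. The function names and the `tokens_per_pass` parameter are hypothetical, chosen only to show why filling several positions per pass needs fewer sequential steps than emitting one token at a time.

```python
import random

TARGET = "the quick brown fox jumps over the lazy dog".split()
MASK = "<mask>"


def autoregressive_decode(target):
    """Emit one token per step, left to right (the typewriter)."""
    output, steps = [], 0
    for token in target:
        # A real model would predict this token from the prefix in `output`.
        output.append(token)
        steps += 1
    return output, steps


def refine_in_parallel(target, tokens_per_pass=3, seed=0):
    """Start from a fully masked draft and fill several positions per pass (the editor)."""
    rng = random.Random(seed)
    draft = [MASK] * len(target)
    passes = 0
    while MASK in draft:
        masked = [i for i, tok in enumerate(draft) if tok == MASK]
        for i in rng.sample(masked, min(tokens_per_pass, len(masked))):
            # Stand-in for the model's denoising prediction at position i.
            draft[i] = target[i]
        passes += 1
    return draft, passes


sequential_output, sequential_steps = autoregressive_decode(TARGET)
parallel_output, parallel_passes = refine_in_parallel(TARGET)
print(f"autoregressive steps: {sequential_steps}, refinement passes: {parallel_passes}")
```

Both functions end with the same nine-word sentence, but the sequential loop needs one step per token while the parallel one finishes in a handful of passes.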

This is not a new concept in AI. Diffusion models are already successful at generating images and videos. Inception Labs, a startup founded by academics from Stanford, UCLA, and Cornell, is now applying the technique to text, and it is working very well.

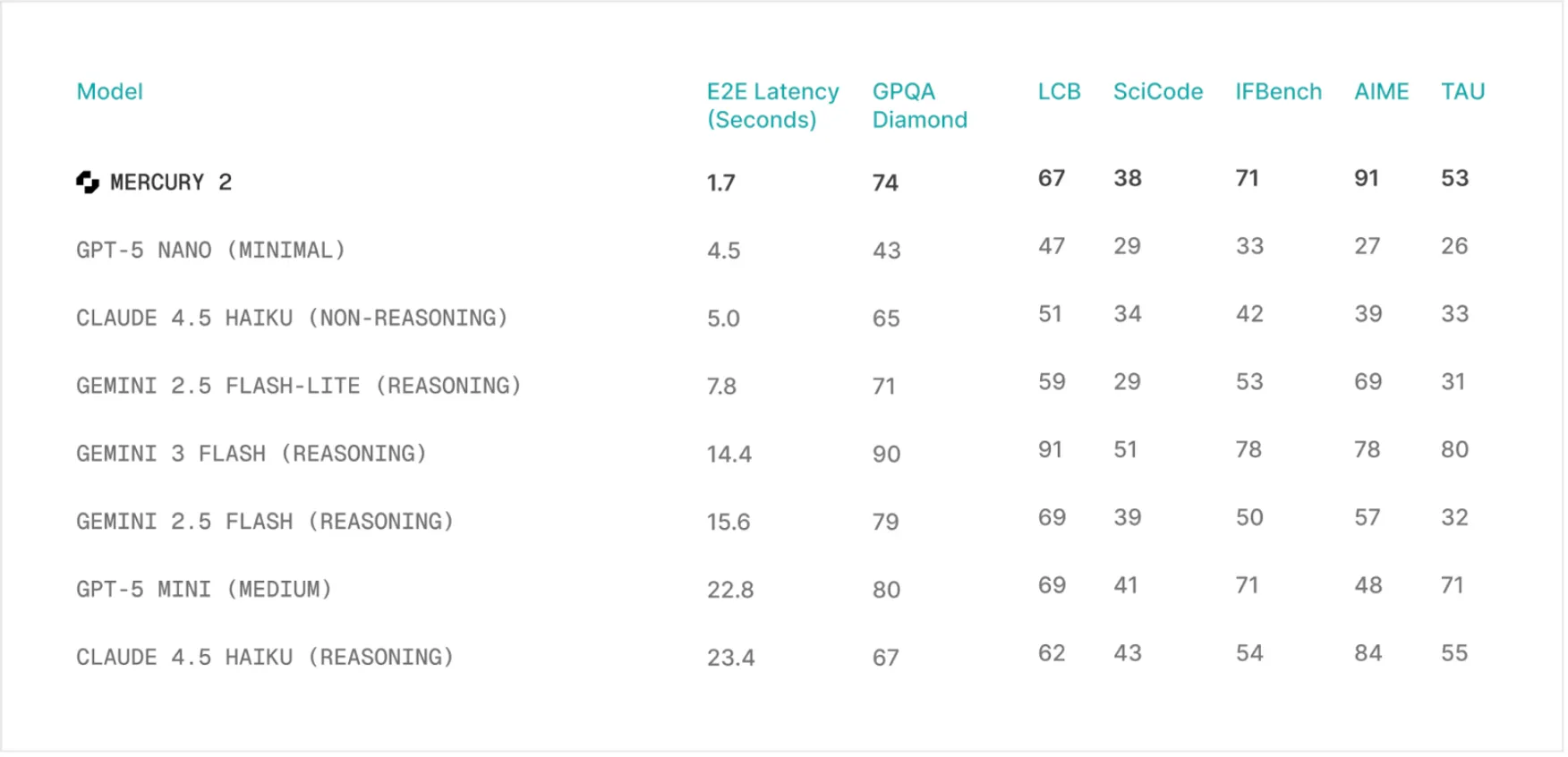

Speed and Cost: The Mercury 2 Advantage

Speed is the most outstanding quality of the Mercury 2 reasoning model. It reaches an output of about 1,000 tokens per second in benchmarks. In comparison, popular models such as Claude Haiku 4.5 and GPT-5 mini run at around 89 and 71 tokens per second, respectively. That makes Mercury 2 more than ten times faster. This is not just a figure on a chart; it makes a difference in the real world. On complex tasks, some models can take several seconds to answer a query. Meanwhile, Mercury 2 can answer in less than two seconds.
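To make the throughput figures concrete, here is a minimal sketch that converts them into generation time for a response of a given length. It uses only the tokens-per-second numbers quoted above; the 1,000-token response length and the function names are illustrative assumptions, and the calculation ignores network latency and any time spent before the first token.

```python
# Approximate throughput figures quoted in the article (tokens per second).
TOKENS_PER_SECOND = {
    "Mercury 2": 1000,
    "Claude Haiku 4.5": 89,
    "GPT-5 mini": 71,
}


def generation_seconds(num_tokens, tokens_per_second):
    """Time to emit `num_tokens` at a steady rate, ignoring other latency."""
    return num_tokens / tokens_per_second


def speedup_over(baseline_tps, fast_tps=TOKENS_PER_SECOND["Mercury 2"]):
    """How many times faster `fast_tps` is than `baseline_tps`."""
    return fast_tps / baseline_tps


for name, tps in TOKENS_PER_SECOND.items():
    seconds = generation_seconds(1000, tps)
    print(f"{name}: {seconds:.1f} s per 1,000 tokens ({speedup_over(tps):.1f}x slower than Mercury 2)")
```

At these rates the gap works out to roughly 11x over Claude Haiku 4.5 and 14x over GPT-5 mini.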

This speed does not come at a premium. In fact, Mercury 2 costs less than its competitors. It is priced at $0.25 per million input tokens and $0.75 per million output tokens. That makes a response about 2.5 times cheaper than with GPT-5 mini and more than 6.5 times cheaper than with Claude Haiku 4.5. This speed, coupled with low cost, enables new use cases, especially applications built on real-time interaction and complex AI agent loops.
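The per-request cost follows directly from the two per-million-token prices. The sketch below assumes the prices are in US dollars and uses a hypothetical request size of 8,000 input tokens and 1,000 output tokens; the function name is illustrative.

```python
# Prices quoted in the article, assumed to be US dollars per million tokens.
INPUT_PRICE_PER_MILLION = 0.25
OUTPUT_PRICE_PER_MILLION = 0.75


def request_cost(input_tokens, output_tokens):
    """Cost of one request given its input and output token counts."""
    total = (
        input_tokens * INPUT_PRICE_PER_MILLION
        + output_tokens * OUTPUT_PRICE_PER_MILLION
    )
    return total / 1_000_000


# Hypothetical request: a long prompt with a medium-length answer.
cost = request_cost(input_tokens=8_000, output_tokens=1_000)
print(f"cost per request: ${cost:.5f}")
print(f"cost per 1,000 such requests: ${cost * 1000:.2f}")
```

An agent loop that makes many calls per task multiplies this figure, which is why low per-token pricing matters for the use cases mentioned above.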

Quality and Performance

Speed is only useful if the answers are correct. In this regard, the Mercury 2 reasoning model holds its own. It is on par with other well-known models on quality benchmarks. It scored 91.1 on the AIME 2025 math benchmark. It also scored well on GPQA, a graduate-level science benchmark, and on IFBench, an instruction-following benchmark. These scores show that the error-correcting nature of the diffusion process does not sacrifice quality for speed.

The model also supports a 128K context window, tool use, and JSON output, which makes it a practical tool for developers. These features are essential for building advanced applications that need high-performance reasoning. Its ability to process large amounts of information and communicate with other software makes it well suited to real-time voice assistants, search tools, and coding assistants.

Getting Started with the Mercury 2 Reasoning Model

Seeing is believing. Mercury 2 is best understood by trying it. You can chat with the model directly or sign up for API access to build your own applications.

A good way to explore the model's unique capabilities is to vary its reasoning_effort setting on a simple, practical problem.

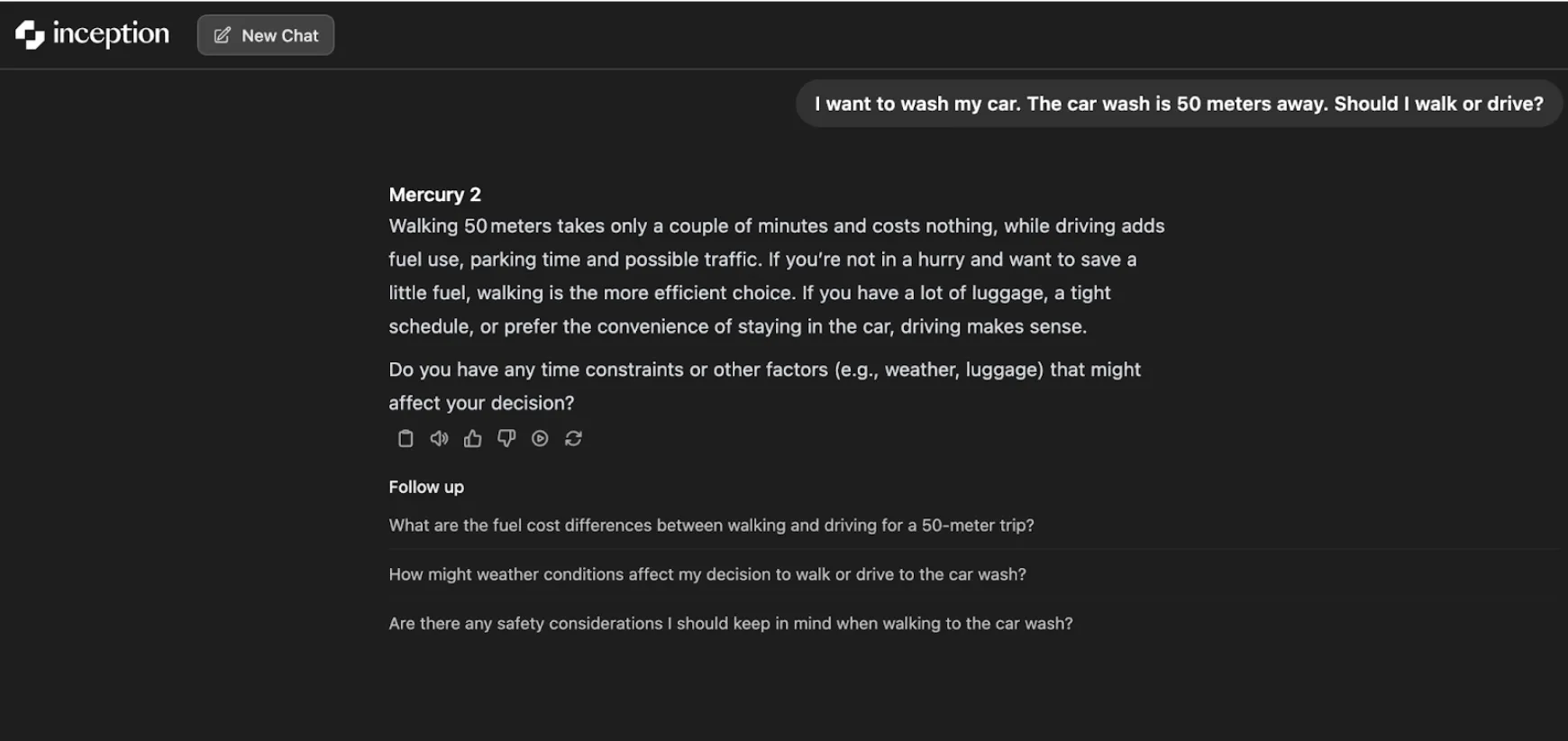

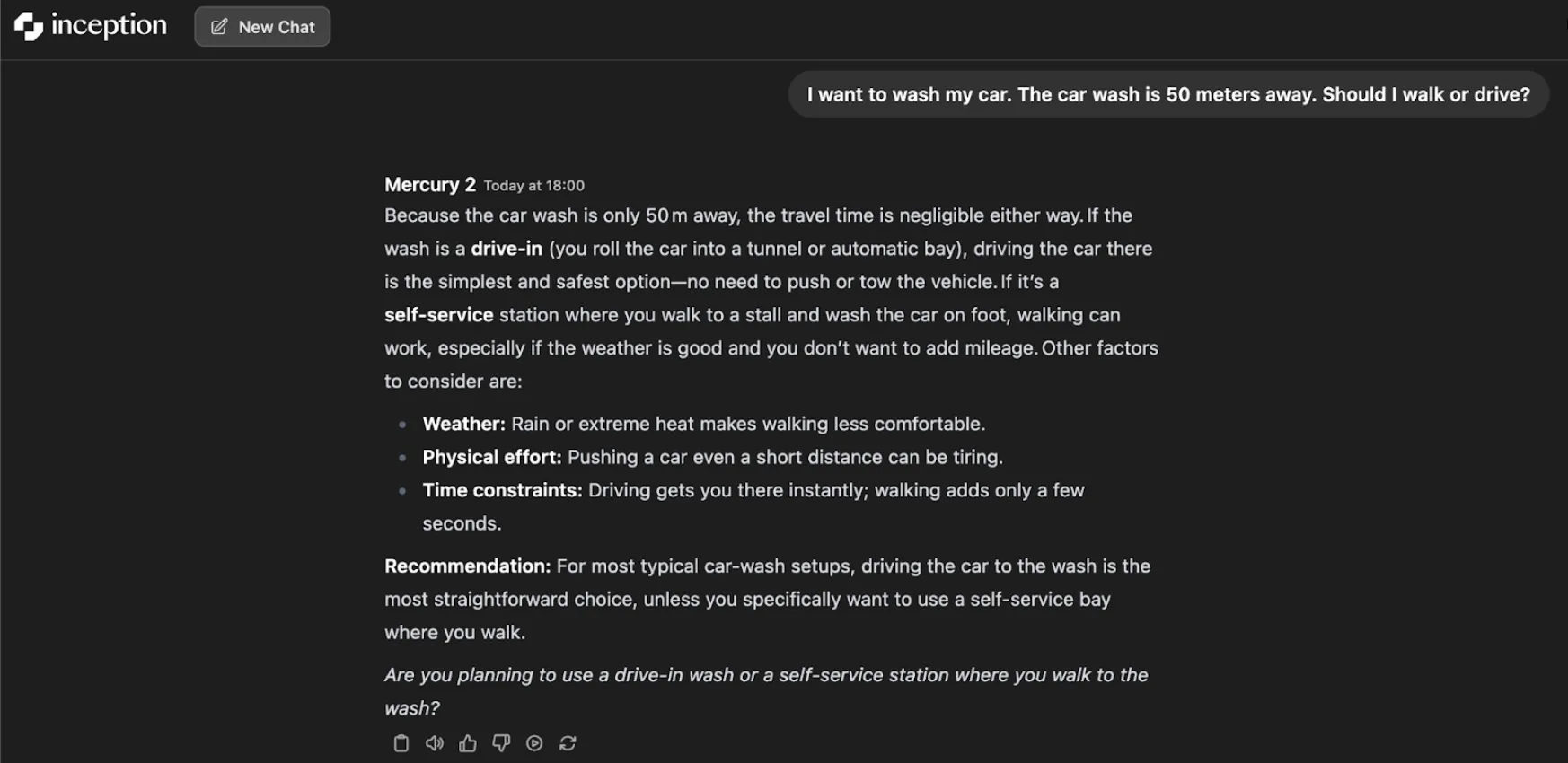

Car Wash Test

Ask the model the following question:

“I want to wash my car. The car wash is 50 meters away. Should I walk or drive?”

With low reasoning effort, the model gives a logical, simple answer: walking is cheap and takes only a few minutes. It rightly sees walking as the most efficient option for short distances.

However, the more reasoning effort you allow, the more nuanced and realistic the model's answer becomes. It considers the type of car wash. At a drive-through car wash, the only sensible thing to do is drive. At a self-service station, walking may be an option as long as the conditions are good. Higher reasoning effort produces a far more useful recommendation: for many car washes, driving is the easiest option.

This simple experiment shows how the model's iterative refinement process can reach a deeper understanding when it is given more time to think.
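The comparison above can be set up programmatically by sending the same question at two effort levels. The sketch below only builds the request bodies and does not call any API. It assumes an OpenAI-style chat payload; the model identifier `mercury-2` and the effort values `low` and `high` are hypothetical, so check the Inception Labs documentation for the real names before use.

```python
import json

CAR_WASH_PROMPT = (
    "I want to wash my car. The car wash is 50 meters away. "
    "Should I walk or drive?"
)


def build_request(prompt, reasoning_effort):
    """Build a chat-style request body (assumed shape, not a confirmed API)."""
    return {
        "model": "mercury-2",  # hypothetical model identifier
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": reasoning_effort,  # assumed values: "low", "high"
    }


# Same question, two effort levels, so the answers can be compared side by side.
requests_to_compare = [
    build_request(CAR_WASH_PROMPT, effort) for effort in ("low", "high")
]
print(json.dumps(requests_to_compare, indent=2))
```

Keeping the prompt fixed and changing only `reasoning_effort` isolates the effect of the setting on the answer.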

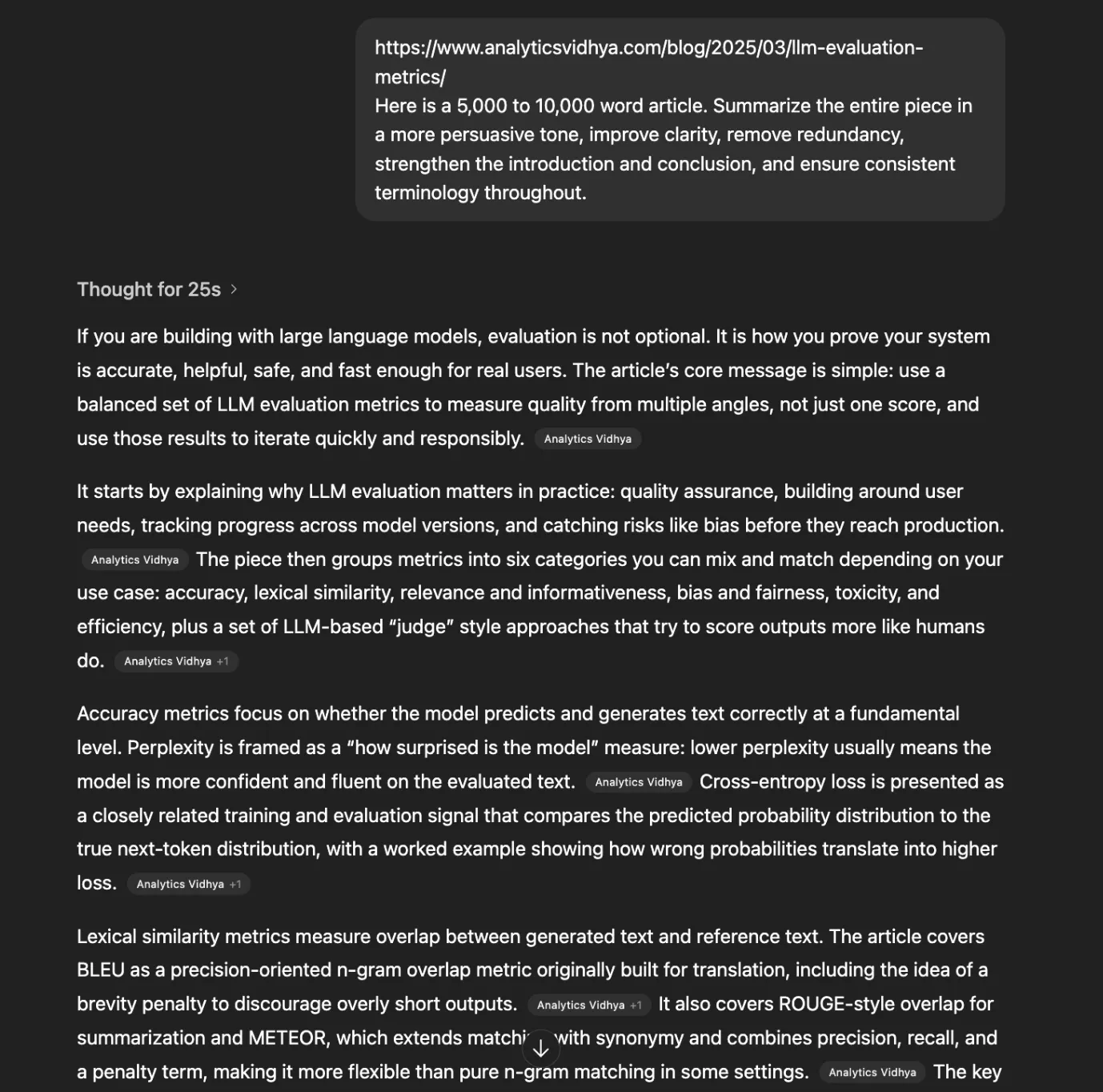

Topic Summary Test

Here is my previous article about LLM evaluation metrics, which is too long to read quickly. Let’s try to summarize it and see how long that takes.

Prompt:

Here is a 5,000 to 10,000 word article. Summarize the entire piece in a persuasive tone, improve clarity, eliminate repetition, strengthen the introduction and conclusion, and ensure consistency throughout.

When we ran this prompt on Mercury 2, it quickly processed the article and returned results in less than 3 seconds.

Out of curiosity, I tried the same prompt on ChatGPT. It took about 25 seconds just to think about what to do and how to do it, and another 10 seconds to generate the answer.
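A comparison like this is easier to repeat with a small timing helper. The sketch below measures wall-clock time around any function that takes a prompt and returns text. `fake_model` is a stand-in that sleeps briefly; in practice you would replace it with your own client call to the model you want to measure.

```python
import time


def time_call(generate, prompt):
    """Return the response and the wall-clock seconds the call took."""
    start = time.perf_counter()
    response = generate(prompt)
    elapsed = time.perf_counter() - start
    return response, elapsed


def fake_model(prompt):
    """Stand-in for a real model client; sleeps to simulate latency."""
    time.sleep(0.05)
    return f"summary of {len(prompt.split())} words"


response, elapsed = time_call(fake_model, "a short test prompt")
print(f"{response!r} returned in {elapsed:.2f} s")
```

Running each model several times and comparing the median gives a fairer picture than a single run, since network conditions vary.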

Conclusion: A Glimpse of the Future of AI

The Mercury 2 reasoning model is not just another player in the crowded AI market. It represents a potential shift in how AI systems are designed and how we interact with them. It tackles the fundamental problem of latency and, in doing so, opens the door to a new generation of truly responsive applications. Soon, the days of waiting for AI to think may be over. With models like Mercury 2, the future of AI looks fast, cheap, and remarkably capable.

Frequently Asked Questions

What is the Mercury 2 reasoning model?

The Mercury 2 reasoning model is a new large language model from Inception Labs that uses a diffusion-based approach to generate text at high speed.

How does it differ from other language models?

Instead of generating text word by word, Mercury 2 creates a draft of the full response and refines it in parallel, which makes it much faster.

How fast is Mercury 2?

Mercury 2 can generate text at around 1,000 tokens per second, which is more than ten times faster than comparable models.

Is its quality comparable to other models?

Yes. In quality benchmarks, Mercury 2 performs competitively with other top models in areas such as math, science, and instruction following.

How can I try Mercury 2?

You can chat with the model directly or sign up for early access to the API through the Inception Labs website.