Composio Open Sources Agent Orchestrator to Help AI Developers Build Multi-Agent Workflows that Scale Beyond Traditional ReAct Loops

A year ago, AI devs relied on the ReAct (Reason + Act) pattern: a simple loop in which the LLM thinks, chooses a tool, and acts. But as any software engineer who has tried to move these agents into production knows, simple loops are brittle. They miss things, lose track of complex goals, and struggle with ‘tool noise’ when faced with too many APIs.

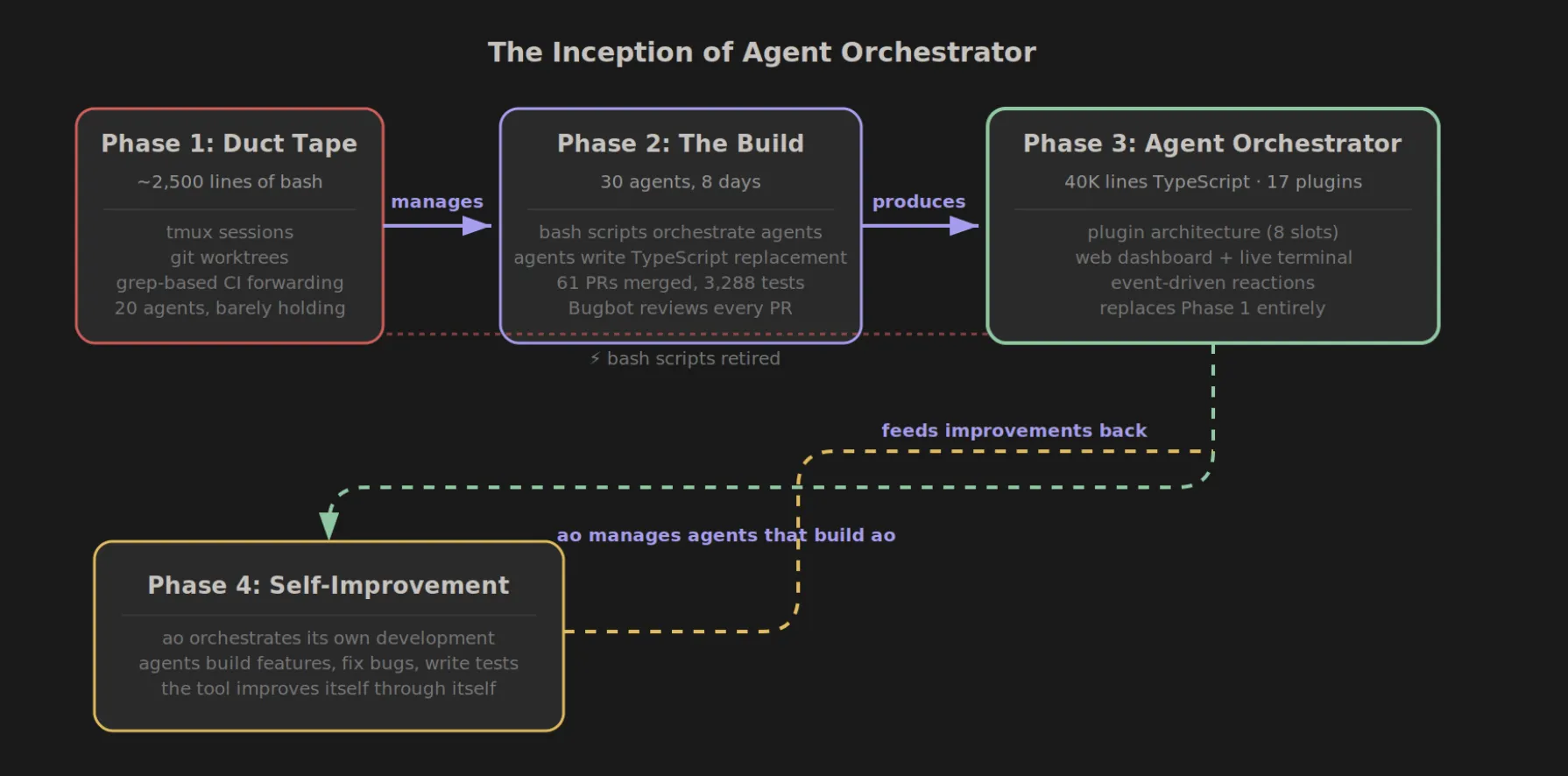

The Composio team is moving the goalposts by open-sourcing Agent Orchestrator. The framework is designed to move the industry from ‘Agentic Loops’ to ‘Agentic Workflows’: structured, robust, and verifiable systems that treat AI agents as reliable software modules rather than unpredictable chatbots.

Architecture: Planner vs. Executor

The core philosophy behind Agent Orchestrator is a strict separation of concerns. In a traditional setup, a single LLM is expected to plan the strategy and execute the technical details at the same time. This often leads to ‘greedy’ decision making, where the model skips important steps.

Composio’s Orchestrator introduces a two-layer architecture:

- Planner: This layer is responsible for task decomposition. It takes a high-level goal, such as ‘Find all the most important GitHub issues and summarize them on the Insights page’, and breaks it down into a sequence of verifiable subtasks.

- Executor: This layer handles the actual interaction with the tools. By isolating execution, the system can use specialized prompts or even different models to handle API interaction without cluttering the global planning logic.
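The split between the two layers can be sketched in a few lines of Python. This is a minimal illustration of the idea, not Composio's API: `Subtask`, `plan`, `execute`, `run`, and the tool names in `TOOLS` are all hypothetical, and the planner returns a fixed plan where a real one would call an LLM.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Subtask:
    """One verifiable step produced by the planner (hypothetical shape)."""
    name: str
    tool: str
    done: bool = False


def plan(goal: str) -> List[Subtask]:
    """Planner layer: decompose a goal into ordered subtasks.

    A real planner would ask an LLM; this stand-in returns a fixed plan
    so the example stays deterministic.
    """
    return [
        Subtask(name="fetch_issues", tool="github.list_issues"),
        Subtask(name="summarize", tool="llm.summarize"),
        Subtask(name="publish", tool="pages.write"),
    ]


def execute(task: Subtask, tools: Dict[str, Callable[[dict], object]],
            state: dict) -> dict:
    """Executor layer: run exactly one subtask with exactly one tool."""
    result = tools[task.tool](state)
    task.done = True
    # Return a new state so earlier results are never mutated in place.
    return {**state, task.name: result}


# Fake tools standing in for real API calls (assumed names).
TOOLS: Dict[str, Callable[[dict], object]] = {
    "github.list_issues": lambda s: ["#12 crash on login", "#40 data loss"],
    "llm.summarize": lambda s: f"{len(s['fetch_issues'])} issues need attention",
    "pages.write": lambda s: {"written": s["summarize"]},
}


def run(goal: str) -> Tuple[dict, List[Subtask]]:
    """Plan once, then hand each subtask to the executor in order."""
    state: dict = {"goal": goal}
    tasks = plan(goal)
    for task in tasks:
        state = execute(task, TOOLS, state)
    return state, tasks


final_state, tasks = run("Summarize the most important GitHub issues")
print(final_state["publish"])
```

Because the executor only ever sees one subtask and one tool, each step can be checked on its own (here via the `done` flag) before the next one starts.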

Solving ‘Tool Noise’

The most significant bottleneck in agent performance is often the context window. If you give an agent access to 100 tools, the definitions for those tools consume thousands of tokens, confusing the model and increasing the likelihood of incorrect parameters.

Agent Orchestrator addresses this with Managed Tools. Instead of exposing every capability at once, the Orchestrator dynamically delivers only the tool definitions the agent needs for the current step of the workflow. This ‘Just-in-Time’ context management keeps the LLM's signal-to-noise ratio high, which is intended to raise tool-calling success rates.
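To make the ‘Just-in-Time’ idea concrete, here is a small Python sketch of per-step tool routing. It assumes a hypothetical registry (`TOOL_REGISTRY`) and a hypothetical step-to-tool mapping (`STEP_TOOLS`); neither reflects Composio's actual data structures, and the serialized size is only a rough proxy for token count.

```python
import json
from typing import Dict, List

# Hypothetical registry: tool name -> schema-like definition.
TOOL_REGISTRY: Dict[str, dict] = {
    "github.list_issues": {
        "description": "List issues for a repository.",
        "parameters": {"repo": "string", "label": "string"},
    },
    "llm.summarize": {
        "description": "Summarize a list of text items.",
        "parameters": {"items": "array"},
    },
    "pages.write": {
        "description": "Write content to a page.",
        "parameters": {"page_id": "string", "body": "string"},
    },
}

# Hypothetical mapping from workflow step to the tools that step may use.
STEP_TOOLS: Dict[str, List[str]] = {
    "fetch_issues": ["github.list_issues"],
    "summarize": ["llm.summarize"],
    "publish": ["pages.write"],
}


def all_tools() -> List[dict]:
    """Naive approach: expose every definition on every call."""
    return [{"name": name, **spec} for name, spec in TOOL_REGISTRY.items()]


def tools_for_step(step: str) -> List[dict]:
    """Just-in-time approach: expose only what the current step needs."""
    return [
        {"name": name, **TOOL_REGISTRY[name]}
        for name in STEP_TOOLS.get(step, [])
    ]


routed = tools_for_step("summarize")
# Compare how much text each approach would place in the prompt.
print(len(json.dumps(routed)), "vs", len(json.dumps(all_tools())))
```

With three tools the saving is small, but the gap grows linearly with the size of the registry while the routed payload stays roughly constant per step.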

State Management and Observability

One of the most frustrating aspects of early-stage AI engineering is the ‘black box’ nature of agents. When an agent fails, it is often hard to tell whether the failure came from a bad plan, a failed API call, or lost context.

Agent Orchestrator introduces Stateful Orchestration. Unlike stateless loops, which effectively ‘start from scratch’ or rely on messy conversation histories at every iteration, the Orchestrator maintains a structured state machine.

- Resilience: If a tool call fails (e.g., a 500 error from a third-party API), the Orchestrator can trigger a specific branch that handles the error without crashing the entire workflow.

- Traceability: Every decision point, from the initial plan to the final execution, is logged. This provides the level of visibility needed for production-grade software debugging.
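The two properties above can be illustrated with a tiny state machine in Python. This is a sketch under stated assumptions, not the framework's implementation: `ToolError`, `WorkflowState`, and `run_workflow` are hypothetical names, and the ‘error branch’ here is simply a bounded retry.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


class ToolError(Exception):
    """Raised by a tool wrapper when an API call fails (assumed type)."""

    def __init__(self, status: int):
        super().__init__(f"tool returned HTTP {status}")
        self.status = status


@dataclass
class WorkflowState:
    step_index: int = 0            # which step the workflow is on
    status: str = "running"        # running | done | failed
    trace: List[dict] = field(default_factory=list)  # one entry per attempt


def run_workflow(steps: List[Tuple[str, Callable[[], None]]],
                 max_retries: int = 1) -> WorkflowState:
    state = WorkflowState()
    while state.step_index < len(steps):
        name, action = steps[state.step_index]
        for attempt in range(max_retries + 1):
            try:
                action()
                state.trace.append(
                    {"step": name, "attempt": attempt, "event": "ok"})
                break
            except ToolError as err:
                # Error branch: record the failure and retry this step only.
                state.trace.append(
                    {"step": name, "attempt": attempt,
                     "event": "error", "status": err.status})
        else:
            # Retries exhausted: stop, but keep step_index so a caller
            # could resume from the failed step later.
            state.status = "failed"
            return state
        state.step_index += 1
    state.status = "done"
    return state


calls = {"n": 0}


def flaky_api() -> None:
    """Fails with a 500 on the first call, then succeeds."""
    calls["n"] += 1
    if calls["n"] == 1:
        raise ToolError(500)


result = run_workflow([("fetch", flaky_api), ("publish", lambda: None)])
for event in result.trace:
    print(event)
```

The trace records the failed attempt and the successful retry as separate events, which is the kind of record that makes it possible to tell a bad plan from a failed API call after the fact.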

Key Takeaways

- Decoupling Planning from Execution: The framework departs from simple ‘Reason + Act’ loops by separating the Planner (which breaks goals down into smaller tasks) from the Executor (which handles API calls). This reduces ‘greedy’ decision making and improves task accuracy.

- Dynamic Tool Routing (Context Management): To prevent LLM ‘noise’ and the errors it causes, the Orchestrator feeds the model only the tool definitions relevant to the current task. This ‘Just-in-Time’ context management keeps the signal-to-noise ratio high even when managing 100+ APIs.

- Central Stateful Orchestration: Unlike stateless agents that rely on unstructured conversation history, the Orchestrator maintains a structured state machine. This enables ‘Restart-on-failure’ capabilities and provides a clear audit trail for production-grade AI debugging.

- Built-in Error Handling and Resilience: The framework introduces built-in ‘Correction Loops’. If a tool call fails or returns an error (such as a 404 or 500), the Orchestrator can trigger specific recovery logic without losing sight of the overall goal.
