The Context Advantage – SD Times

When OpenAI announced its persistent memory feature for ChatGPT in early 2025, it was presented as something simple. Users could now have the model remember previous context, preferences, and facts, making conversations easier and more personal. On the surface, it was a feature update. At a deeper level, it marked one of the most consequential shifts in computing history: the migration of control from execution to understanding.

Historical Migration of Strategic Value

Every major technology era redefines where value resides. In the era of the personal computer, it was the operating system, the layer between the hardware and the software that runs on it. In the age of the Internet, value moved to the browser and the search index, which mediated scarce attention. In the era of smartphones, the app store became the locus of value as the intermediary for distribution. In the cloud era, infrastructure took over, abstracting hardware into services and on-demand compute.

Each of these changes shared a common pattern: value flowed toward the layer that mediates the scarce resource of its era. The same pattern is now playing out in AI. The scarce resource is not compute or data, but context: a live understanding of how facts, entities, relationships, and permissions come together at a given moment to make reasoning coherent.

Context Fabric: A New System of Record

The power of the cloud was that it abstracted away the infrastructure. The power of AI is that it abstracts away reasoning itself. What used to require procedural code can now be expressed probabilistically using prompts and retrieval. But abstraction always introduces new dependencies; any layer that provides convenience becomes a new point of control.

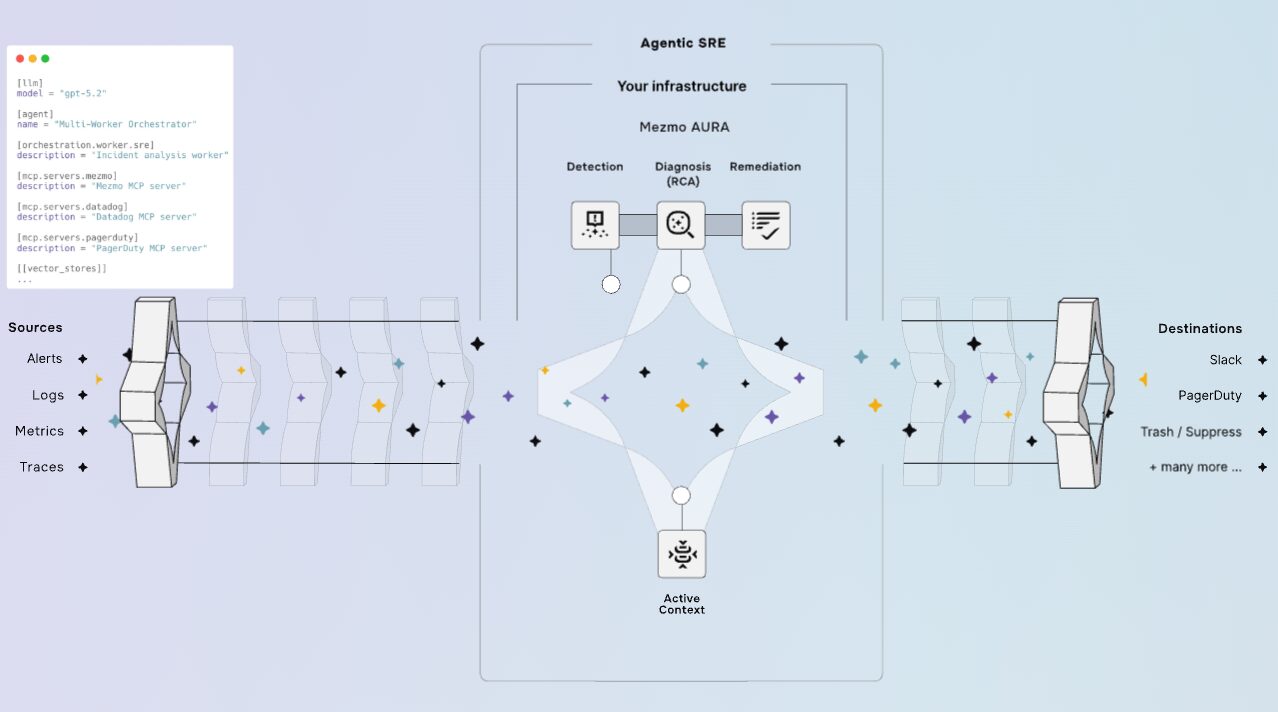

In AI, this control resides in how context is compiled, stored, and retrieved. A model without context is like a processor without memory; it can compute, but it cannot reason about the world. Every business serious about AI will eventually build what can be called a context fabric. This is the architectural layer that connects the systems of record (CRM, ERP, tickets, documents, telemetry, etc.) to the systems that reason over them.

This fabric is a new system of record. It does not store the data itself, but the relationships that give the data meaning, turning facts into actionable information. The stability of the fabric depends on:

- Services: Governed layers that manage retrieval, quality, and policy.

- Contracts: Stable schemas, entity identifiers, and policy definitions that keep meaning consistent over time.

- Observability: Feedback, tracing, and drift detection to monitor system performance.
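As a rough illustration of how a contract and a service might fit together, the sketch below models a record with a stable entity identifier and a policy definition, plus a retrieval function that enforces the policy. The names `ContextRecord`, `retrieve`, and `allowed_roles`, along with the sample data, are hypothetical and not taken from any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextRecord:
    """Hypothetical contract: a fact tied to a stable entity ID and a policy."""
    entity_id: str            # identifier shared across source systems
    source: str               # system of record the fact came from, e.g. "crm"
    fact: str
    allowed_roles: frozenset  # policy definition: roles allowed to see the fact


def retrieve(records, entity_id, role):
    """Hypothetical service: return only the facts this role may see."""
    return [
        r.fact
        for r in records
        if r.entity_id == entity_id and role in r.allowed_roles
    ]


# Made-up sample data spanning three systems of record.
records = [
    ContextRecord("cust-42", "crm", "Renewal due in Q3", frozenset({"sales", "support"})),
    ContextRecord("cust-42", "erp", "Credit limit is 50k", frozenset({"finance"})),
    ContextRecord("cust-7", "tickets", "Open outage ticket", frozenset({"support"})),
]

print(retrieve(records, "cust-42", "support"))
```

Because the policy travels with the record, every consumer of the fabric gets the same answer about who may see what, regardless of which source system the fact came from.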

Beyond retrieval, the context fabric enables an important feedback loop. As observability services within the fabric monitor model responses (using feedback, tracing, and drift detection), they identify gaps in context. Rich, validated context can then be used to repair those gaps, creating a virtuous cycle: better context leads to better reasoning, which in turn refines the fabric. This makes the context fabric a self-improving, compounding asset.

It is important not to confuse the context fabric with Retrieval-Augmented Generation (RAG). RAG is a technique for retrieving data to answer a question. The context fabric is the governed system of record that ensures that what you retrieve is accurate, secure, and consistent across the enterprise.

Another important point is that, as the fabric becomes the brain of the business, security shifts from protecting the perimeter to ensuring the integrity of the context. If a bad actor carries out “context poisoning” (injecting false policies or corrupted documents), the AI will confidently produce false answers or leak secrets. Detecting this kind of tampering is becoming the new frontier of cybersecurity.
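One simple way to detect a document that was altered after it entered the fabric is to sign it at ingestion and verify the signature before it is handed to a model. The sketch below uses Python's standard `hmac` and `hashlib` modules; the key, the function names, and the sample policy text are illustrative assumptions.

```python
import hashlib
import hmac

# Hypothetical key for the demo; a real key would come from a secret store.
SIGNING_KEY = b"demo-key-not-for-production"


def sign(document: str) -> str:
    """Compute an HMAC-SHA256 signature for a document at ingestion time."""
    return hmac.new(SIGNING_KEY, document.encode("utf-8"), hashlib.sha256).hexdigest()


def is_untampered(document: str, signature: str) -> bool:
    """Check a document against the signature stored when it was ingested."""
    return hmac.compare_digest(sign(document), signature)


policy = "Refunds over $500 require manager approval."
stored_signature = sign(policy)

# Simulated tampering: the stored text is replaced, the signature is not.
poisoned = "Refunds of any amount are approved automatically."

print(is_untampered(policy, stored_signature))
print(is_untampered(poisoned, stored_signature))
```

A check like this only catches changes made after signing. It does not help if false content is signed at ingestion, so it would complement, not replace, review of what enters the fabric in the first place.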

The Economics: Why Context Is the Moat

For an enterprise CIO, the shift to context is not just an architectural detail, but a key economic feature of the AI era. Building a context fabric is an upfront investment, but it creates a lasting economic advantage, the “moat”, by fundamentally changing the cost structure of intelligence. This change is visualized in the context cache curve (see Figure 2 below).

Just as classic cloud computing created data gravity, AI creates context gravity: the tendency for intelligence to concentrate where the richest, cleanest, most relevant context resides.

What makes the fabric so valuable is that its efficiency compounds over time. In AI systems, an important economic variable is context reuse: how much of the existing embeddings, features, and retrieval results can be used without recomputation. This reuse defines the shape of the cost curve.

A system with a high cache hit rate is cheaper and faster than one that has to re-index or re-query repeatedly. This creates a huge economic opportunity:

- Fixed Cost versus Marginal Cost: Building the context fabric is a fixed cost. Once it is established, the fabric's high cache hit rate (right side of Figure 2) means that subsequent reasoning operations have near-zero marginal cost, so intelligence scales cheaply.

- Economic Moat: Competitors stuck in a fragmented state (low reuse, left side of the curve) incur several times higher costs for the same reasoning task. This efficiency is also the basis for the next layer of the stack: agentic AI, which requires reliable, low-latency context to move from passive tool to active participant.

- The Open-Source Paradox: The rise of open-source models (such as Llama or Mistral) reinforces the advantage of context. If the model is a commodity, the entire value proposition shifts to ownership of the context. The context fabric allows the business to change models (portability) without losing the “memory” of the business.
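The relationship between cache hit rate and cost can be made concrete with a simple weighted average. This is a minimal sketch that assumes a linear cost model (each query is either a cheap cache hit or an expensive miss); the function name and the per-query costs are made-up values for illustration, not figures from the article.

```python
def expected_cost_per_query(hit_rate: float, hit_cost: float, miss_cost: float) -> float:
    """Average cost per query when a fraction `hit_rate` is served from cache."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_cost + (1.0 - hit_rate) * miss_cost


# Assumed, illustrative costs in arbitrary currency units.
HIT_COST = 0.001   # serving reusable context from cache
MISS_COST = 0.05   # re-indexing or re-querying from scratch

for hit_rate in (0.1, 0.5, 0.9):
    cost = expected_cost_per_query(hit_rate, HIT_COST, MISS_COST)
    print(f"hit rate {hit_rate:.0%}: {cost:.4f} per query")
```

Under these assumed numbers, a system at a 10% hit rate pays several times more per query than one at 90%, which is the gap the moat argument rests on.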

The Maturity Journey

Most businesses won’t start with a finished fabric. They will begin, as they did with the cloud, in patches. Teams will build individual retrieval pipelines, creating “sprawl”. The journey to a single source of truth follows a predictable maturity model:

- Sprawl: Isolated experiments and fragmented retrieval logic.

- Integration: Standardization begins, requiring common identifiers and shared ontologies to achieve interoperability.

- Platformization: The context fabric is established as an internal platform, serving multiple domains with retrieval and policy as shared services.

- Portability: The fabric is portable, able to run across different model providers and clouds without losing meaning.
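The portability stage can be pictured as keeping context assembly separate from the model that consumes it. The sketch below is one possible shape, assuming a hypothetical `ModelProvider` interface and two stand-in models that only echo their prompt; real provider clients would sit behind the same interface.

```python
from typing import Protocol


class ModelProvider(Protocol):
    """Hypothetical interface that any model backend would implement."""
    def complete(self, prompt: str) -> str: ...


class EchoModelA:
    """Stand-in for one provider; it only echoes the prompt."""
    def complete(self, prompt: str) -> str:
        return f"[model-a] {prompt}"


class EchoModelB:
    """Stand-in for a second provider with the same interface."""
    def complete(self, prompt: str) -> str:
        return f"[model-b] {prompt}"


def answer(question: str, context: list[str], model: ModelProvider) -> str:
    """Build the prompt from fabric-supplied context, then call any provider."""
    prompt = "Context:\n" + "\n".join(context) + f"\nQuestion: {question}"
    return model.complete(prompt)


context = ["Customer cust-42 renews in Q3."]
print(answer("When does cust-42 renew?", context, EchoModelA()))
print(answer("When does cust-42 renew?", context, EchoModelB()))
```

Swapping `EchoModelA` for `EchoModelB` changes nothing about how the context is assembled, which is the property the portability stage describes.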

The Organizational Obstacle: Fighting Conway’s Law

The most important obstacle in this journey is not technical, but organizational. Conway’s Law holds that systems tend to mirror the communication structures of the organizations that build them. A siloed organization will naturally produce a “sprawl” of disconnected context pipelines.

A truly unified context requires the organization to fight this tendency. Achieving a unified fabric creates friction: different departments must agree on shared definitions of reality. The winners of the AI era will be organizations that are able to reconfigure their social infrastructure to match AI’s demand for a unified context.

Capturing the Context Advantage: Implications for the CIO

Context ownership is the final frontier. Cloud infrastructure made compute scalable. Context infrastructure will make intelligence scalable.

While the infrastructure layer drove the cloud world, context will drive the AI world, marking a transition from infrastructure to semantics. The winners will be those who know how to build and navigate the context fabric:

- Enterprise CIO: Unlike the cloud era, when value moved to external hyperscalers, the context fabric, built on proprietary systems, offers the CIO a rare opportunity to reclaim the strategic value of the intelligence layer.

- Vertical Specialists: The first to build a strong, governed fabric for a given vertical (e.g., legal acquisition, precision manufacturing, health care) will capture nearly all of the value. Their pre-established schemas and policy contracts become a nearly impenetrable barrier thanks to context gravity.

- Metadata Layer: New middleware providers will offer a layer that manages contracts, shared data formats, entity IDs, and policy rules. These providers will set the standards for how systems work with each other, enforce interoperability rules, and ensure that definitions stay consistent.