Liquid AI Releases LFM2.5-VL-450M: A 450M Parametric Language Vision Model with Bounding Box Prediction, Multilingual Support, and Sub-250ms Edge Inference

Liquid AI recently released the LFM2.5-VL-450M, an updated version of its previous LFM2-VL-450M vision language model. The new release introduces the prediction of the binding box, the following advanced instructions, the extended understanding of many languages, and the support of calling – all within the 450M parameter-a parameter designed to work directly on the edge hardware from embedded AI modules such as NVIDIA Jetson Orin, to small PC-PC APUs such as AMD Ryzen AI Max+ 395, similar to Snapdragon Samsung 8 inside the phone.

What is a Visual Language Model and Why Model Size Matters

Before diving in, it helps to understand what a visual language model (VLM) is. VLM is a model that can process both images and text together — you can send an image and ask questions about it in natural language, and it will respond. Most large VLMs require large GPU memory and cloud infrastructure to run. That’s a problem for real-world deployment scenarios like warehouse robots, smart glasses, or retail shelf cameras, where computing is limited and latency must be minimal.

The LFM2.5-VL-450M is Liquid AI’s answer to this hurdle: a model small enough to fit into edge hardware while supporting a reasonable set of vision and language capabilities.

Construction and Training

The LFM2.5-VL-450M uses the LFM2.5-350M as its language model backbone and the SigLIP2 NaFlex optimized for the 86M architecture as its optical encoder. The context window is 32,768 tokens with a vocabulary size of 65,536.

For image management, the model supports native resolution processing up to 512×512 pixels without upscaling, maintains a standard aspect ratio without distortion, and uses a tiling strategy that divides large images into non-overlapping 512×512 slices while including global context icon image text. Icon encoding is important: without it, tiling will give the model local patches that lack a sense of the whole scene. At runtime, users can tune the image token limit and tile count for speed/quality trading without retraining, which is useful when deploying to all hardware with different computing budgets.

The recommended production parameters from Liquid AI are temperature=0.1, min_p=0.15again repetition_penalty=1.05 in writing, and min_image_tokens=32, max_image_tokens=256again do_image_splitting=True vision input.

On the training side, Liquid AI scaled pre-training from 10T to 28T tokens compared to LFM2-VL-450M, followed by post-training using preference settings and reinforcement learning to improve baseline, instruction following, and overall fidelity across vision language tasks.

New Power Over the LFM2-VL-450M

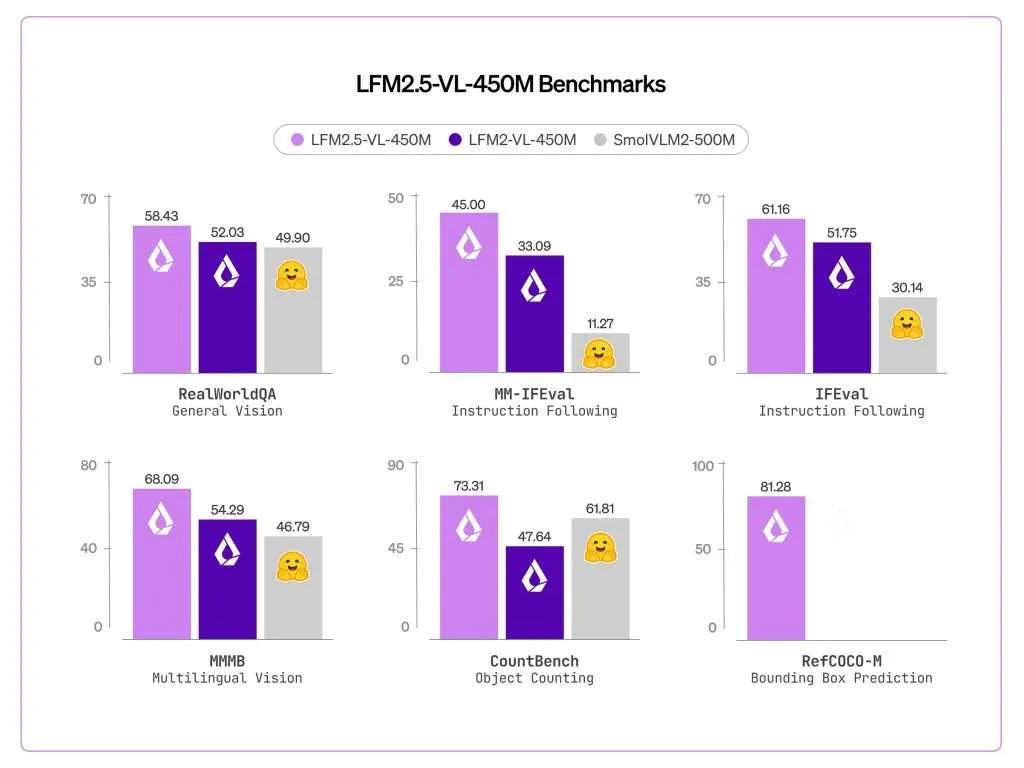

The most important addition is the prediction of the bounding box. The LFM2.5-VL-450M scored 81.28 on the RefCOCO-M, up from zero on the previous model. RefCOCO-M is a visual benchmark that measures how accurately a model can detect an object in an image given a natural language description. Basically, the output of the model is structured JSON with standard coordinates that point to where things are in the scene – not just to describe what’s there, but also to find it. This is very different from pure image coding and enables the model to be applied directly to lines that need to be out of place.

Multilingual support has also been greatly improved. MMMB scores improved from 54.29 to 68.09, covering Arabic, Chinese, French, German, Japanese, Korean, Portuguese, and Spanish. This is compatible with global applications where local language information must be understood in conjunction with visual input, without requiring separate localization pipelines.

The following instructions have also improved. The MM-IFEval scores ranged from 32.93 to 45.00, which means that the model adheres more reliably to the constraints that were immediately given – for example, answering in a certain format or limiting the output to certain fields.

Function calling support for text-only input has also been added, as measured by BFCLv4 in 21.08, a capability that the previous model did not include. Function calling allows the model to be used in agent pipelines when it needs to invoke external tools – for example, to call a weather API or trigger an action in a downstream system.

Benchmark Performance

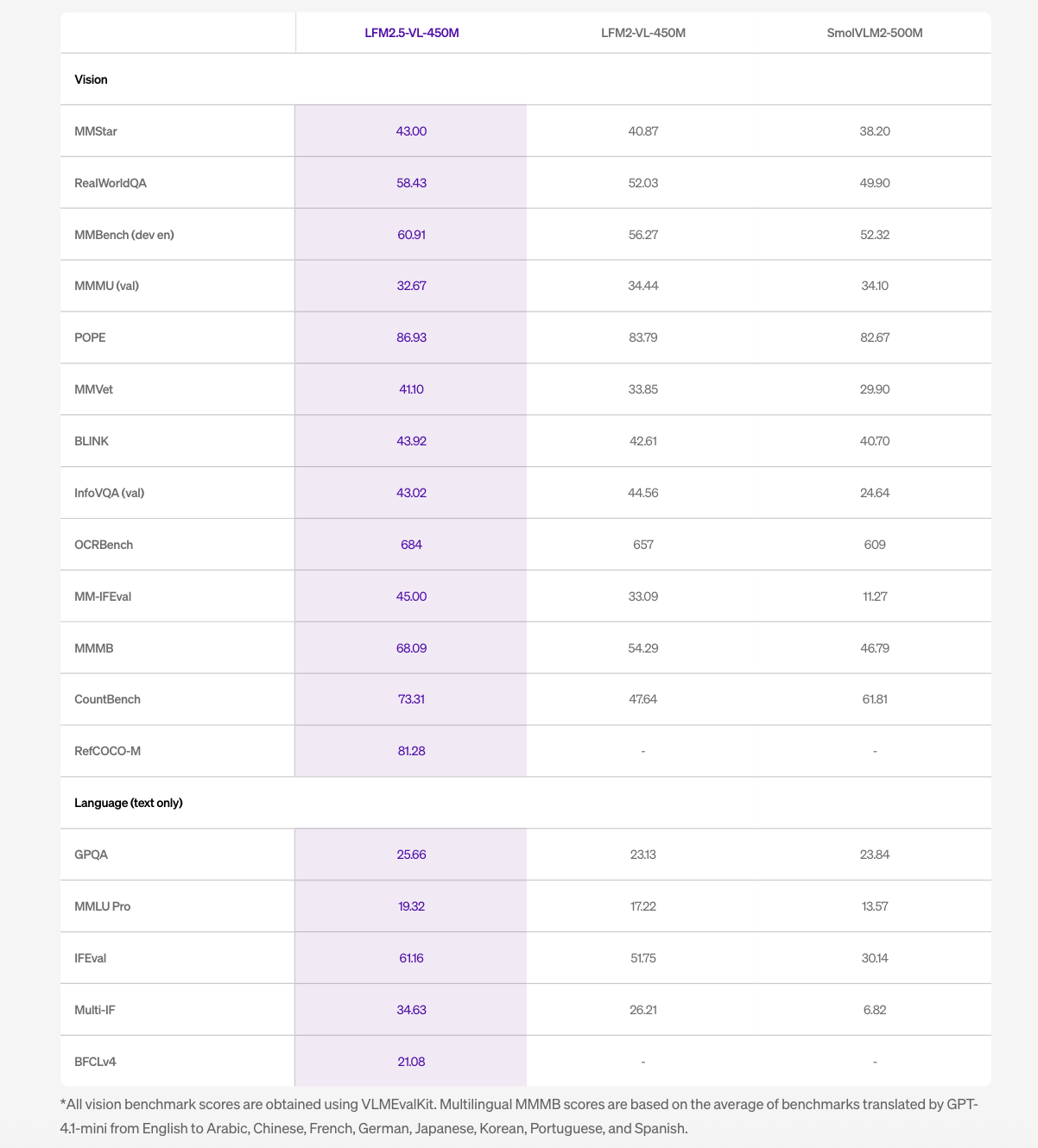

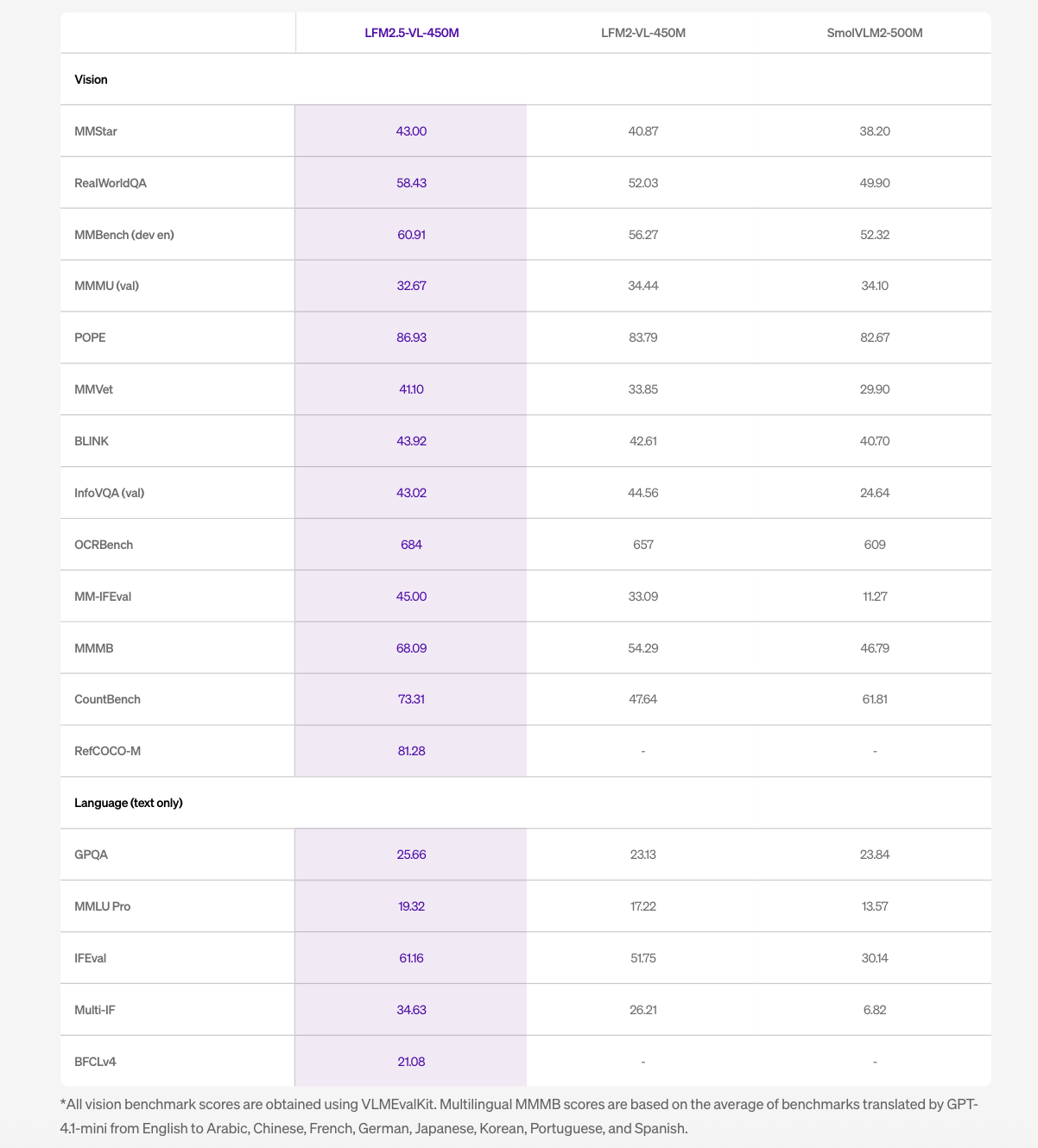

In all vision benchmarks tested using VLMEvalKit, the LFM2.5-VL-450M outperforms both the LFM2-VL-450M and the SmolVLM2-500M in most tasks. Notable scores include 86.93 in POPE, 684 in OCRBench, 60.91 in MMBench (dev en), and 58.43 in RealWorldQA.

Two benefits of the benchmark stand out beyond the headline numbers. The MMVet – which tests more open visual perception – improved from 33.85 to 41.10, a significant relative gain. CountBench, which evaluates the model’s ability to count objects in space, improved from 47.64 to 73.31, which is one of the biggest improvements relative to the table. InfoVQA is almost flat at 43.02 compared to 44.56 on the previous model.

In language-only benchmarks, IFEval improved from 51.75 to 61.16 and Multi-IF from 26.21 to 34.63. The model does not succeed in all tasks – MMMU (val) dropped slightly from 34.44 to 32.67 – and Liquid AI notes that the model is not well suited for data-intensive tasks or fine-grained OCR.

Edge Inference Performance

The LFM2.5-VL-450M with Q4_0 quantization works across the full range of target hardware, from embedded AI modules like the Jetson Orin to PC-PC APUs like the Ryzen AI Max+ 395 to mobile SoCs like the Snapdragon 8 Elite.

The latency numbers tell a clear story. On the Jetson Orin, the model processes a 256×256 image in 233ms and a 512×512 image in 242ms – staying well under 250ms for both resolutions. This makes it fast enough to process every frame in a 4 FPS video stream with full understanding of the visual language, not just detection. On the Samsung S25 Ultra, the latency is 950ms for 256×256 and 2.4 seconds for 512×512. On the AMD Ryzen AI Max+ 395, it’s 637ms at 256×256 and 944ms at 512×512 – less than one second of minimum resolution on both consumer devices, which keeps apps responsive.

Real-World Use Cases

The LFM2.5-VL-450M is best suited for real-world applications where low latency, compact results, and efficient semantic reasoning matter, including settings where offline operation or processing on the device is important for privacy.

In automated industries, computer-limited environments such as passenger cars, agricultural machinery, and warehouses often limit cognitive models to the output of the junction box. The LFM2.5-VL-450M goes further, providing scene-based insights in a single pass – enabling rich output for settings such as warehouse aisles, including worker actions, forklift movements, and inventory flow – while incorporating existing edge hardware such as Jetson Orin.

With wearables and always-on monitoring, devices like smart glasses, wearable assistants, dash cams, and security or industrial monitors can’t afford large vision stacks or consistent cloud streaming. A well-functioning VLM can produce coherent semantic results in situ, transforming raw video into useful structured insights while keeping computing demands low and preserving privacy.

In retail and e-commerce, tasks such as catalog import, visual search, product matching, and shelf compliance require more than item discovery, but rich visual insights are often too expensive to scale. The LFM2.5-VL-450M enables structured visual thinking for these workloads.

Key Takeaways

- The LFM2.5-VL-450M adds a binding box prediction for the first timeit scored 81.28 in RefCOCO-M compared to zero in the previous model, which allows the model to extract the spatial coordinates of the detected objects – not just to describe what it sees.

- Pre-training is scaled from 10T to 28T tokenscombined with post-training by enhancing preferences and reinforcement learning, driving consistent visual gains across visual tasks and language tasks over LFM2-VL-450M.

- The model uses edge hardware with sub-250ms latencyprocesses a 512×512 image in 242ms on NVIDIA Jetson Orin with Q4_0 capacity — fast enough for full understanding of the visual language in every frame of 4 FPS video playback without cloud loading.

- Visual comprehension of many languages has improved significantlywith MMMB scores increasing from 54.29 to 68.09 across Arabic, Chinese, French, German, Japanese, Korean, Portuguese, and Spanish, making the model workable for global deployment without separate localized models.

Check it out Technical details again Model weight. Also, feel free to follow us Twitter and don’t forget to join our 120k+ ML SubReddit and Subscribe to Our newspaper. Wait! are you on telegram? now you can join us on telegram too.

Need to work with us on developing your GitHub Repo OR Hug Face Page OR Product Release OR Webinar etc.? contact us