Meta AI and KAUST Researchers Propose Neural Computers That Wrap Computation, Memory, and I/O into a Single Learned Model

Researchers from Meta AI and King Abdullah University of Science and Technology (KAUST) have introduced Neural Computers (NCs), a proposed machine form in which the neural network itself acts as the running computer, rather than as a layer sitting on top of one. The research team presents both a theoretical framework and two working video-based prototypes that demonstrate early runtime primitives in command-line interface (CLI) and graphical user interface (GUI) settings.

What Makes This Different From Agents and World Models

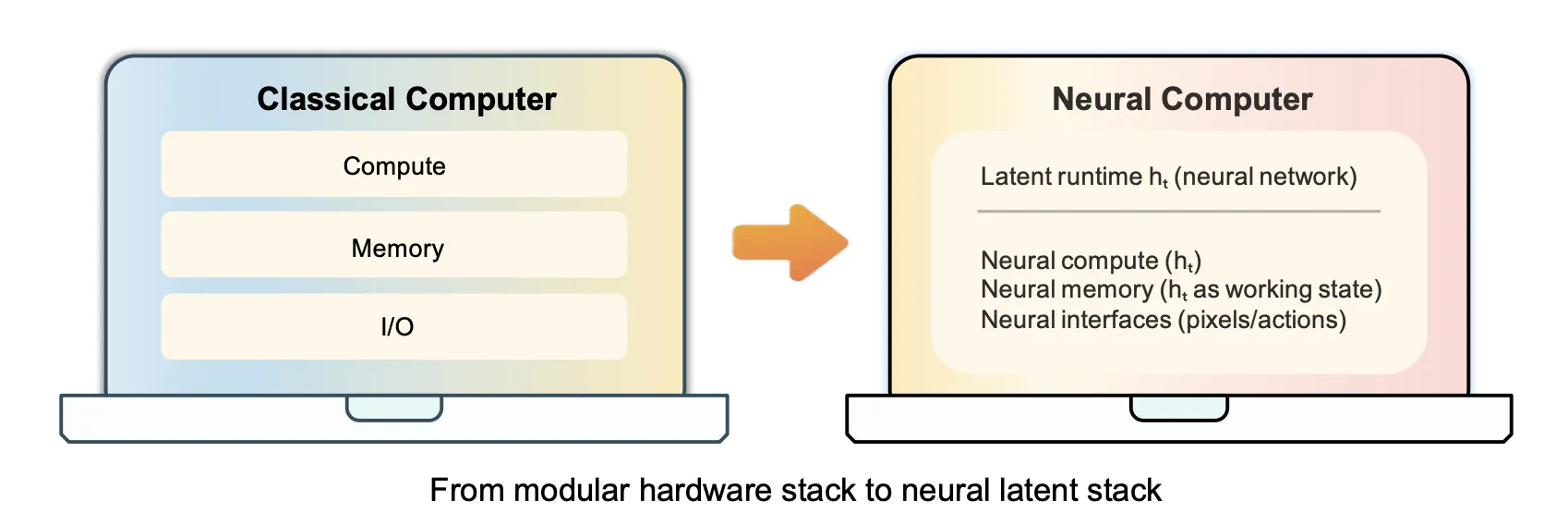

To understand the proposed research, it helps to place it against existing system types. A conventional computer executes explicit programs. An AI agent takes on tasks and uses an existing software stack (operating system, APIs, terminals) to accomplish them. A world model learns to predict how an environment evolves over time. Neural Computers occupy none of these roles exactly. The researchers also explicitly distinguish NCs from the Neural Turing Machine and Differentiable Neural Computer line of work, which focused on differentiable external memory. The NC question is different: can a learning machine begin to assume the role of the running computer itself?

Formally, a Neural Computer (NC) is defined by an update function F_θ and a decoder G_θ operating over a latent runtime state h_t. At each step, the NC updates h_t from the current observation x_t and user action u_t, then samples the next frame x_{t+1}. The latent state carries what the operating system stack would ordinarily handle (executable context, working memory, and interface state) inside the model rather than outside it.
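
The update-then-decode loop described above can be sketched in a few lines. This is a toy illustration only, not the paper's model: the class `ToyNeuralComputer`, its `decay` parameter, and the `rollout` helper are hypothetical names, the state is a short list of floats rather than a learned latent, and the sketch assumes the update also reads the previous state, as in a standard recurrent model.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ToyNeuralComputer:
    """Toy stand-in for the (F_theta, G_theta) pair; hypothetical, not the paper's model."""

    decay: float = 0.5  # hypothetical stand-in for learned parameters

    def update(self, h: List[float], x: List[float], u: List[float]) -> List[float]:
        # F_theta: fold the current observation x_t and action u_t into the state.
        return [self.decay * hi + xi + ui for hi, xi, ui in zip(h, x, u)]

    def decode(self, h: List[float], rng: random.Random) -> List[float]:
        # G_theta: sample the next frame x_{t+1} conditioned on the state h_t.
        return [rng.gauss(hi, 0.01) for hi in h]


def rollout(
    nc: ToyNeuralComputer,
    h0: List[float],
    x0: List[float],
    actions: List[List[float]],
    seed: int = 0,
) -> Tuple[List[float], List[List[float]]]:
    """Run one update/decode step per logged action and collect the sampled frames."""
    rng = random.Random(seed)
    h, x, frames = h0, x0, []
    for u in actions:
        h = nc.update(h, x, u)   # state carries context forward
        x = nc.decode(h, rng)    # next frame is sampled, not computed exactly
        frames.append(x)
    return h, frames


if __name__ == "__main__":
    final_state, frames = rollout(
        ToyNeuralComputer(), [0.0, 0.0], [1.0, 0.0], [[0.0, 1.0]] * 3
    )
    print(len(frames), final_state)
```

The point of the sketch is structural: everything the "computer" knows between steps lives in `h`, and the only outputs are sampled frames.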

The long-term goal is the Completely Neural Computer (CNC): a mature, general-purpose realization that satisfies four conditions at once. It must be Turing complete, universally programmable, behavior-consistent unless explicitly reprogrammed, and must exhibit machine-native architectural and programming-language semantics. A key operational requirement tied to behavior consistency is the run/update contract: ordinary inputs must execute installed capabilities without silently modifying them, while behavior-changing updates must occur explicitly, with changes that are visible, traceable, and reversible.
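
The contract described above, where ordinary inputs leave capabilities untouched and behavior changes are traceable and reversible, can be illustrated with a conventional (non-neural) analogy. The sketch below is an assumption-laden illustration, not anything from the paper: `ContractedRuntime` and its methods are hypothetical names, and capabilities are plain Python callables rather than learned behaviors.

```python
from typing import Callable, Dict, List, Optional, Tuple

Capability = Callable[[int], int]


class ContractedRuntime:
    """Hypothetical illustration of separating 'run' from 'update'."""

    def __init__(self) -> None:
        self._capabilities: Dict[str, Capability] = {}
        # Each log entry records the capability name and what it replaced.
        self._log: List[Tuple[str, Optional[Capability]]] = []

    def run(self, name: str, arg: int) -> int:
        # Ordinary input: executes an installed capability and never modifies it.
        return self._capabilities[name](arg)

    def update(self, name: str, fn: Capability) -> None:
        # Behavior-changing input: explicit, and recorded so it can be traced.
        self._log.append((name, self._capabilities.get(name)))
        self._capabilities[name] = fn

    def rollback(self) -> None:
        # Reverse the most recent update, restoring the previous behavior.
        name, previous = self._log.pop()
        if previous is None:
            del self._capabilities[name]
        else:
            self._capabilities[name] = previous

    def history(self) -> List[str]:
        # Names of updated capabilities, oldest first.
        return [name for name, _ in self._log]


if __name__ == "__main__":
    rt = ContractedRuntime()
    rt.update("scale", lambda v: 2 * v)
    print(rt.run("scale", 3))
    rt.update("scale", lambda v: 3 * v)
    print(rt.run("scale", 3))
    rt.rollback()
    print(rt.run("scale", 3), rt.history())
```

In a conventional program this separation is trivial to enforce; the open problem the article describes is getting a learned runtime to honor the same boundary.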

Two Prototypes Built on Wan2.1

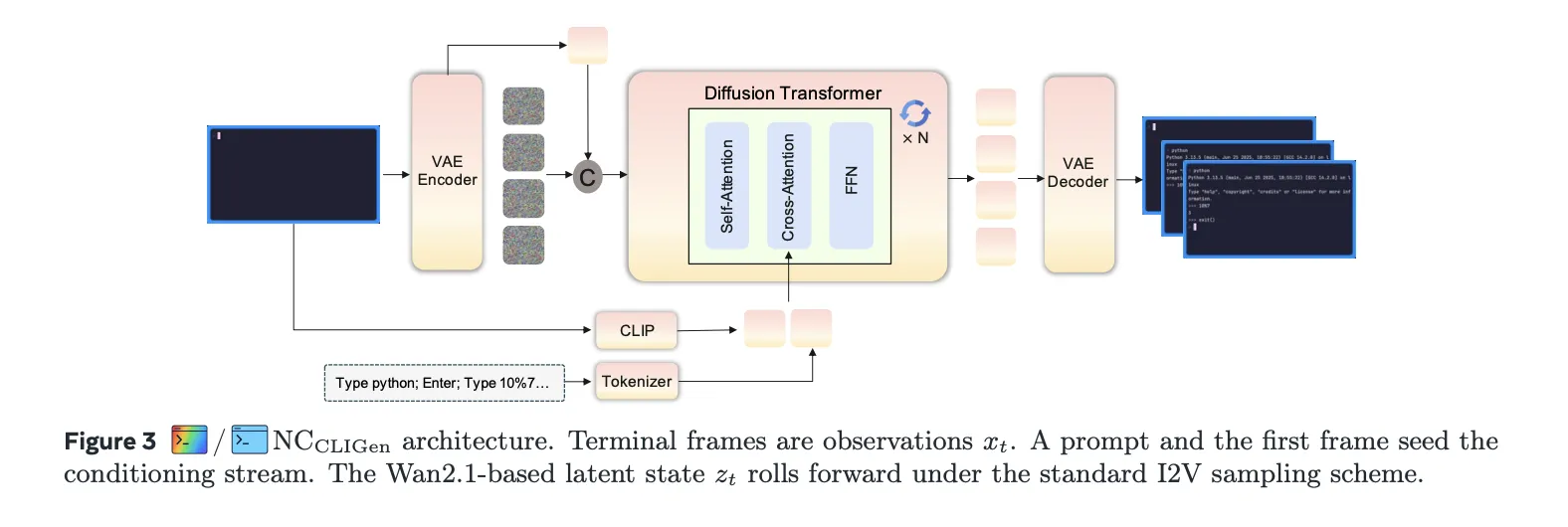

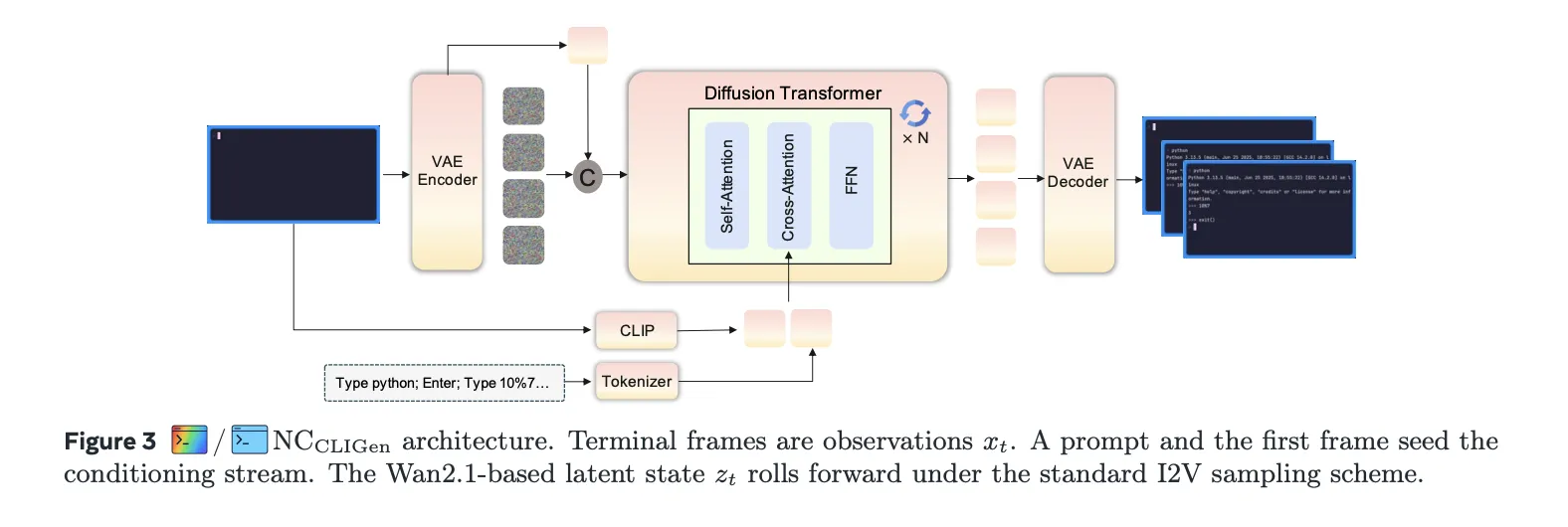

Both prototypes, NCCLIGen and NCGUIWorld, were built on top of Wan2.1, a state-of-the-art video generation model at the time of the experiments, with NC-specific conditioning and action modules added on top. The two models were trained separately, with no shared parameters. Both are evaluated in open-loop mode, rolling out from recorded prompts and logged action streams rather than interacting with a live environment.

NCCLIGen models terminal interaction from a text prompt and an initial screen frame, treating CLI generation as a text-and-image-to-video task. A CLIP image encoder processes the first frame, a T5 text encoder embeds the caption, and these conditioning features are concatenated with the diffusion noise and processed by a DiT (Diffusion Transformer) stack. Two datasets were assembled: CLIGen (General), containing 823,989 video streams (roughly 1,100 hours) sourced from public asciinema .cast recordings; and CLIGen (Clean), split into roughly 78,000 regular traces and roughly 50,000 Python math validation traces generated with the vhs toolkit inside Dockerized environments. Training NCCLIGen on CLIGen (General) required about 15,000 H100 GPU hours; training on CLIGen (Clean) required about 7,000.

Reconstruction quality on CLIGen (General) reached an average PSNR of 40.77 dB and an SSIM of 0.989 at a 13px font size. Character-level accuracy, measured with Tesseract OCR, rose from 0.03 at initialization to 0.54 at 60,000 training steps, with exact-line accuracy reaching 0.31. Caption specificity had a large effect: detailed captions (averaging 76 words) improved PSNR from 21.90 dB under semantic descriptions to 26.89 dB, a gain of almost 5 dB, because terminal frames are governed mainly by text placement, and literal captions act as scaffolding for aligning text to pixels. One training dynamic is worth noting: PSNR and SSIM plateau at around 25,000 steps on CLIGen (Clean), and training up to 460,000 steps yields no meaningful further gains.
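
PSNR, the headline reconstruction metric above, is a log-scale function of mean squared error, so a difference in decibels maps to a multiplicative change in error. The sketch below is a minimal standard-library version, assuming 8-bit pixel values flattened into a list. The function names are illustrative, and the paper's evaluation pipeline may differ, for example in how scores are averaged over frames.

```python
import math
from typing import Sequence


def psnr(reference: Sequence[float], generated: Sequence[float],
         max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized pixel lists."""
    if len(reference) != len(generated) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((r - g) ** 2 for r, g in zip(reference, generated)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


def mse_ratio_from_db_gain(db_gain: float) -> float:
    """Factor by which MSE shrinks for a given PSNR gain (single-image view)."""
    return 10.0 ** (db_gain / 10.0)


if __name__ == "__main__":
    ref = [0, 128, 255, 64]
    gen = [0, 130, 253, 64]
    print(f"PSNR: {psnr(ref, gen):.2f} dB")

    # Caption ablation quoted in the article: 21.90 dB -> 26.89 dB.
    gain = 26.89 - 21.90
    print(f"gain: {gain:.2f} dB, MSE ratio: {mse_ratio_from_db_gain(gain):.2f}x")
```

Read this way, the caption gain of 4.99 dB corresponds to roughly a threefold reduction in mean squared error, under the simplifying assumption that the reported figure behaves like a single aggregate PSNR.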

On symbolic computation, arithmetic probe accuracy on a fixed pool of 1,000 math problems was 4% for NCCLIGen and 0% for base Wan2.1, compared with 71% for Sora-2 and 2% for Veo3.1. Reprompting alone, by supplying the correct answer explicitly in the prompt at inference time, raised NCCLIGen accuracy from 4% to 83% without modifying the backbone or adding reinforcement learning. The research team interprets this as evidence of steerability and faithful rendering of conditioned content, not of native arithmetic computation inside the model.

NCGUIWorld addresses full desktop interaction, modeling each interaction as a synchronized sequence of RGB frames and input events collected at 1024×768 resolution on Ubuntu 22.04 with XFCE4 at 15 FPS. The dataset totals roughly 1,510 hours: Random Slow (~1,000 hours), Random Fast (~400 hours), and 110 hours of goal-directed trajectories collected using Claude CUA. Training used 64 GPUs for about 15 days per run, totaling about 23,000 GPU hours per full pass.
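
The dataset and compute figures above are mutually consistent, which a few lines of arithmetic confirm. The sketch below uses only the approximate numbers quoted in the article; the constant and function names are illustrative, and the frame count is a derived estimate rather than a figure from the paper.

```python
from typing import Dict

# Approximate figures as quoted in the article.
DATASET_HOURS: Dict[str, int] = {
    "Random Slow": 1000,
    "Random Fast": 400,
    "Claude CUA": 110,
}
FPS = 15
NUM_GPUS = 64
DAYS_PER_RUN = 15


def total_hours(splits: Dict[str, int]) -> int:
    """Sum of recorded hours across dataset splits."""
    return sum(splits.values())


def gpu_hours(num_gpus: int, days: int) -> int:
    """GPU hours for a run that keeps every GPU busy around the clock."""
    return num_gpus * days * 24


def frame_count(hours: int, fps: int) -> int:
    """Number of frames in a recording of the given length and frame rate."""
    return hours * 3600 * fps


if __name__ == "__main__":
    hours = total_hours(DATASET_HOURS)
    print(f"total hours: {hours}")
    print(f"GPU hours per run: {gpu_hours(NUM_GPUS, DAYS_PER_RUN)}")
    print(f"estimated frames: {frame_count(hours, FPS):,}")
```

The three splits sum to 1,510 hours, and 64 GPUs for 15 days gives 23,040 GPU hours, matching the "about 23,000" figure.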

The research team tested four action injection schemes (external, contextual, residual, and internal), which differ in how deeply the action embeddings interact with the diffusion backbone. The internal mode, which inserts action cross-attention directly inside each transformer block, achieved the best structural consistency (SSIM+15 of 0.863, FVD+15 of 14.5). The residual mode achieved the best perceptual distance (LPIPS+15 of 0.138). For cursor control, SVG mask/reference conditioning raised cursor accuracy to 98.7%, versus 8.7% with coordinate-only supervision, indicating that treating the cursor as an explicit visual object to supervise is essential. Data quality proved as consequential as architecture: the 110-hour Claude CUA dataset outperformed roughly 1,400 hours of random exploration on all metrics (FVD: 14.72 vs. 20.37 and 48.17), confirming that curated, goal-directed data is substantially more sample-efficient than passive collection.
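
The idea behind the internal scheme, where frame tokens attend over action embeddings inside a transformer block, can be sketched as single-head cross-attention. This is a simplified illustration under stated assumptions, not the paper's architecture: it is written in pure Python, omits the learned query/key/value projections (identity is assumed), and the function names are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def action_cross_attention(frame_tokens: List[Vector],
                           action_embeddings: List[Vector]) -> List[Vector]:
    """Each frame token (query) attends over action embeddings (keys and values).

    The attended action signal is added back to the token as a residual.
    Learned projections are omitted; identity projections are assumed.
    """
    dim = len(frame_tokens[0])
    scale = 1.0 / math.sqrt(dim)
    updated = []
    for query in frame_tokens:
        weights = softmax([dot(query, key) * scale for key in action_embeddings])
        attended = [
            sum(w * value[i] for w, value in zip(weights, action_embeddings))
            for i in range(dim)
        ]
        updated.append([q + a for q, a in zip(query, attended)])
    return updated


if __name__ == "__main__":
    tokens = [[1.0, 0.0], [0.0, 1.0]]
    actions = [[1.0, 0.0], [0.0, 1.0]]  # e.g. embeddings of two input events
    for row in action_cross_attention(tokens, actions):
        print([round(v, 3) for v in row])
```

Because the attention sits inside the block, every frame token can weigh every action event directly, which is one plausible reading of why the deeper injection helped structural consistency.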

What Remains Unsolved

The research team is candid about the gap between the current prototypes and the CNC definition. Stable reuse of learned routines, reliable symbolic computation, long-horizon execution consistency, and explicit runtime governance all remain open. The roadmap they present centers on three acceptance lenses: install-reuse, execution consistency, and update governance. Progress on all three, the research team says, is what could make Neural Computers look less like isolated demonstrations and more like a candidate machine form for next-generation computing.

Key Takeaways

- Neural Computers propose making the model itself the running computer. Unlike AI agents that operate on existing software stacks, NCs aim to fold computation, memory, and I/O into a single learned runtime state, eliminating the separation between the model and the machine on which it runs.

- Early prototypes show measurable interface primitives. Built on Wan2.1, NCCLIGen achieved 40.77 dB PSNR and 0.989 SSIM for terminal rendering, and NCGUIWorld achieved 98.7% cursor accuracy using SVG mask/reference conditioning, confirming that I/O alignment and short-horizon control are learnable from collected interface traces.

- Data quality matters more than data scale. In the GUI experiments, 110 hours of goal-directed trajectories from Claude CUA outperformed roughly 1,400 hours of random exploration on all metrics, showing that curated interaction data is more sample-efficient than passive collection.

- Current models are strong renderers but not native reasoners. NCCLIGen scored only 4% on the arithmetic probes unaided, but reprompting pushed accuracy to 83% without changing the backbone, which is evidence of steerability, not internal computation. Symbolic reasoning remains a major open challenge.

- Three functional gaps must be closed before a Completely Neural Computer can be realized. The research team frames near-term progress around install-reuse (learned capabilities that persist and remain callable), execution consistency (behavior that is reproducible across runs), and update governance (behavioral changes that trace back to explicit reprogramming rather than silent drift).

Michal Sutter is a data science expert with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at turning complex data sets into actionable insights.