Meta Releases TRIBE v2: A Brain Encoding Model That Predicts fMRI Responses Across Video, Audio, and Text Stimuli

Neuroscience has long been a divide-and-conquer field. Researchers typically map specific cognitive functions to isolated brain regions, such as motion in area V5 or faces in the fusiform gyrus, using models tailored to narrow experimental paradigms. Although this has provided deep insight, the resulting field is fragmented, lacking a unified framework to explain how the human brain integrates multisensory information.

Meta’s FAIR team has introduced TRIBE v2, a tri-modal foundation model designed to bridge this gap. By aligning the latent representations of modern AI architectures with human brain activity, TRIBE v2 predicts high-resolution fMRI responses across a variety of naturalistic and experimental conditions.

Architecture: Multi-modal Integration

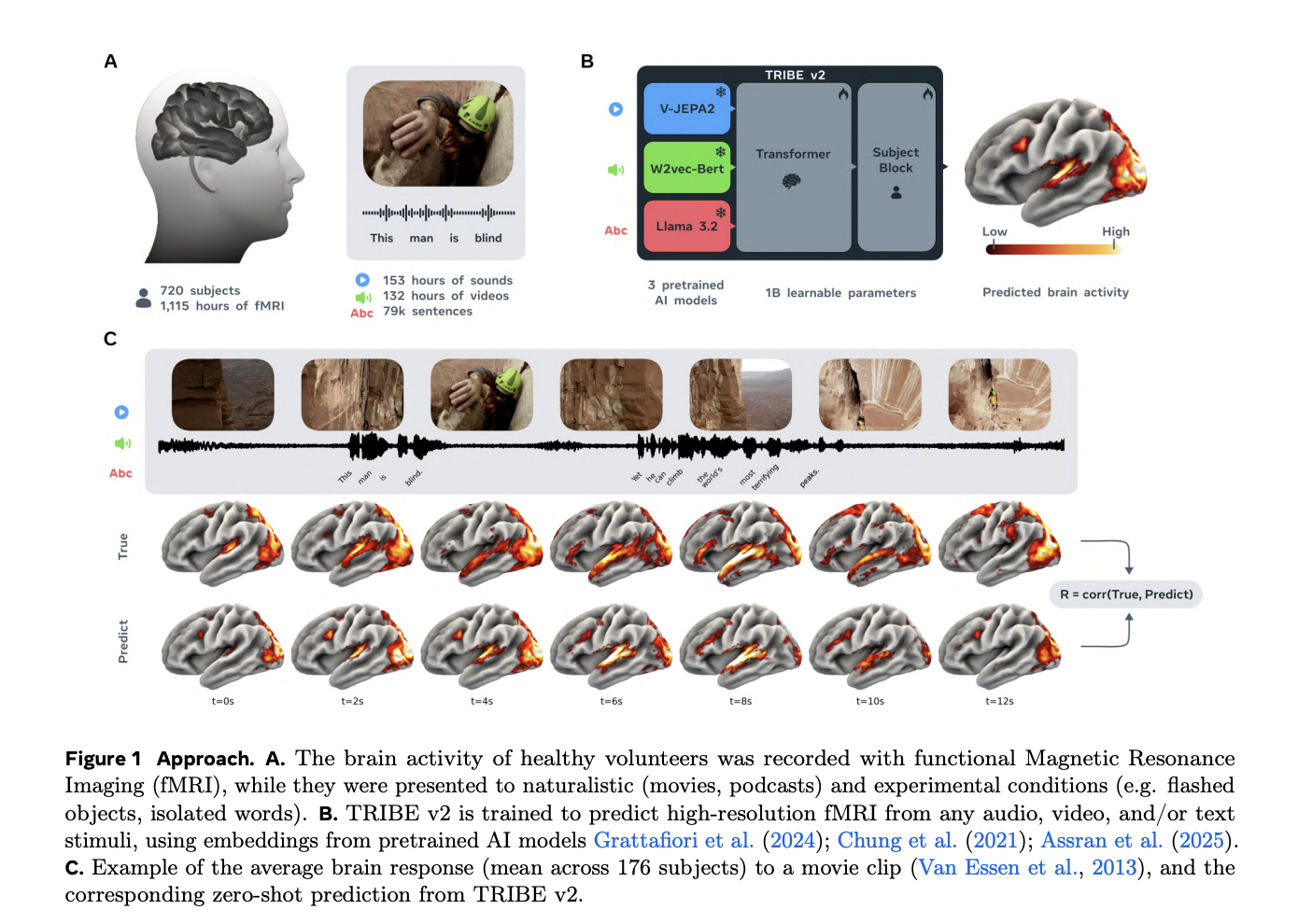

TRIBE v2 does not learn to ‘see’ or ‘hear’ from scratch. Instead, it builds on the representational alignment between deep neural networks and the primate brain. The architecture consists of three frozen foundation models that serve as feature extractors, a temporal Transformer, and a subject-specific prediction block.

1. Feature Extraction

The model processes the stimulus using three specialized encoders:

- Text: Contextualized embeddings are extracted from LLaMA 3.2-3B. For each word, the model includes the 1,024 preceding words to provide temporal context, and the result is mapped onto a 2 Hz grid.

- Video: The model uses V-JEPA2-Giant to process 64-frame segments covering the preceding 4 seconds for each time bin.

- Audio: Sound is processed with Wav2Vec-BERT 2.0, and the representations are resampled to 2 Hz to match the stimulus frequency.
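The shared 2 Hz grid can be pictured with a small sketch. It is only an illustration under stated assumptions, not the released implementation: the helper name `to_2hz_grid`, the choice to average all events whose onset falls in the same 0.5 s bin, and the zero-filling of empty bins are hypothetical.

```python
GRID_HZ = 2.0  # stimulus grid frequency stated in the article


def to_2hz_grid(onsets_s, embeddings, duration_s):
    """Average the embeddings of events that fall into each 0.5 s bin.

    Hypothetical helper: bin averaging and zero-filling are assumptions.
    """
    n_bins = int(duration_s * GRID_HZ)
    dim = len(embeddings[0])
    sums = [[0.0] * dim for _ in range(n_bins)]
    counts = [0] * n_bins
    for onset, emb in zip(onsets_s, embeddings):
        b = int(onset * GRID_HZ)  # index of the 0.5 s bin containing the onset
        if 0 <= b < n_bins:
            counts[b] += 1
            for i, value in enumerate(emb):
                sums[b][i] += value
    # Bins without any event keep their all-zero vector.
    return [[v / c for v in row] if c else row for row, c in zip(sums, counts)]


# Three toy "word embeddings" (dimension 2) over a 2-second clip.
grid = to_2hz_grid([0.1, 0.3, 1.2], [[1.0, 0.0], [3.0, 2.0], [5.0, 5.0]], 2.0)
print(grid)  # [[2.0, 1.0], [0.0, 0.0], [5.0, 5.0], [0.0, 0.0]]
```

The same idea applies to video and audio features: whatever rate an encoder emits at, its outputs end up as one vector per 0.5 s time bin.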

2. Temporal Integration

The resulting embeddings are compressed into a shared dimension and combined to form a multi-modal time series. This sequence is fed into a Transformer encoder (8 layers, 8 attention heads) that exchanges information across a 100-second window.
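As a rough sketch of what the Transformer receives: a 100-second window on a 2 Hz grid corresponds to 200 time steps. The snippet below assumes the modalities are fused by concatenating their vectors at each time step, and `SHARED_DIM = 4` is a toy value chosen for readability; neither detail is confirmed by the text above.

```python
GRID_HZ = 2       # feature grid frequency from the article
WINDOW_S = 100    # Transformer context window from the article
N_STEPS = GRID_HZ * WINDOW_S  # 200 time steps per window

SHARED_DIM = 4    # illustrative per-modality dimension, not the real value


def fuse(text, video, audio):
    """Concatenate the three modality vectors at every time step (assumed fusion)."""
    assert len(text) == len(video) == len(audio)
    return [t + v + a for t, v, a in zip(text, video, audio)]


# Placeholder features: one SHARED_DIM vector per modality and time step.
text = [[0.1] * SHARED_DIM for _ in range(N_STEPS)]
video = [[0.2] * SHARED_DIM for _ in range(N_STEPS)]
audio = [[0.3] * SHARED_DIM for _ in range(N_STEPS)]

sequence = fuse(text, video, audio)
print(len(sequence), len(sequence[0]))  # 200 12
```

Each of the 200 rows would then act as one token for the 8-layer, 8-head encoder.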

3. Subject-Specific Prediction

To predict brain activity, the output of the Transformer is downsampled to the 1 Hz fMRI frequency and passed through a Subject Block. This block projects the latent representations onto 20,484 cortical vertices and 8,802 subcortical voxels.
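A minimal sketch of this last stage, under stated assumptions: downsampling is done by averaging consecutive pairs of 2 Hz steps, and the Subject Block is modeled as one linear map per subject. The function names, the `"sub-01"` key, and the toy sizes are hypothetical; only the vertex and voxel counts come from the article.

```python
N_CORTICAL = 20_484    # cortical vertices (from the article)
N_SUBCORTICAL = 8_802  # subcortical voxels (from the article)
N_TARGETS = N_CORTICAL + N_SUBCORTICAL  # 29,286 outputs per time point


def downsample_2hz_to_1hz(seq):
    """Average consecutive pairs of 2 Hz steps (assumed pooling scheme)."""
    return [[(a + b) / 2 for a, b in zip(seq[i], seq[i + 1])]
            for i in range(0, len(seq) - 1, 2)]


def subject_readout(latent, weights):
    """Linear map from one latent vector to brain targets (weights: targets x latent)."""
    return [sum(w * x for w, x in zip(row, latent)) for row in weights]


# Toy example: 4 steps at 2 Hz, latent dimension 2, 3 targets instead of 29,286.
latents_2hz = [[1.0, 0.0], [3.0, 2.0], [0.0, 4.0], [2.0, 0.0]]
latents_1hz = downsample_2hz_to_1hz(latents_2hz)

# One weight matrix per subject; a new subject would need its own entry.
subject_weights = {"sub-01": [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]}
pred = [subject_readout(z, subject_weights["sub-01"]) for z in latents_1hz]
print(pred)  # [[2.0, 1.0, 3.0], [1.0, 2.0, 3.0]]
```

Keeping the subject-specific part confined to the final projection is what lets the shared Transformer be reused across participants.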

Data and Scaling Laws

A key obstacle in brain encoding is the scarcity of data. TRIBE v2 addresses this by using ‘deep’ datasets for training, where a few subjects are recorded over many hours, and ‘broad’ datasets for evaluation.

- Training: The model was trained on 451.6 hours of fMRI data from 25 subjects across four naturalistic studies (movies, podcasts, and silent videos).

- Evaluation: The model was evaluated on a broader collection totaling 1,117.7 hours from 720 subjects.

The research team observed a log-linear increase in encoding accuracy as the volume of training data increased, with no evidence of a plateau. This suggests that as neuroimaging databases expand, the predictive power of models like TRIBE v2 will continue to grow.
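To make "log-linear" concrete: the claim is that accuracy grows roughly as `a + b * log(hours)`. The sketch below fits that form by ordinary least squares. The data points are synthetic placeholders generated from the formula itself, not results from the study, and `fit_log_linear` is a hypothetical helper.

```python
import math


def fit_log_linear(hours, scores):
    """Least-squares fit of score = a + b * ln(hours)."""
    xs = [math.log(h) for h in hours]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(scores) / n
    b = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, scores))
         / sum((x - mean_x) ** 2 for x in xs))
    a = mean_y - b * mean_x
    return a, b


# Synthetic, exactly log-linear placeholder data (NOT values from the paper).
hours = [10, 50, 100, 200, 400]
scores = [0.05 + 0.03 * math.log(h) for h in hours]

a, b = fit_log_linear(hours, scores)
print(round(a, 3), round(b, 3))  # 0.05 0.03
```

Under such a law, each doubling of training data adds a constant `b * ln(2)` to the score, which is why the absence of a plateau matters.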

Results: Beating the Baselines

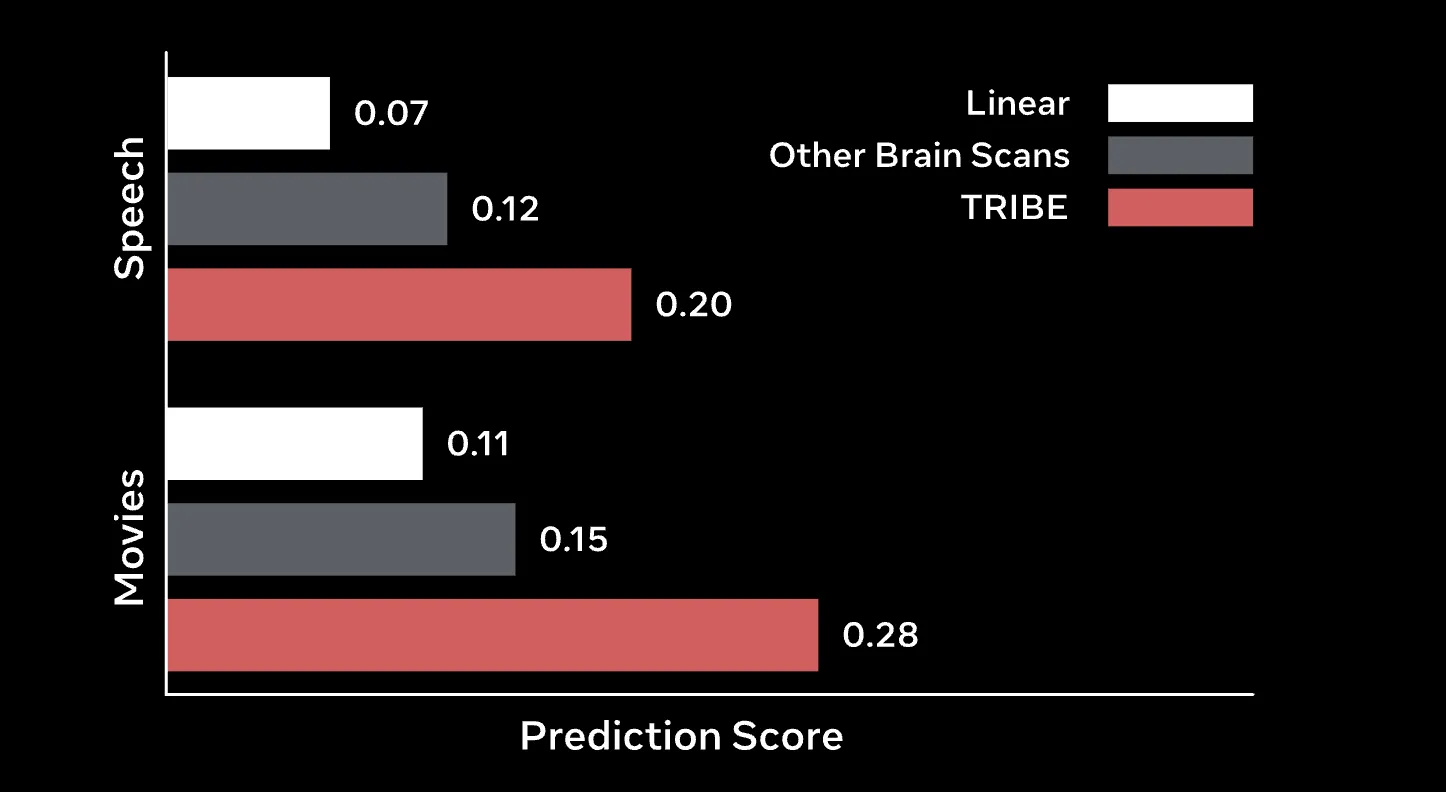

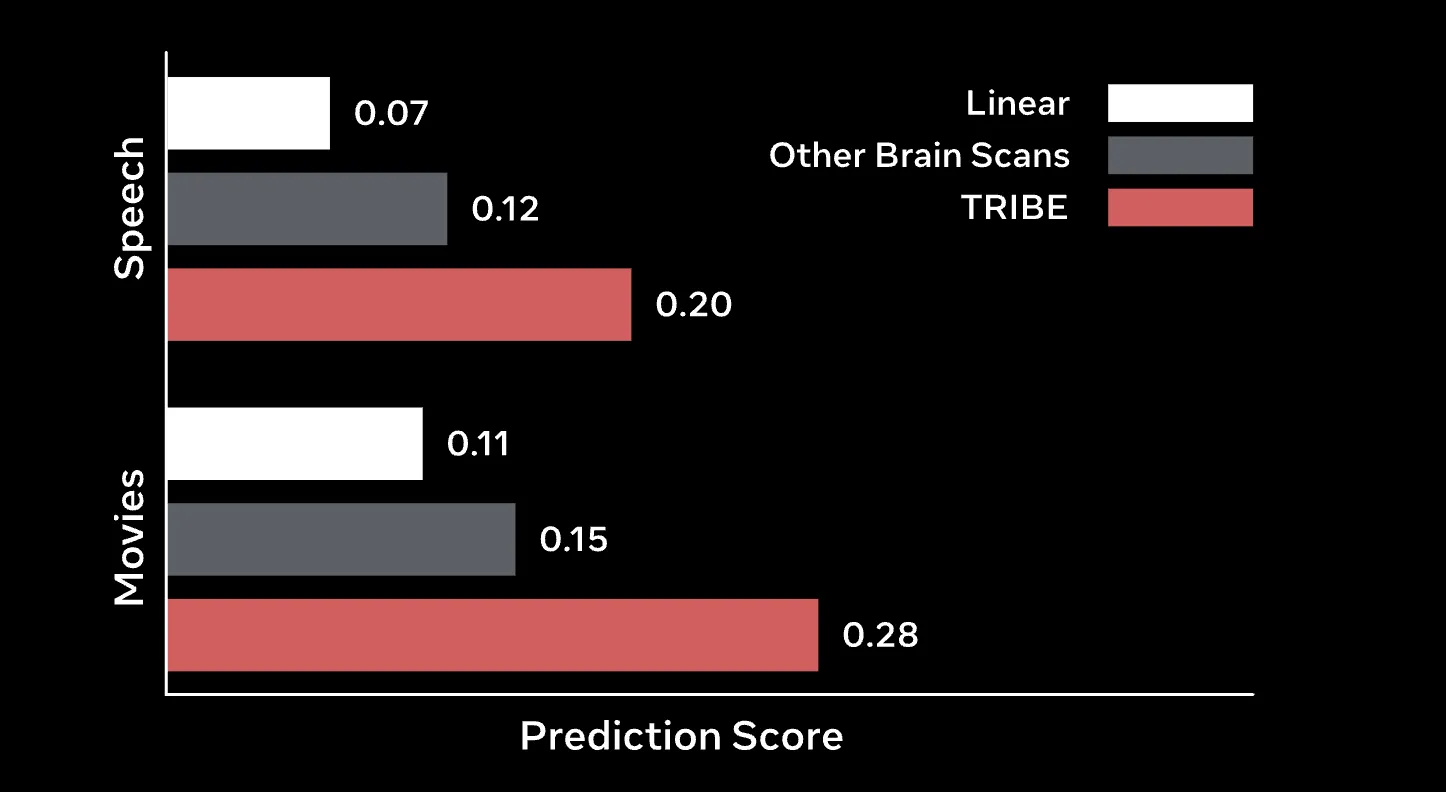

TRIBE v2 outperforms traditional Finite Impulse Response (FIR) models, the long-standing gold standard for voxel-wise encoding.
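For readers unfamiliar with the baseline, an FIR encoding model typically stacks time-delayed copies of the stimulus features and fits a linear (often ridge) regression per voxel. The sketch below shows only the delayed design matrix in its generic form; the article does not describe the exact baseline configuration, so the delays and the helper name `fir_design` are assumptions.

```python
def fir_design(features, delays):
    """Stack time-shifted copies of the feature rows.

    Rows that would reach before the start of the stimulus are zero-padded.
    """
    dim = len(features[0])
    rows = []
    for t in range(len(features)):
        row = []
        for d in delays:
            row.extend(features[t - d] if t - d >= 0 else [0.0] * dim)
        rows.append(row)
    return rows


# One feature over three time points, with delays of 0, 1, and 2 samples.
X = fir_design([[1.0], [2.0], [3.0]], delays=[0, 1, 2])
print(X)  # [[1.0, 0.0, 0.0], [2.0, 1.0, 0.0], [3.0, 2.0, 1.0]]
```

A regression on `X` then learns one weight per feature and delay, which lets the linear model absorb the slow hemodynamic response.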

Zero-Shot and Group Performance

One of the model’s most striking capabilities is zero-shot generalization to new subjects. Using an ‘unseen subject’ layer, TRIBE v2 can predict the group-averaged response of a new cohort more accurately than the actual recordings of many individual subjects in that cohort. On the high-resolution Human Connectome Project (HCP) 7T dataset, TRIBE v2 achieved a group correlation of around 0.4, roughly a two-fold improvement over how well the median individual subject predicts the group average.
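The comparison can be sketched as two Pearson correlations: the model's prediction against the group average, and each individual recording against the average of the remaining subjects. The leave-one-out scheme, the helper names, and the toy numbers below are assumptions for illustration; the study's exact protocol may differ.

```python
import math
from statistics import mean, median


def pearson(x, y):
    """Pearson correlation between two equally long sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def group_predictivity(subjects):
    """Correlate each subject with the average of the other subjects."""
    scores = []
    for i, recording in enumerate(subjects):
        others = [s for j, s in enumerate(subjects) if j != i]
        others_avg = [mean(col) for col in zip(*others)]
        scores.append(pearson(recording, others_avg))
    return scores


# Toy time courses for one voxel: three subjects plus a model prediction.
subjects = [[1.0, 2.0, 3.0, 4.0], [1.5, 1.0, 3.5, 3.0], [0.5, 2.5, 2.0, 4.5]]
model_pred = [1.0, 2.0, 3.0, 4.0]

group_avg = [mean(col) for col in zip(*subjects)]
model_score = pearson(model_pred, group_avg)
median_subject_score = median(group_predictivity(subjects))
print(model_score, median_subject_score)
```

A model "beats" a typical subject in this sense when `model_score` exceeds `median_subject_score`, which is plausible because single recordings carry measurement noise that a group average smooths out.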

Fine-Tuning

Given a small amount of data (typically one hour) for a new participant, fine-tuning TRIBE v2 for one session leads to a two- to fourfold improvement over linear models trained from scratch.

In-Silico Experimentation

The research team says TRIBE v2 could be useful for piloting or pre-screening neuroimaging studies. By running virtual experiments on the Individual Brain Charting (IBC) dataset, the model recovered classic functional landmarks:

- Vision: It accurately localized the fusiform face area (FFA) and the parahippocampal place area (PPA).

- Language: It successfully recovered the temporo-parietal junction (TPJ) for emotional processing and Broca’s area for syntax.

In addition, applying Independent Component Analysis (ICA) to the last layer of the model revealed that TRIBE v2 naturally learns five well-known functional networks: primary auditory, language, motion, default mode, and visual.
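One way to picture such a virtual experiment is a standard localizer contrast computed on predicted rather than measured responses: average the predictions for each condition and subtract them vertex by vertex. This is a hedged sketch, not the authors' pipeline; `contrast_map` is a hypothetical helper and the prediction arrays are made-up toy values standing in for model output.

```python
from statistics import mean


def contrast_map(pred_a, pred_b):
    """Per-vertex difference of mean predicted responses (condition A minus B).

    Both inputs are lists of time points, each a list with one value per vertex.
    """
    mean_a = [mean(col) for col in zip(*pred_a)]
    mean_b = [mean(col) for col in zip(*pred_b)]
    return [a - b for a, b in zip(mean_a, mean_b)]


# Toy predictions for 3 vertices over 2 time points per condition.
pred_faces = [[1.0, 0.2, 0.1], [0.8, 0.0, 0.3]]
pred_places = [[0.2, 0.1, 0.9], [0.0, 0.3, 1.1]]

faces_vs_places = contrast_map(pred_faces, pred_places)
peak_vertex = max(range(len(faces_vs_places)), key=faces_vs_places.__getitem__)
print(peak_vertex)  # 0
```

In a real setting, vertices with the strongest positive contrast would be compared against the known location of a region such as the FFA.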

Key Takeaways

- A Powerhouse Tri-modal Architecture: TRIBE v2 is a foundation model that integrates video, audio, and language using state-of-the-art encoders such as LLaMA 3.2 for text, V-JEPA2 for video, and Wav2Vec-BERT for audio.

- Log-Linear Scaling Laws: Like the Large Language Models we use every day, TRIBE v2 follows a log-linear scaling law; its ability to accurately predict brain activity increases steadily as more fMRI data is provided, with no performance plateau currently in sight.

- Superior Zero-Shot Generalization: The model can predict the brain responses of unseen subjects in new experimental conditions without additional training. Remarkably, its zero-shot predictions are often more accurate at estimating group-averaged brain responses than the recordings of individual subjects.

- The Dawn of In-Silico Neuroscience: TRIBE v2 enables ‘in-silico’ experiments, allowing researchers to run virtual neuroscientific experiments on a computer. It has successfully replicated decades of empirical research by identifying specialized regions such as the fusiform face area (FFA) and Broca’s area using only digital simulation.

- Emergent Biological Interpretability: Although it is a deep learning ‘black box’, the internal representations of the model naturally organize themselves into five known functional networks: primary auditory, language, motion, default mode, and visual.

Michal Sutter is a data science expert with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at turning complex data sets into actionable insights.