The Engineering Complexity of AI Model Orchestration on No-Code Platforms

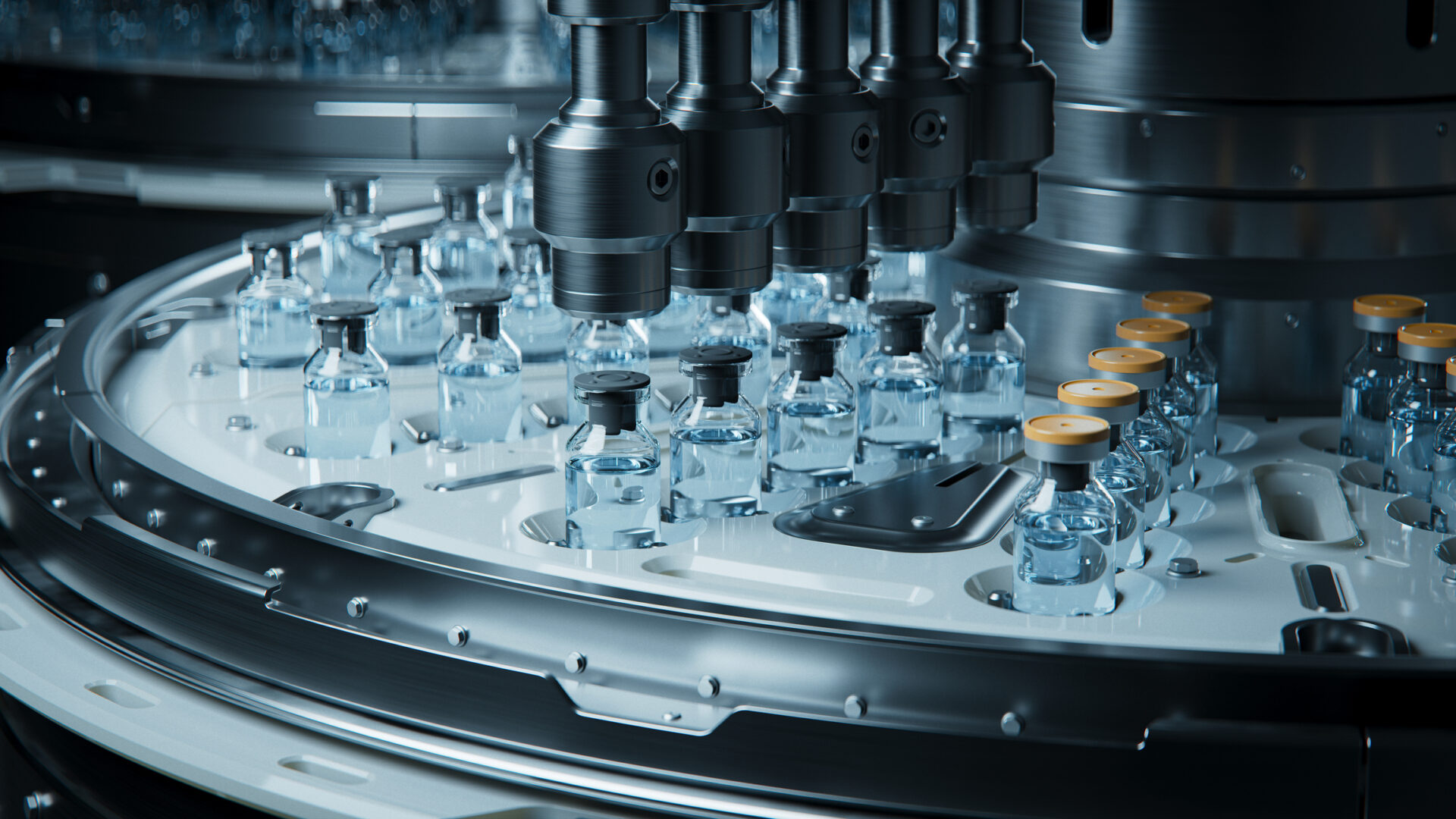

Consider a Fortune 500 business that needs to apply sentiment analysis to all of its customer support tickets, product reviews, and social media mentions. This situation represents a paradigm shift from “build vs. buy” to “configure vs. code.”

Organizations can approach AI implementation in three ways: building custom integrations against model providers’ APIs, purchasing a separate SaaS solution from each vendor, or configuring a unified visual platform that abstracts that integration behind a single orchestration layer. Each approach carries different trade-offs across API management, authentication, rate limiting, and error handling. Custom builds require teams to implement each of these concerns manually for every provider, whereas visual platforms bundle them, shifting maintenance responsibility to the platform and letting teams focus on workflow design instead of infrastructure.

Organizations trying to integrate multiple AI models and services face a key question: how do you build no-code platforms without sacrificing the technical control that engineers want? The technical architecture underlying visual abstractions presents unique engineering challenges that require sophisticated solutions.

The Technical Architecture Behind Visual Abstractions

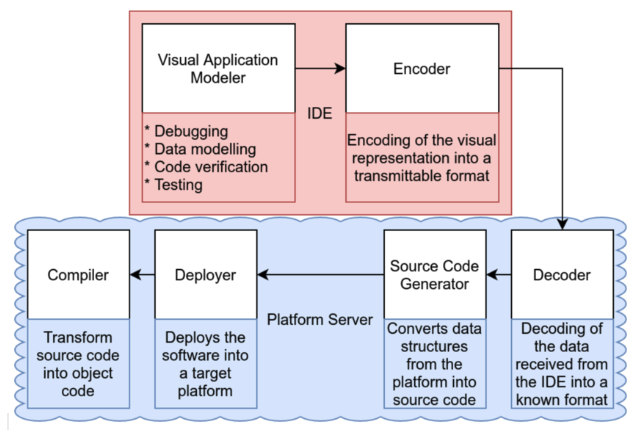

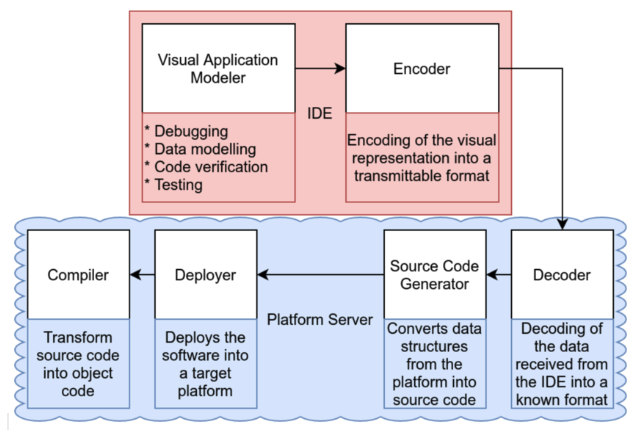

No-code AI platforms must abstract model training and orchestration into visual constructs while preserving the full range of underlying AI capabilities. Effective platforms use a multi-layered architecture, a common pattern for low-code platforms, in which an API gateway/vendor layer handles authentication, applies rate limits, and routes each canvas block to the appropriate model microservice.

This figure shows the layered architecture of AI workflow orchestration, from visual abstraction down to model execution. Source: ResearchGate

LLM orchestration frameworks, such as LangChain and LangGraph, expose high-level primitives (data, memory, tools) that map directly to drag-and-drop nodes. n8n exemplifies this approach with a broad set of AI nodes integrated with LangChain, showing how platforms can achieve an AI-native architecture while keeping the visual experience simple.

This leads to declarative configuration, in which visual workflows generate JSON or YAML documents that describe the desired state rather than imperative code, leaving the platform free to handle the execution mechanics behind the scenes.
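The declarative idea can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the JSON schema, the node types (`constant`, `strip`, `upper`), and the `run_workflow` helper are hypothetical and do not reflect the export format of n8n or any other platform. The point is only that the document names what should happen, and a small engine decides the execution order.

```python
import json
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical workflow document, as a visual editor might export it.
WORKFLOW_JSON = """
{
  "nodes": {
    "fetch": {"type": "constant", "params": {"value": "  Great product!  "}},
    "clean": {"type": "strip", "inputs": ["fetch"]},
    "shout": {"type": "upper", "inputs": ["clean"]}
  }
}
"""

# Registry mapping declared node types to implementations (all illustrative).
HANDLERS = {
    "constant": lambda params, inputs: params["value"],
    "strip": lambda params, inputs: inputs[0].strip(),
    "upper": lambda params, inputs: inputs[0].upper(),
}


def run_workflow(document: str) -> dict:
    """Execute nodes in dependency order and return every node's output."""
    nodes = json.loads(document)["nodes"]
    # TopologicalSorter expects a mapping of node -> its predecessors.
    graph = {name: spec.get("inputs", []) for name, spec in nodes.items()}
    results = {}
    for name in TopologicalSorter(graph).static_order():
        spec = nodes[name]
        inputs = [results[dep] for dep in spec.get("inputs", [])]
        results[name] = HANDLERS[spec["type"]](spec.get("params", {}), inputs)
    return results
```

Because the document only declares nodes and their inputs, the engine could later run independent nodes in parallel or cache results without any change to the workflow itself.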

Abstraction introduces trade-offs. Drag-and-drop interfaces provide accessibility but can limit fine-grained control over parameters. Advanced platforms address this with progressive disclosure, where complexity is revealed according to the user’s skill level.

Multi-Model Orchestration Challenges

Coordinating multiple AI services at the same time is difficult. Multi-agent orchestration requires intelligent routing systems that analyze incoming queries and route them to the correct models based on semantic understanding, performance metrics, and cost considerations.
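A routing decision of this kind might look like the following sketch. It is deliberately simplified: the semantic analysis step is replaced by an explicit task label, and the model names, cost units, and latency figures in `CATALOG` are invented for illustration rather than taken from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    capabilities: frozenset
    cost_per_call: float    # arbitrary units, illustrative only
    p95_latency_ms: float   # illustrative only


# Hypothetical catalog; a real platform would populate this from live metrics.
CATALOG = [
    ModelOption("small-text", frozenset({"sentiment", "classification"}), 0.2, 80),
    ModelOption("large-text", frozenset({"sentiment", "summarization"}), 2.0, 600),
    ModelOption("vision", frozenset({"image-captioning"}), 1.5, 400),
]


def route(task: str, max_latency_ms: float, catalog=CATALOG) -> ModelOption:
    """Pick the cheapest model that supports the task within the latency budget."""
    candidates = [
        m for m in catalog
        if task in m.capabilities and m.p95_latency_ms <= max_latency_ms
    ]
    if not candidates:
        raise LookupError(f"no model can handle {task!r} within {max_latency_ms} ms")
    return min(candidates, key=lambda m: m.cost_per_call)
```

Under these assumptions, a sentiment request with a generous budget goes to the cheaper `small-text` entry, while a summarization request can only be served by `large-text`.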

State management is another major challenge. Coordinating multiple AI models means managing distributed tasks across services while maintaining conversational state and using a memory architecture that balances short-term and long-term needs.
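One way to picture the short-term versus long-term balance is the sketch below. The `ConversationMemory` class and its method names are hypothetical, and an in-process dictionary stands in for what would normally be a durable store shared across services.

```python
from collections import deque


class ConversationMemory:
    """Short-term window of recent turns plus a long-term key/value store."""

    def __init__(self, window: int = 3):
        self.short_term = deque(maxlen=window)  # oldest turns fall off automatically
        self.long_term = {}                     # durable facts, keyed by name

    def add_turn(self, role: str, text: str) -> None:
        self.short_term.append((role, text))

    def remember(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def build_context(self) -> str:
        """Assemble the context handed to the next model call."""
        facts = [f"{k}: {v}" for k, v in sorted(self.long_term.items())]
        turns = [f"{role}: {text}" for role, text in self.short_term]
        return "\n".join(facts + turns)
```

The bounded window keeps the prompt size predictable, while facts promoted to the long-term store survive after the turns that produced them have scrolled out.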

Zapier’s business success illustrates orchestration at scale: its AI adoption reaches 89%, while enterprise customers reported resolving 28% of IT tickets automatically with a 3-person team supporting 1,700 employees.

Platform Engineering Trade-offs

Balancing accessibility against technical control is the central tension in the design of a no-code AI platform. Successful platforms take a hybrid approach that supports both visual development and code-based customization, and they follow API-first design principles so that every visual function remains programmatically accessible.

Integration patterns that prevent technical debt need careful consideration: version control integration, where visual workflows are exported to Git-compatible formats; API contracts defined with standard specifications; and a modular structure that favors reusable components over a monolithic design.
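For the version control point, a small sketch shows why the export format matters. The `to_canonical_json` helper is a hypothetical name, and the approach (sorted keys, fixed indentation, trailing newline) is one common convention rather than a requirement of Git or of any platform.

```python
import json


def to_canonical_json(workflow: dict) -> str:
    """Serialize deterministically so equal workflows produce identical files."""
    return json.dumps(workflow, sort_keys=True, indent=2, ensure_ascii=False) + "\n"


# Two in-memory representations of the same workflow, built in different orders.
a = {"name": "sentiment", "nodes": {"clean": {"type": "strip"}, "fetch": {"type": "http"}}}
b = {"nodes": {"fetch": {"type": "http"}, "clean": {"type": "strip"}}, "name": "sentiment"}
```

With a deterministic serialization, both `a` and `b` produce the same text, so a Git diff only shows changes a user actually made on the canvas rather than incidental key reordering.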

Common edge cases that challenge no-code platforms include conditional logic whose branching is too intricate to represent visually, high-frequency real-time processing that exceeds what a visual abstraction layer can sustain, and dynamic workflow generation based on runtime conditions. Platform design should anticipate these cases by providing escape hatches and hybrid execution models.
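An escape hatch can be as simple as letting engineers register a code-backed step that sits alongside the built-in visual ones. The sketch below assumes a hypothetical step registry; the step names, the `custom_step` decorator, and the ticket fields are all invented for illustration.

```python
from typing import Callable, Dict

Step = Callable[[dict], dict]

# Steps a visual editor would offer out of the box (illustrative).
BUILT_IN: Dict[str, Step] = {
    "tag_negative": lambda item: {**item, "negative": item["score"] < 0},
}

# Steps contributed by engineers as code: the escape hatch.
CUSTOM: Dict[str, Step] = {}


def custom_step(name: str):
    """Decorator that registers a code-backed step under a canvas-visible name."""
    def register(fn: Step) -> Step:
        CUSTOM[name] = fn
        return fn
    return register


@custom_step("escalation_tier")
def escalation_tier(item: dict) -> dict:
    # Nested branching that would be awkward to draw as visual nodes.
    if item["negative"] and item["customer_tier"] == "enterprise":
        tier = "page-on-call"
    elif item["negative"]:
        tier = "queue-high"
    else:
        tier = "queue-normal"
    return {**item, "tier": tier}


def run_pipeline(steps: list, item: dict) -> dict:
    """Run built-in and custom steps through the same interface."""
    for step in steps:
        handler = BUILT_IN.get(step) or CUSTOM.get(step)
        if handler is None:
            raise KeyError(f"unknown step {step!r}")
        item = handler(item)
    return item
```

Because custom steps share the same signature as built-in ones, the canvas can treat them as ordinary blocks while the branching logic stays in code.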

Considerations for Developer Experience

Enterprise adoption depends on technical capabilities that preserve flexibility while providing accessibility. Key requirements include full API coverage with REST, GraphQL, and WebSocket support, enterprise authentication features such as SSO and OAuth 2.0, and local development environments with testing capabilities.

Debugging and observability become especially challenging when a workflow involves multiple AI services. Distributed tracing based on OpenTelemetry standards provides request tracking across services, while real-time monitoring dashboards and AI-powered root cause analysis can identify issues in seconds.

Effective extension mechanisms include fully documented custom component SDKs, plugin architectures for dynamic extension loading, and webhook systems for external integration.

Future Technical Directions

The architecture of AI orchestration platforms is rapidly evolving to support multimodal AI integration. Unified multimodal interfaces that handle text, image, audio, and video require stream processing architectures for real-time data, along with cross-modal transformation capabilities that allow automatic conversion between modalities.

AI agents are taking on key infrastructure roles, with self-service agents that automate system operations, self-healing infrastructure that detects and corrects errors, and predictive maintenance agents that prevent failures before they happen.

In the next 2-3 years, orchestration engines will need to achieve semantic interoperability across emerging AI models and providers, deliver real-time performance at scale with consistent sub-100ms latency, and use privacy-preserving computation to process sensitive data.

Competitive Position Insights

Success lies not in removing complexity, but in designing systems that scale from simple workflows to complex enterprise integrations without forcing users to choose between power and accessibility. The competitive advantage will go to platforms that master the balancing act of hiding complexity while preserving engineer control through escape hatches, extensibility, and programmatic access.

The platforms that dominate the next phase of AI adoption will solve a fundamental engineering challenge: making AI accessible to non-technical users while providing the technical depth that engineers need.