AI Compute Architectures Every Developer Should Know: CPUs, GPUs, TPUs, NPUs, and LPUs Compared

Modern AI is no longer powered by a single type of processor. It runs on a diverse ecosystem of specialized computing architectures, each making deliberate trade-offs between flexibility, parallelism, and memory efficiency. While traditional systems relied heavily on CPUs, today's AI workloads are distributed across GPUs for massively parallel throughput, TPUs built specifically for neural network math with streamlined data flow, and NPUs for efficient on-device inference.

Emerging designs such as Groq's LPU push further still, targeting very fast, energy-efficient inference for large language models. As businesses move from general-purpose computing to hardware tuned for specific workloads, understanding these architectures has become essential for AI developers.

In this article, we’ll explore some of the most common AI computing architectures and break down how they differ in design, functionality, and real-world use cases.

Central Processing Unit (CPU)

The CPU (Central Processing Unit) remains the foundation of modern computing and continues to play an important role even in AI-driven systems. Designed for general-purpose workloads, CPUs excel at handling complex logic, branching operations, and system-level orchestration. They act as the "brain" of the computer: managing operating systems, coordinating hardware components, and running a variety of applications from databases to web browsers. Although the heavy math of AI workloads has shifted to specialized hardware, CPUs are still important as controllers that manage data flow, schedule tasks, and coordinate accelerators such as GPUs and TPUs.

From an architectural perspective, CPUs are built with a small number of high-performance cores, deep cache hierarchies, and access to off-chip DRAM, allowing for efficient sequential processing and multitasking. This makes them more versatile, easier to program, more widely available, and less expensive for general computing applications.

However, their sequential nature limits their ability to handle highly parallel tasks such as matrix multiplication, making them less suitable for large AI workloads compared to GPUs. While CPUs can handle a variety of tasks reliably, they often become bottlenecks when working with large data sets or highly parallel computations, and that is where specialized processors excel. Most importantly, CPUs are not being replaced by GPUs; instead, they complement them by scheduling workloads and managing the overall system.
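To make the bottleneck concrete, here is a minimal sketch of matrix multiplication written the way a single sequential core would execute it. The function name `matmul_sequential` is hypothetical and only for illustration; the point is the step counter, which grows as n × m × k.

```python
def matmul_sequential(a, b):
    """Naive matrix multiply, one multiply-accumulate (MAC) at a time.

    Returns the product and the number of MAC steps performed.
    """
    n, k, m = len(a), len(b), len(b[0])
    out = [[0] * m for _ in range(n)]
    macs = 0
    for i in range(n):
        for j in range(m):
            for p in range(k):
                out[i][j] += a[i][p] * b[p][j]  # one MAC per iteration
                macs += 1
    return out, macs


result, macs = matmul_sequential([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(result)  # [[19, 22], [43, 50]]
print(macs)    # 8
```

Each output cell depends only on one row of `a` and one column of `b`, so the cells could all be computed at once. A sequential loop cannot exploit that independence, which is the gap parallel accelerators fill.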

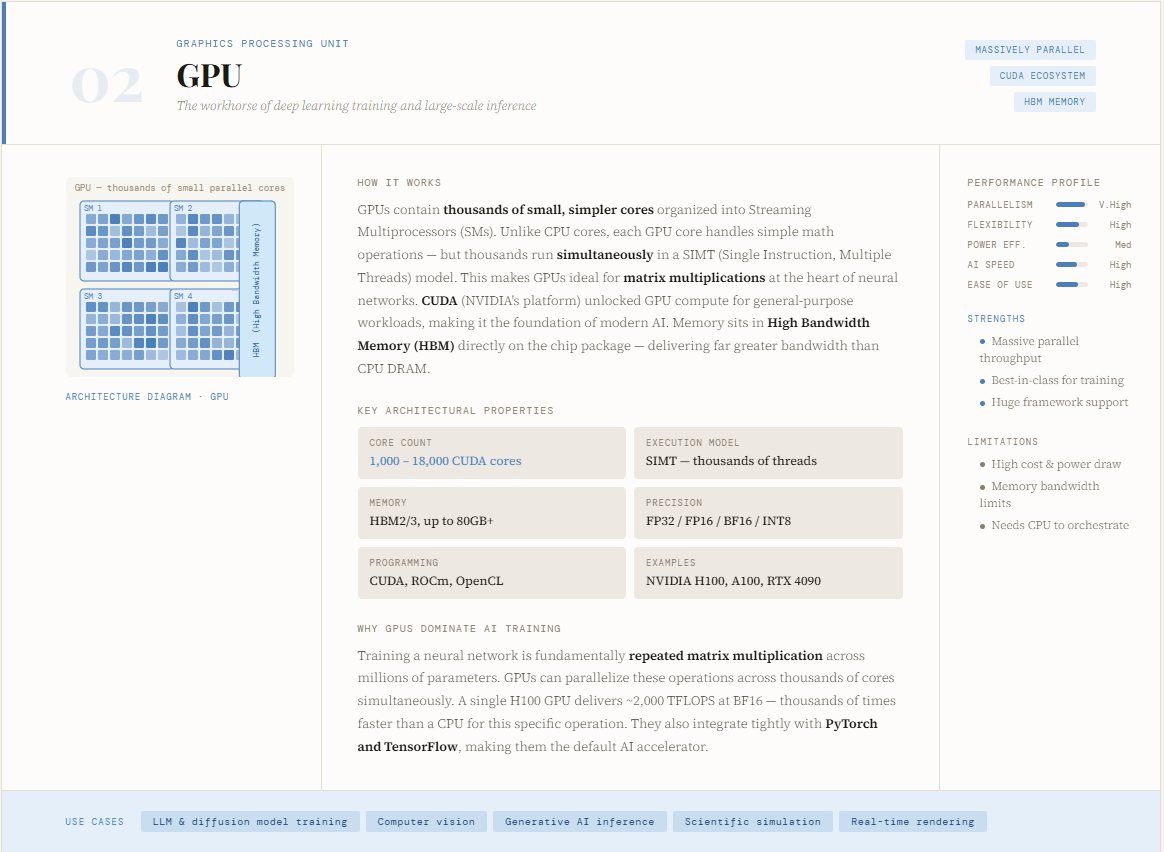

Graphics Processing Unit (GPU)

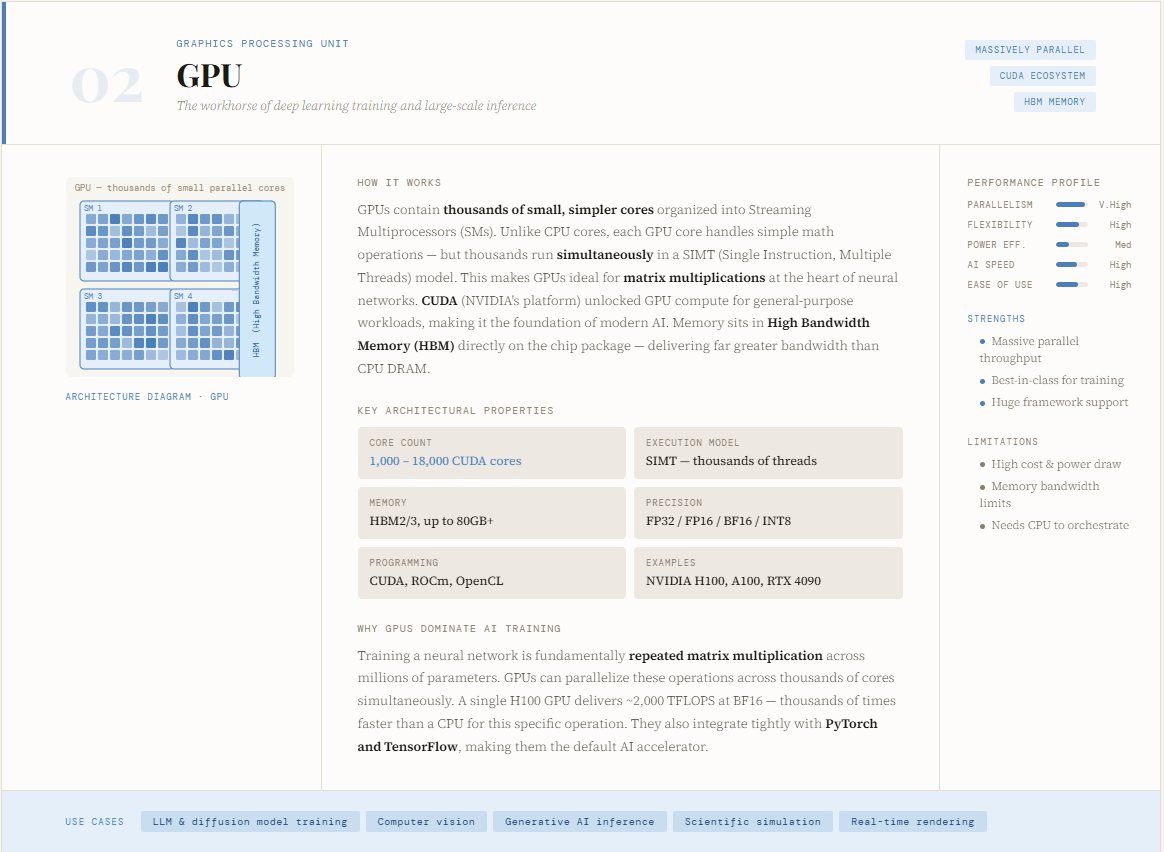

The GPU (Graphics Processing Unit) has become the backbone of modern AI, especially for training deep learning models. Originally designed to render graphics, GPUs evolved into powerful computing engines with the introduction of platforms like CUDA, which enabled developers to use their parallel processing capabilities for general-purpose computing. Unlike CPUs, which focus on sequential operations, GPUs are designed to handle thousands of simultaneous operations, making them well suited for the matrix multiplication and tensor operations that power neural networks. This architectural difference is precisely why GPUs dominate AI training workloads today.

From a design perspective, GPUs contain thousands of smaller, simpler cores optimized for parallel computing, allowing them to break large problems into smaller pieces and process them simultaneously. This allows for significant acceleration of data-intensive tasks such as deep learning, computer vision, and generative AI. Their strengths lie in handling highly parallel workloads and integrating well with popular ML frameworks such as PyTorch and TensorFlow.
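The "break it into pieces" idea can be sketched in plain Python by splitting the output matrix into independent tiles and handing each tile to a worker. This is only an illustration of the decomposition: the names `compute_tile` and `matmul_tiled` are hypothetical, and threads in standard CPython will not deliver GPU-like speedups.

```python
from concurrent.futures import ThreadPoolExecutor


def compute_tile(a, b, rows, cols):
    """Compute one tile of the output; it shares no state with other tiles."""
    k = len(b)
    return {
        (i, j): sum(a[i][p] * b[p][j] for p in range(k))
        for i in rows
        for j in cols
    }


def matmul_tiled(a, b, tile=2, workers=4):
    """Split the output into tile x tile blocks and compute them concurrently."""
    n, m = len(a), len(b[0])
    tiles = [
        (range(r, min(r + tile, n)), range(c, min(c + tile, m)))
        for r in range(0, n, tile)
        for c in range(0, m, tile)
    ]
    out = [[0] * m for _ in range(n)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(compute_tile, a, b, rows, cols) for rows, cols in tiles]
        for future in futures:
            for (i, j), value in future.result().items():
                out[i][j] = value
    return out


a = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
print(matmul_tiled(a, identity))  # [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
```

Because no tile reads another tile's results, the work can be spread over as many workers as there are tiles. A GPU applies the same principle at a much finer grain and in hardware.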

However, GPUs come with trade-offs: they are more expensive, less widely available than CPUs, and require specialized programming knowledge. Although they significantly outperform CPUs on parallel tasks, they are less efficient at tasks that involve complex branching logic or sequential decision making. Essentially, GPUs act as accelerators, working alongside CPUs to handle heavy computing tasks while the CPU handles orchestration and control.

Tensor Processing Unit (TPU)

The TPU (Tensor Processing Unit) is a custom AI accelerator designed by Google specifically for neural network workloads. Unlike CPUs and GPUs, which retain some level of general-purpose flexibility, TPUs are purpose-built to maximize the performance of deep learning tasks. They power Google's massive AI systems, including search, recommendations, and models like Gemini, that serve billions of users around the world. By focusing only on tensor operations, TPUs push performance and efficiency further than GPUs, especially in large-scale training and inference environments used with platforms such as Google Cloud.

At the architectural level, TPUs use a grid of multiply-accumulate (MAC) units, commonly called a matrix multiply unit (MXU), through which data flows in a systolic (wave-like) pattern. The weights flow from one side, the activations from the other, and the intermediate results propagate across the grid without repeatedly accessing memory, greatly improving speed and energy efficiency. Execution is controlled by the compiler rather than scheduled by hardware at run time, which enables high efficiency and predictability. This design makes TPUs extremely powerful for large matrix-centric AI tasks.
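The systolic pattern is easier to see in a small cycle-by-cycle simulation. The sketch below models an output-stationary array, one of several possible systolic dataflows, in which both operands stream through the grid and each cell keeps its own running sum. It is not claimed to match the exact dataflow of a real MXU, and the function name `systolic_matmul` is hypothetical.

```python
def systolic_matmul(a, b):
    """Simulate an n x m grid of MAC cells computing a (n x k) times b (k x m).

    Rows of `a` enter from the left edge, columns of `b` from the top edge,
    each skewed by one cycle per row or column. Returns (result, cycles).
    """
    n, k, m = len(a), len(b), len(b[0])
    acc = [[0] * m for _ in range(n)]    # running sum held in each cell
    a_reg = [[0] * m for _ in range(n)]  # operand moving left to right
    b_reg = [[0] * m for _ in range(n)]  # operand moving top to bottom
    cycles = n + m + k - 2
    for t in range(cycles):
        # Shift every `a` value one cell right, then feed the left edge.
        for i in range(n):
            for j in range(m - 1, 0, -1):
                a_reg[i][j] = a_reg[i][j - 1]
            src = t - i  # row i is delayed by i cycles
            a_reg[i][0] = a[i][src] if 0 <= src < k else 0
        # Shift every `b` value one cell down, then feed the top edge.
        for j in range(m):
            for i in range(n - 1, 0, -1):
                b_reg[i][j] = b_reg[i - 1][j]
            src = t - j  # column j is delayed by j cycles
            b_reg[0][j] = b[src][j] if 0 <= src < k else 0
        # Every cell performs one multiply-accumulate per cycle.
        for i in range(n):
            for j in range(m):
                acc[i][j] += a_reg[i][j] * b_reg[i][j]
    return acc, cycles


product, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(product)  # [[19, 22], [43, 50]]
print(cycles)   # 4
```

Each input value is read from memory once at the edge of the grid and then reused as it passes from cell to cell. That reuse is where the memory-traffic savings described above come from.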

However, this specialization comes with a trade-off: TPUs are less flexible than GPUs, rely on specific software stacks (such as TensorFlow, JAX, or PyTorch with XLA), and are primarily accessible through cloud environments. Essentially, while GPUs excel at general-purpose parallel acceleration, TPUs take it a step further, sacrificing flexibility to achieve very high efficiency for neural network computation at scale.

Neural Processing Unit (NPU)

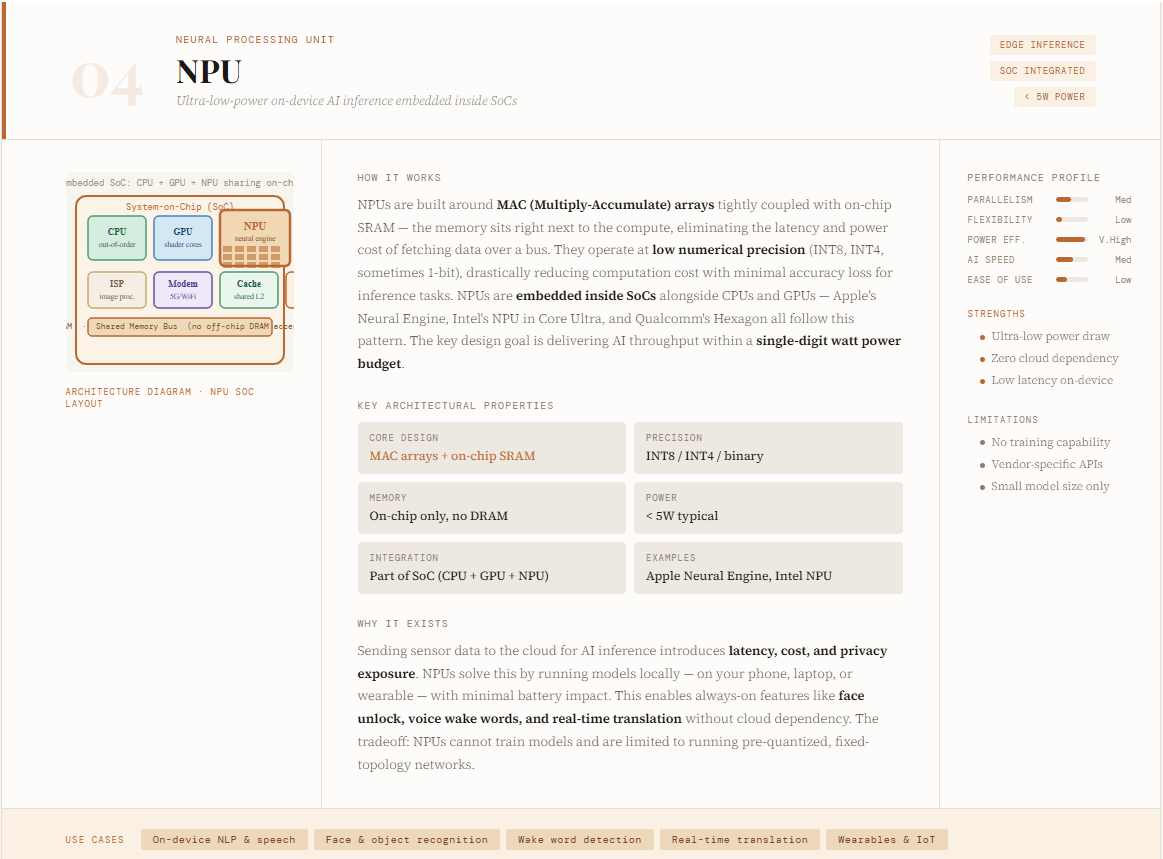

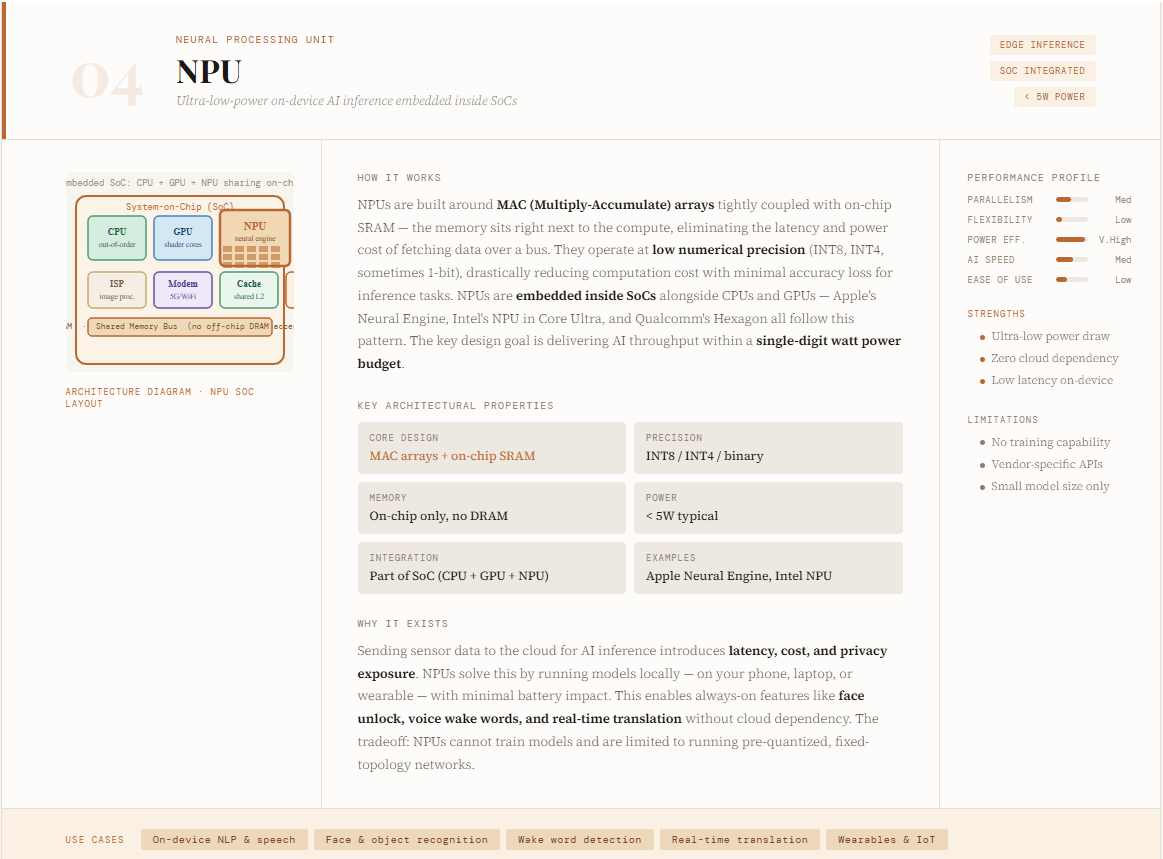

The NPU (Neural Processing Unit) is an AI accelerator specifically designed for efficient, low-power inference, especially at the edge. Unlike GPUs that target large training or data center workloads, NPUs are optimized to run AI models directly on devices such as smartphones, laptops, wearables, and IoT systems. Companies like Apple (with its Neural Engine) and Intel have adopted this architecture to enable real-time AI features such as speech recognition, image processing, and on-device generative AI. The core design is focused on delivering high throughput at low power consumption, often operating within a single-digit-watt power budget.

Architecturally, NPUs are built around neural compute engines made up of MAC (multiply-accumulate) arrays, on-chip SRAM, and optimized data paths that minimize memory movement. They emphasize parallel processing, low-precision arithmetic (such as 8-bit or lower), and tight integration of memory and computation, keeping synaptic weights close to the compute units so that neural networks can be processed efficiently. NPUs are often integrated into system-on-chip (SoC) designs alongside CPUs and GPUs, creating heterogeneous systems.
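Low-precision arithmetic can be illustrated with a small sketch of symmetric 8-bit quantization: floats are mapped to small integers, the multiply-accumulate runs entirely on integers, and one final scaling step recovers an approximate float result. The helper names `quantize` and `int8_dot` are hypothetical, and real NPU toolchains use more elaborate schemes (per-channel scales, calibration, and so on).

```python
def quantize(values, bits=8):
    """Symmetric quantization: signed integers plus a single float scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    return [round(v / scale) for v in values], scale


def int8_dot(weights, activations):
    """Dot product computed with integer MACs, rescaled once at the end."""
    q_w, scale_w = quantize(weights)
    q_a, scale_a = quantize(activations)
    acc = sum(w * a for w, a in zip(q_w, q_a))  # integer-only accumulation
    return acc * scale_w * scale_a


weights = [0.5, -1.0, 0.25]
activations = [2.0, 1.0, -4.0]

exact = sum(w * a for w, a in zip(weights, activations))
approx = int8_dot(weights, activations)
print(exact, approx, abs(exact - approx))
```

The integer result lands close to the floating-point one while every multiplication uses 8-bit operands, which is cheaper in both silicon area and energy than 32-bit floating-point math.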

Their strengths include ultra-low latency, high power efficiency, and the ability to handle AI tasks such as computer vision and NLP locally without cloud dependencies. However, this specialization also means that they are less flexible, are not suitable for general-purpose computing or large-scale training, and often depend on specific vendor ecosystems. Essentially, NPUs bring AI closer to the user, trading raw power for efficiency, responsiveness, and on-device intelligence.

Language Processing Unit (LPU)

The LPU (Language Processing Unit) is a new class of AI accelerator introduced by Groq, designed specifically for ultra-fast AI inference. Unlike GPUs and TPUs, which still retain some general-purpose flexibility, LPUs are designed from the ground up to run large language models (LLMs) with high speed and efficiency. Their innovation lies in removing off-chip memory from the critical path of execution by keeping all weights and data in on-chip SRAM. This greatly reduces latency and avoids common sources of delay such as off-chip memory access, cache misses, and runtime scheduling overhead. As a result, LPUs can deliver faster inference and up to 10x better power efficiency compared to traditional GPU-based systems.

Structurally, LPUs follow a software-first, compiler-driven design with a programmable "assembly line" model, where data flows through the chip in a deterministic, pre-planned manner. Instead of dynamic hardware scheduling (as in GPUs), all operations are scheduled in advance at compile time, ensuring zero execution variance and fully predictable performance. The use of on-chip memory and high-bandwidth data "conveyor belts" eliminates the need for complex caching, routing, and synchronization mechanisms.
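As a rough sketch of what scheduling at compile time means, the example below assigns every operation a fixed start and end cycle before anything runs. It is a toy model under simplifying assumptions (known fixed latencies, unlimited functional units), not Groq's actual compiler; the names `compile_schedule` and `program` and all latency values are hypothetical.

```python
def compile_schedule(ops):
    """Assign fixed cycles to operations ahead of time.

    `ops` is a list of (name, latency_cycles, dependencies), listed so that
    every dependency appears before the operation that needs it.
    Returns {name: (start_cycle, end_cycle)}.
    """
    schedule = {}
    for name, latency, deps in ops:
        # An operation starts as soon as its last dependency has finished.
        start = max((schedule[d][1] for d in deps), default=0)
        schedule[name] = (start, start + latency)
    return schedule


# Hypothetical program with made-up latencies, for illustration only.
program = [
    ("load_weights", 4, []),
    ("load_tokens", 2, []),
    ("matmul", 8, ["load_weights", "load_tokens"]),
    ("activation", 1, ["matmul"]),
]

plan = compile_schedule(program)
print(plan["matmul"])      # (4, 12)
print(plan["activation"])  # (12, 13)
```

Because the plan is fixed before execution, running the same program twice yields the same timing. That is the predictability the deterministic design aims for, in contrast to hardware that decides the order of work at run time.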

However, this design introduces trade-offs: each chip has limited memory capacity, so hundreds of LPUs must be connected together to serve large models. Despite this, the latency and efficiency benefits are significant, especially for real-time AI applications. In many ways, LPUs represent the far end of the AI hardware evolution spectrum, which runs from general-purpose processors (CPUs) to deterministic, highly specialized architectures designed for speed and efficiency.
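A back-of-the-envelope calculation shows why the chip count climbs so quickly. The numbers below are illustrative assumptions rather than figures from this article: a hypothetical 70-billion-parameter model and an assumed 230 MB of SRAM per chip. The estimate counts weights only and ignores activations and other runtime state.

```python
import math


def chips_needed(num_params, bytes_per_param, sram_bytes_per_chip):
    """Minimum chip count so that the weights alone fit in on-chip SRAM."""
    total_bytes = num_params * bytes_per_param
    return math.ceil(total_bytes / sram_bytes_per_chip)


# Illustrative assumptions, not figures from this article.
NUM_PARAMS = 70_000_000_000   # hypothetical 70B-parameter model
SRAM_PER_CHIP = 230 * 10**6   # assumed 230 MB of SRAM per chip

chips_8bit = chips_needed(NUM_PARAMS, 1, SRAM_PER_CHIP)
chips_16bit = chips_needed(NUM_PARAMS, 2, SRAM_PER_CHIP)
print(chips_8bit)   # 305
print(chips_16bit)  # 609
```

Under these assumptions, even 8-bit weights require a few hundred chips, which matches the "hundreds of LPUs" scale described above.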

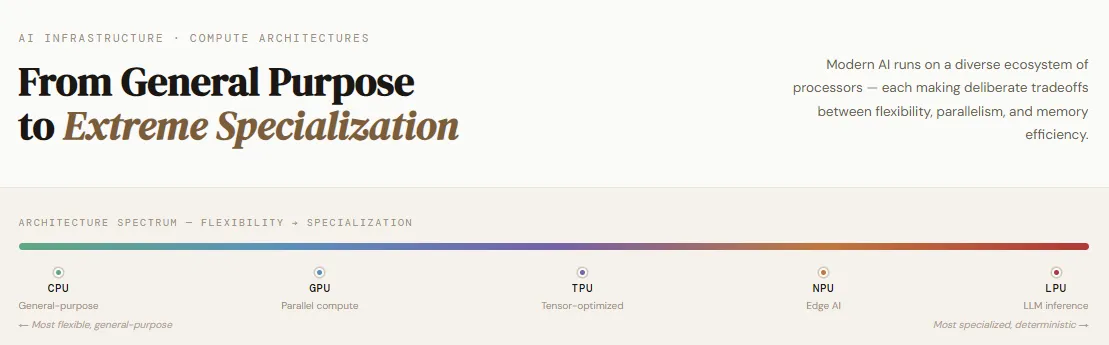

Comparing the Architectures

AI computing architectures exist on a spectrum, from flexible to extremely specialized, with each designed for a different role in the AI lifecycle. CPUs sit at the most flexible end, handling general-purpose logic, orchestration, and system control, but they struggle with large-scale parallel math. GPUs shift toward parallelism, using thousands of cores to accelerate matrix operations, which makes them the dominant choice for training deep learning models.

TPUs, developed by Google, specialize further in tensor operations with systolic array architectures, bringing high efficiency to both training and inference for structured AI workloads. NPUs push optimization to the edge, enabling low-power, real-time inference on devices like smartphones and IoT systems by trading raw power for energy efficiency and low latency. Finally, LPUs, introduced by Groq, represent extreme specialization: they are built exclusively for high-speed, deterministic AI inference with on-chip memory and compiler-controlled execution.

Together, these architectures are not replacements for one another but complementary parts of a heterogeneous system, where each type of processor is chosen based on specific performance, scale, and efficiency requirements.

I am a Civil Engineering graduate (2022) from Jamia Millia Islamia, New Delhi, and I am very interested in Data Science, especially neural networks and their applications in various fields.