TinyFish AI Releases a Full Web Infrastructure Platform for AI Agents: Search, Download, Browser, and Agent Under One Key API.

AI agents struggle with tasks that require interacting with the live web — fetching a competitor’s pricing page, extracting structured data from a JavaScript-heavy dashboard, or automating multi-step workflows on a real site. The tools are fragmented, requiring teams to integrate different providers for search, browser automation, and content retrieval.

TinyFish, a Palo Alto-based startup that has previously deployed autonomous web agents, is launching what it describes as a complete infrastructure platform for AI agents running on the live web. This launch presents four products combined under one API key and one credit system: Web Agent, Web Search, Web Browseragain Downloading the Web.

What TinyFish ships with

Here’s what each product does:

- Web Agent – Implements multi-step automated workflows for real websites. The agent navigates sites, fills out forms, clicks through the flow, and returns structured results without requiring manual steps.

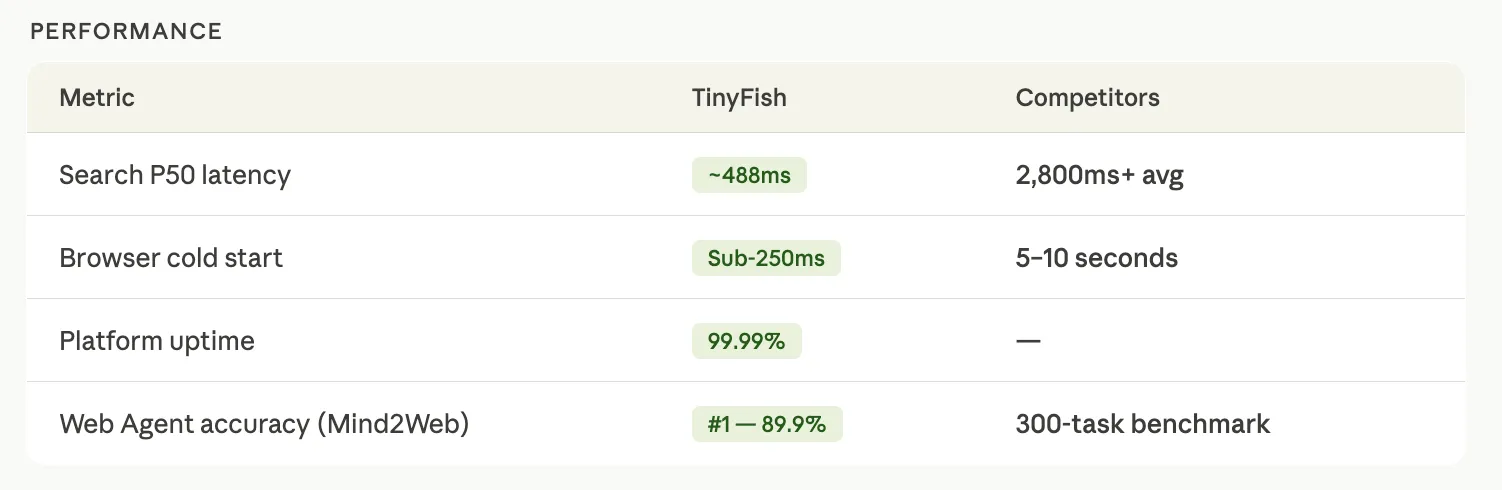

- Web Search — Returns structured search results as pure JSON using a custom Chromium engine, with a P50 latency of around 488ms. Competitors in this space are averaging 2,800ms for the same task.

- Web Browser – Provides private Chrome sessions managed via the Chrome DevTools Protocol (CDP), with a cold start of less than 250ms. Matches usually last 5–10 seconds. The browser includes 28 anti-bot methods built at the C++ level — not through JavaScript injection, which is a more common and visible method.

- Downloading the Web – Converts any URL to pure Markdown, HTML, or JSON with full browser rendering. Unlike the native fetching tools built into most AI coding agents, TinyFish Fetch extracts the irrelevant markup – CSS, scripts, navigation, ads, footer – and returns only the content needed by the agent.

Token Issue in Agent Pipelines

One of the problems with consistent performance in agent pipelines is the contamination of the context window. When an AI agent uses a standard web crawler, it typically pulls the entire page — including thousands of navigation elements tokens, ad code, and boilerplate markup — and places it all in the context model window before accessing the actual content.

TinyFish Fetch addresses this by rendering the page to the full browser and returning only plain text content such as Markdown or JSON. The company’s measurements show CLI-based operations using approximately 100 tokens per operation compared to approximately 1,500 tokens when migrating the same workflow through MCP – an 87% reduction per operation.

Beyond token counting, there are architectural differences to understand: MCP functions return output directly to the agent’s context window. The TinyFish CLI writes output to a file system, and the agent only reads what it needs. This keeps the context window clean for all multi-step operations and enables usability with native Unix pipes and redirects – something not possible with sequential MCP round trips.

For complex multi-step tasks, TinyFish reports 2× higher task completion rates using CLI + Skills compared to MCP-based execution.

CLI and Agent Capability system

TinyFish ships two developer-facing components around API endpoints.

I The CLI includes with one command:

npm install -g @tiny-fish/cliThis gives the terminal access to all four endpoints – Search, Download, Browser, and Agent – directly from the command line.

I Agent skill is a markup instruction file (SKILL.md) that teaches AI coding agents — including Code Claude, Cursor, Codex, OpenClaw, and OpenCode — how to use the CLI. Install it with:

npx skills add --skill tinyfishOnce installed, the agent learns when and how to call the TinyFish endpoint without SDK integration or configuration. A developer can ask their coding agent to “find competitor values from these five sites,” and the agent automatically recognizes the TinyFish capability, calls the appropriate CLI commands, and writes structured output to a file system – without the developer writing assembly code.

The company also notes that MCP is still supported. The point is that MCP is worth acquiring, while CLI + Skills is the recommended method for heavy-duty, multi-step web development.

Why Integrated Stack?

TinyFish is built for search, download, browser, and agent in-house. This is a noticeable difference from other competitors. For example, Browserbase uses Exa to power its Search front end, which means the layer is not proprietary. Firecrawl offers search, visibility, and an agent endpoint, but the agent endpoint has reliability issues for many tasks.

The infrastructure argument is not just about avoiding vendor dependency. If each layer of the stack is managed by the same team, the system can only prepare for one result: whether the task is completed. If a TinyFish agent succeeds or fails in its search and download operations, the company receives an end-to-end signal at every step – what was searched, what was downloaded, and where the failure occurred. Companies in the search or download layer running on a third-party API cannot access this signal.

There are also practical costs that multi-supplier groups encounter. Search finds a page that the download layer cannot serve. Downloading returns content that the agent cannot parse. Browser sessions drop context between steps. The result is custom glue code, logic, callback handlers, and validation layers — a piece of engineering work. The combined stack removes the component boundaries where this failure occurs.

The platform also maintains session consistency at every step: same IP, same fingerprint, same cookies for all workflows. Different tools operating independently appear to the target site as multiple unrelated clients, increasing the likelihood of detection and session failure.

Key metrics

Key Takeaways

- TinyFish moves from a single web agent to a four-product environment – Web Agent, Web Search, Web Browser, and Web Download – all accessible under one API key and one credit system, eliminating the need to manage multiple providers.

- The combination of CLI + Agent Capability allows AI code agents to use the live web automatically – install and agents like Claude Code, Cursor, and Codex automatically know when and how to call the TinyFish endpoint, without manually compiling code.

- CLI-based jobs generate 87% fewer tokens per job than MCP, and write output directly to the file system instead of dumping it in the agent’s context window – keeping the context clean throughout the multi-step workflow.

- Every layer of the stack – Search, Download, Browser, and Agent – is built in-house, providing end-to-end signals when an operation succeeds or fails, a data feedback loop that can be replicated by integrating third-party APIs.

- TinyFish maintains a single session identity for all workflows – the same IP, fingerprint, and cookies – and different tools appear to target sites as many unrelated clients, increasing the risk of detection and failure rates.

Getting started

TinyFish offers 500 free spins with no credit card required at tinyfish.ai. The open source cookbook and Skill files are available at github.com/tinyfish-io/tinyfish-cookbook, and the CLI documentation is at docs.tinyfish.ai/cli.

Note: Thanks to the leadership at Tinyfish for supporting and providing the information for this article.