Your AI Doesn’t Know What “Income” Means. That’s a Bigger Problem Than You Think.

Here’s a scenario that plays out all the time in enterprise software teams. A product manager asks the company’s AI assistant: “Who are our top customers this quarter?” The system returns a clean, ranked list. It looks fine. Everyone moves on.

Except that the product team defines “top” by engagement. Finance defines it by net profit. Marketing defines it by deal size. The AI chose one interpretation, presented it with complete confidence, and no one noticed until a strategic decision had been made based on numbers that meant something different to everyone in the room.

This is not hallucination in the way people usually talk about it. The system did not malfunction. It simply made a choice about meaning that was never its to make.

The Real Problem Is Not the Model

There is a widespread belief in enterprise AI adoption that if you choose the right model, tune it carefully, and feed it good data, you will get reliable results. That assumption misses the actual failure mode.

LLMs are remarkably good at language. They are not good at your organization’s definitions. Ask your AI what your churn rate is, and watch what happens. The model does not know whether you measure churn at the subscription level or the customer level. It doesn’t know whether to count downgrades or ignore them. It doesn’t know if business accounts with multiple seats are treated differently. These are not answers buried in a document somewhere. They are organizational decisions that live in tribal knowledge, team agreements, and data model comments written two years ago by someone who has since left the company.
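To make the churn ambiguity concrete, here is a minimal sketch that computes churn two ways over the same records. The `Subscription` type, the field names, and the toy data are all hypothetical, invented for illustration rather than taken from any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subscription:
    customer_id: str
    active_at_start: bool
    active_at_end: bool


# Hypothetical toy data: customer A holds three subscriptions and cancels one,
# customer B cancels its only subscription, customer C keeps its only one.
SUBSCRIPTIONS = [
    Subscription("A", True, True),
    Subscription("A", True, True),
    Subscription("A", True, False),
    Subscription("B", True, False),
    Subscription("C", True, True),
]


def subscription_churn(subs):
    """Share of subscriptions active at period start that ended during it."""
    start = [s for s in subs if s.active_at_start]
    if not start:
        return 0.0
    lost = [s for s in start if not s.active_at_end]
    return len(lost) / len(start)


def customer_churn(subs):
    """Share of customers who have no active subscription left at period end."""
    start = {s.customer_id for s in subs if s.active_at_start}
    if not start:
        return 0.0
    retained = {s.customer_id for s in subs if s.active_at_start and s.active_at_end}
    return len(start - retained) / len(start)


print(f"subscription-level churn: {subscription_churn(SUBSCRIPTIONS):.1%}")
print(f"customer-level churn:     {customer_churn(SUBSCRIPTIONS):.1%}")
```

On this toy data the subscription-level figure is 2 of 5 and the customer-level figure is 1 of 3. Both are defensible answers to "what is our churn rate?", and nothing in the data itself says which one the organization means.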

The model will guess. And a guess, delivered with confidence, is a liability.

Embeddings Don’t Fix This

The usual answer to this problem is better retrieval. Embed your documents, pull the most relevant pieces, give the model more context. It’s a reasonable idea and a small improvement. But it doesn’t solve the underlying issue.

Embeddings measure how close two pieces of text are in vector space; they say nothing about whether a given definition is right for your organization. “Revenue” and “profit” are neighbors in embedding space because they appear together frequently in financial statements. In your financial reporting system, conflating them is a serious error. No amount of retrieval solves that, because the correct answer is not in any document. It’s in decisions your finance team made, possibly years ago, about how to define things, and those decisions probably were never written down in a form a machine could use.

The same structural problem appears everywhere. “Active user” means something different to your engineering team (made an API call) than to your product team (completed a task). “Conversion” means a successful HTTP request to one team and a subscription-to-payment transition to another. “Engagement” is event frequency in one dashboard and session depth in another. Retrieval does not resolve ambiguity of meaning. It just returns more text that contains the same ambiguity.

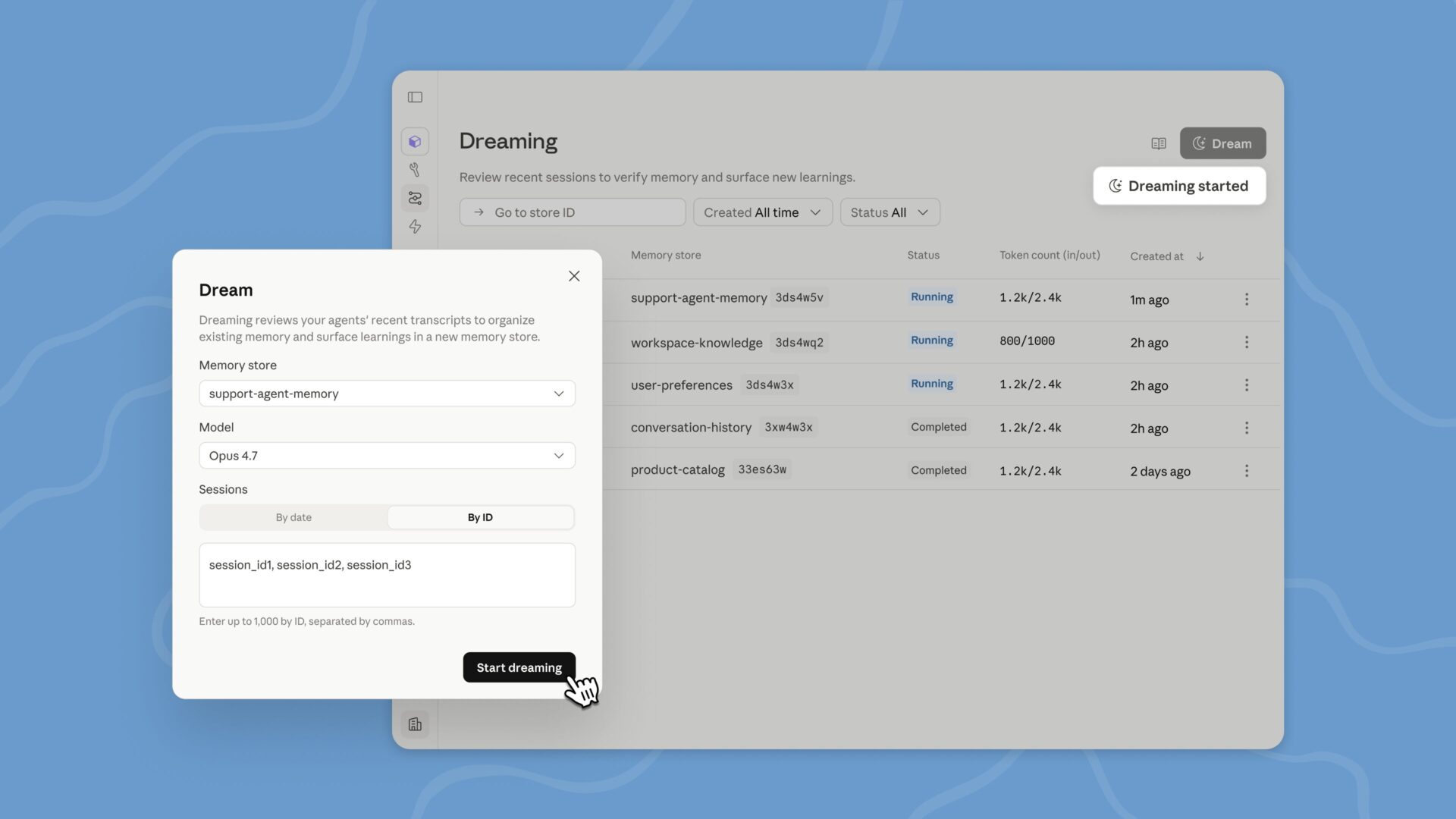

Figure 1: Without a semantic layer, LLM output is plausible but inconsistent. With one, it is grounded and consistent.

What Should Actually Happen

The answer is a semantic layer: a structured, machine-readable representation of what your organization’s terms actually mean. Not a glossary. Not better documentation. A formal encoding of entities, relationships, metrics, and business rules that sits between your data and your AI system, so that when someone asks about active users, active accounts, or top customers, the system doesn’t have to guess.

This is not a new idea in the data world. Tools like dbt and Looker have been used in business intelligence for years. What’s new is the pressure to extend the idea to AI pipelines, and the tooling is maturing: the dbt Semantic Layer now supports direct AI pipeline integration, and platforms like Cube are building native LLM interfaces for this purpose.

A practical starting point for many teams is the schema-based approach: YAML or JSON configuration files, version-controlled in git and injected at prompt time. It is less rigorous than a formal ontology, but more maintainable, and often sufficient. If you already have a BI semantic layer, your definition work is mostly done. The challenge is making those definitions queryable when the AI needs them.
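The schema-based approach can be sketched in a few lines, assuming a JSON definitions file. Everything here is hypothetical: the file layout, the `owner` field, the `active_user` definition, and the helper names `relevant_definitions` and `build_prompt` are illustrative choices, not the format of dbt, Cube, or any other tool.

```python
import json

# Hypothetical contents of a version-controlled file such as semantic_layer.json.
SEMANTIC_LAYER_JSON = """
{
  "metrics": {
    "top_customer": {
      "expression": "revenue_last_90_days > 50000 AND active_subscription = true",
      "owner": "finance"
    },
    "active_user": {
      "expression": "completed_tasks_last_30_days >= 1",
      "owner": "product"
    }
  }
}
"""


def relevant_definitions(question, layer):
    """Pick metrics whose name (underscores read as spaces) appears in the question.

    Naive substring matching, used only to keep the sketch short.
    """
    q = question.lower()
    return {
        name: spec
        for name, spec in layer["metrics"].items()
        if name.replace("_", " ") in q
    }


def build_prompt(question, layer):
    """Prepend the matching definitions to the user's question."""
    lines = ["Use only these definitions when answering:"]
    for name, spec in relevant_definitions(question, layer).items():
        lines.append(f"- {name}: {spec['expression']} (owner: {spec['owner']})")
    lines.append(f"Question: {question}")
    return "\n".join(lines)


layer = json.loads(SEMANTIC_LAYER_JSON)
prompt = build_prompt("Who are our top customers this quarter?", layer)
print(prompt)
```

The lookup is deliberately crude. The point is only that the definition reaches the model from a reviewed, versioned file rather than from whatever the retriever happens to surface.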

The Harder Problem Is Organizational

Here’s what most architecture posts leave out: implementing the technology is the easy part. Getting three departments to agree on what “active” means is not. Building and maintaining a semantic layer forces conversations that organizations often avoid, and exposes disagreements that have been quietly producing inconsistent results for years. That’s uncomfortable. It’s also the point.

There is a simple test I use: if a new hire would need to read internal documentation to understand what a business term means, that term belongs in the semantic layer, not in the prompt.

The next phase of enterprise AI is not about which model you use. It’s about how well your organization has structured its own meaning. From prompt engineering to context engineering. From data pipelines to semantic pipelines. Teams that get this right will produce AI results that don’t just sound right; they will be right. In business settings, sounding right is not enough. If your AI is wrong by definition, it is not reliable in practice.

Instead of asking “Who are our top customers?”, define:

TopCustomer = revenue_last_90_days > $50K AND active_subscription = true
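As a rough illustration, the definition above translates directly into an executable rule. The `Customer` type and the sample records are hypothetical; only the threshold and the two conditions come from the definition itself.

```python
from dataclasses import dataclass

REVENUE_THRESHOLD_USD = 50_000  # the $50K threshold from the definition above


@dataclass(frozen=True)
class Customer:
    name: str
    revenue_last_90_days: float
    active_subscription: bool


def is_top_customer(customer):
    """TopCustomer = revenue_last_90_days > $50K AND active_subscription = true."""
    return (
        customer.revenue_last_90_days > REVENUE_THRESHOLD_USD
        and customer.active_subscription
    )


# Hypothetical records, invented for illustration.
customers = [
    Customer("Acme", 72_000, True),
    Customer("Globex", 90_000, False),   # high revenue, but subscription lapsed
    Customer("Initech", 50_000, True),   # exactly at the threshold: strict > excludes it
]

top = [c.name for c in customers if is_top_customer(c)]
print(top)
```

Edge cases such as the exact-threshold customer are where an explicit definition earns its keep: the rule answers them the same way every time, for every team.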